Last year, after I started a search for a good out-of-the-box all-flash-storage setup for a video editing NAS, I floated the idea of an all-M.2 NVMe NAS to ASUSTOR. I am not the first person with the idea, nor is ASUSTOR the first prebuilt NAS company to build one (that honor goes to QNAP, with their TBS-453DX).

But I do think the concept can be executed to suit different needs—like in my case, video editing over a 10 Gbps network with minimal latency for at least one concurrent user with multiple 4K streams and sometimes complex edits, without lower-bitrate transcoded media (e.g. ProRes RAW).

And so, we now have two new entrants from ASUSTOR, the 6-M.2-slot Flashstor 6 (FS6706T) with dual 2.5 Gbps networking for $449, and the 12-M.2-slot Flashstor 12 Pro (FS6712X) with 10 Gbps networking for $799.

Both have identical exteriors save for the RJ45 Ethernet ports: the 6-bay unit has two 2.5G ports (pictured first), while the 12-bay unit has a single 10G port (pictured second):

The units both run on an Intel Celeron N5105 processor, and have HDMI, optical TOSlink S/PDIF, and USB ports, along with a Kensington lock slot.

ASUSTOR sent me both units for testing (though I will soon pass them along to Raid Owl so he can continue the testing!), and I've only had a chance for a quick teardown so far—I wasn't yet able to initialize the unit (more on that later).

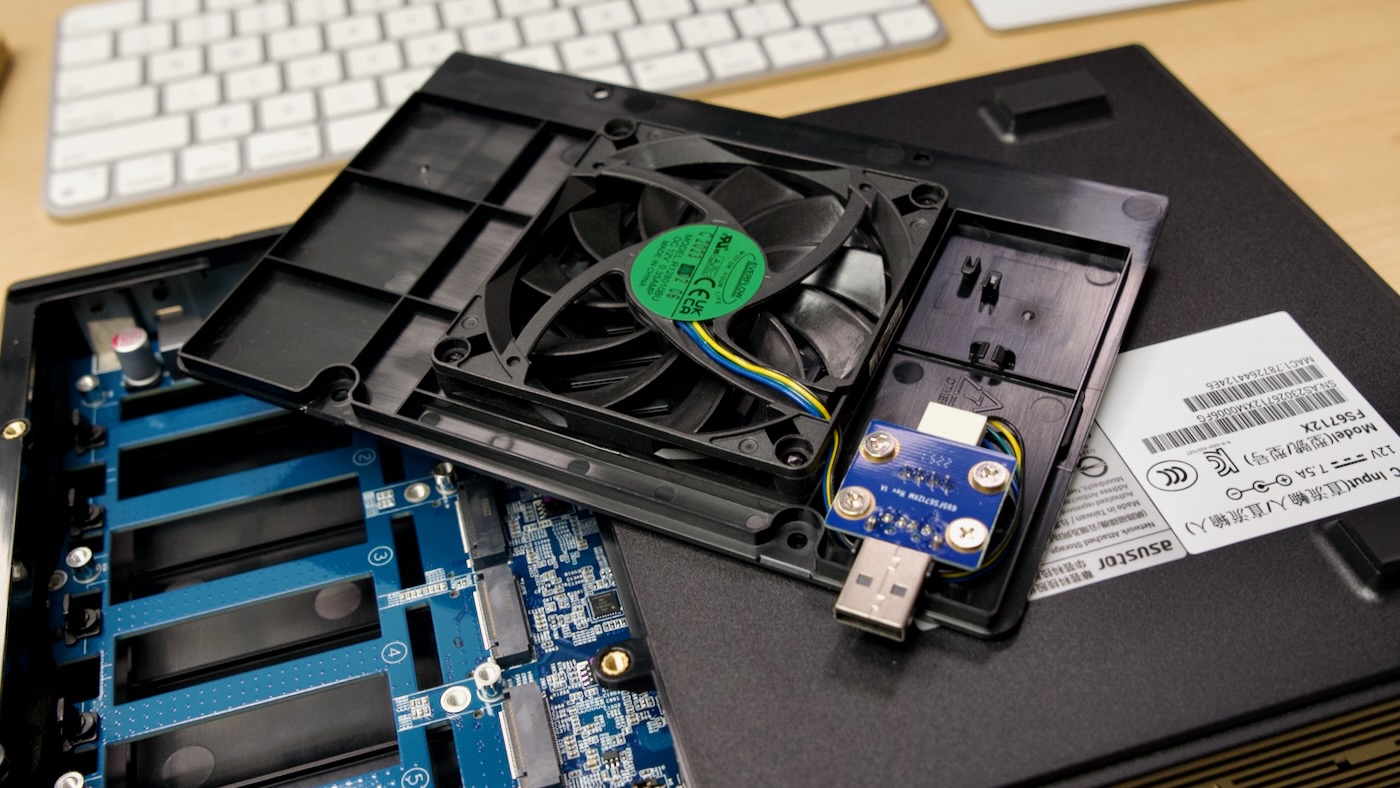

If you flip one over, you can see the lone fan that's directly under the M.2 NVMe slots (and pushes air through slots in the PCB across a massive heatsink covering the CPU):

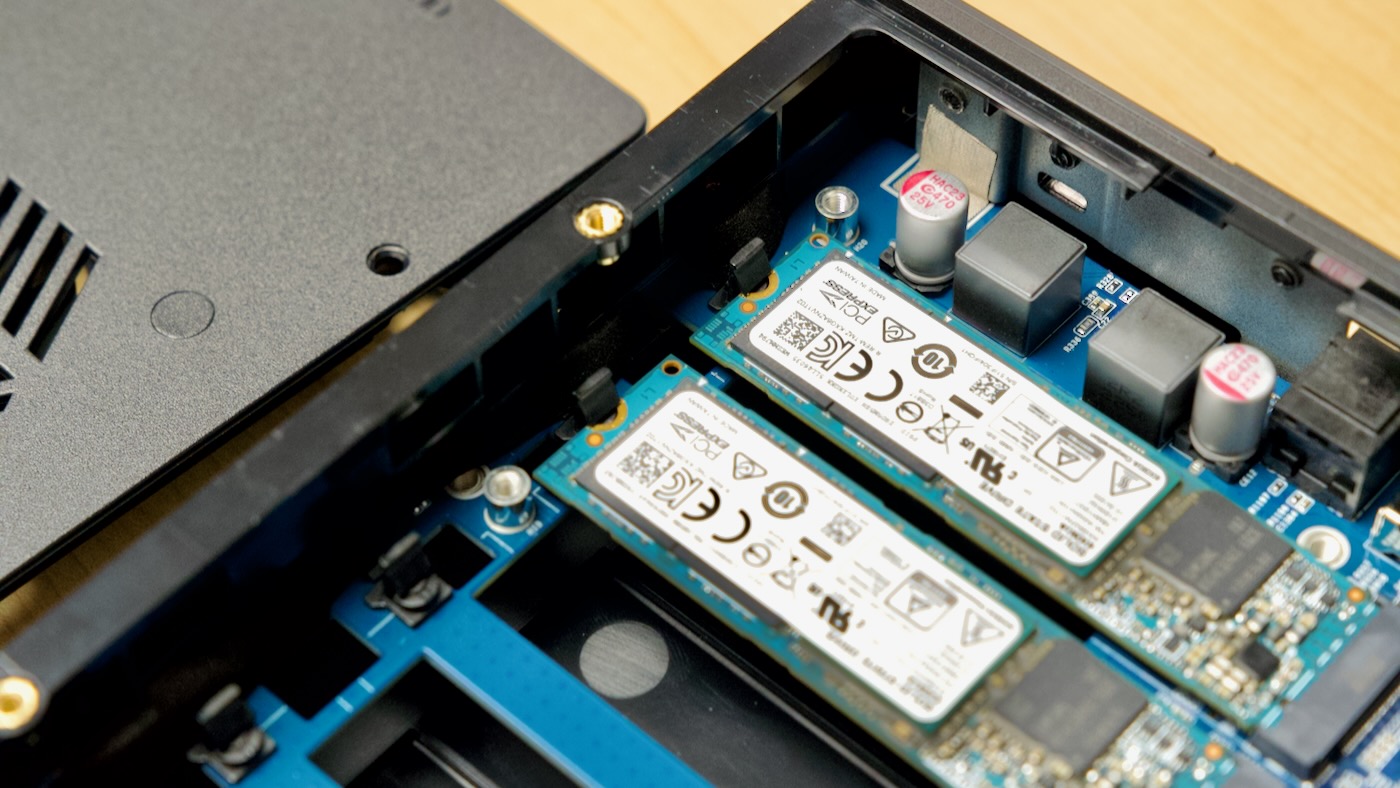

Gaining access to the M.2 slots requires the removal of four small screws. You slide the fan cover out, and that also disconnects a little USB-A port that powers the fan itself:

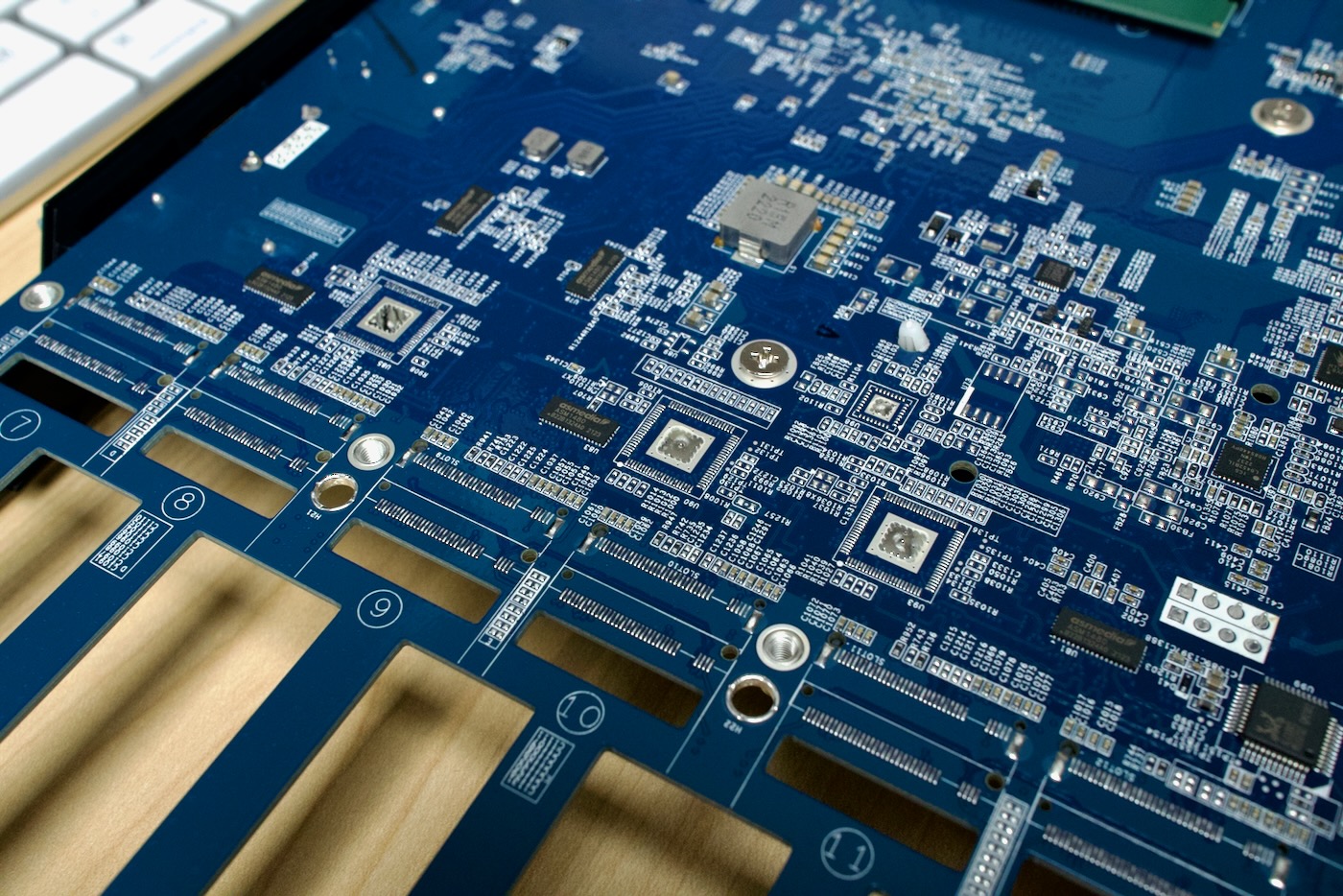

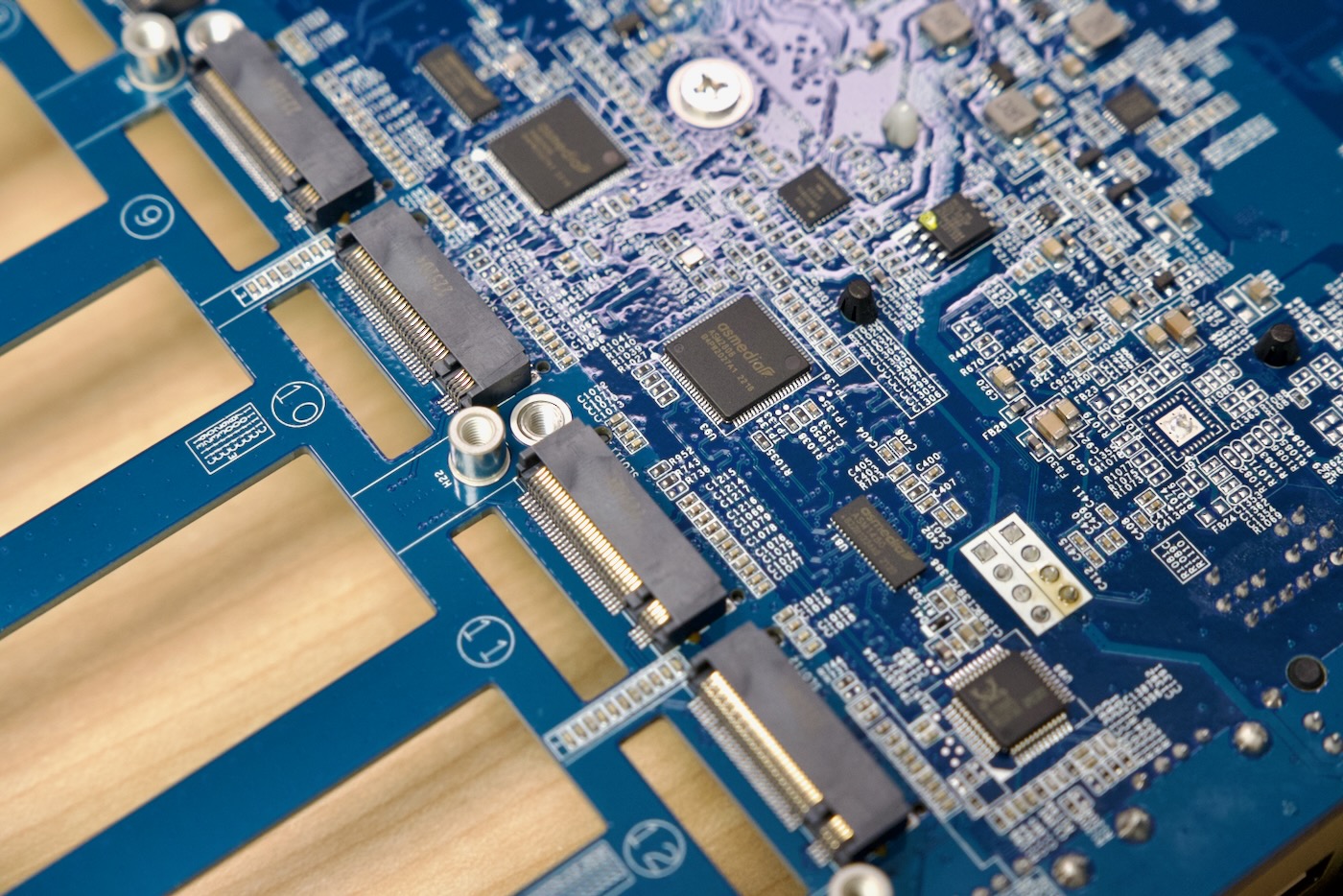

And underneath, you can find M.2 slots labeled 1-6, and peeking closely above those slots, there are a number of ASMedia ASM1480 PCIe multiplexers:

Those multiplexers seem to be the primary means of splitting up PCIe traffic on the 6-bay unit. The N5105 only has 8 PCIe Gen 3 lanes, so to support two 2.5G NICs (in the 6-bay unit) or one 10G NIC (in the 12-bay unit) plus all those M.2 slots, you have to mux the traffic.
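To put rough numbers on that lane shortage, here is a minimal sketch of the arithmetic in Python. It uses only theoretical PCIe Gen 3 line rates (8 GT/s per lane with 128b/130b encoding) and the 8-lane figure from above. How ASUSTOR actually divides those lanes between the NICs and the M.2 slots is not something I know yet, so the function names and the "every slot at x4" scenario are purely illustrative assumptions.

```python
# Rough PCIe Gen 3 bandwidth budget for an 8-lane CPU like the N5105.
# Assumption: these are theoretical line rates, not measurements, and the
# real lane allocation inside the Flashstor is unknown.

GEN3_GT_PER_S = 8.0      # raw PCIe Gen 3 signalling rate per lane (GT/s)
ENCODING = 128 / 130     # 128b/130b line encoding efficiency
CPU_LANES = 8            # lane count quoted in the post


def lane_gbps() -> float:
    """Usable bandwidth of one Gen 3 lane in Gbit/s, before protocol overhead."""
    return GEN3_GT_PER_S * ENCODING


def total_gbps(lanes: int = CPU_LANES) -> float:
    """Aggregate bandwidth of the given number of Gen 3 lanes in Gbit/s."""
    return lanes * lane_gbps()


def lanes_needed_at_x4(drives: int) -> int:
    """Lanes required if every M.2 slot were wired as a full x4 link."""
    return drives * 4


if __name__ == "__main__":
    print(f"one Gen 3 lane:        {lane_gbps():.2f} Gbit/s")
    print(f"all {CPU_LANES} CPU lanes:       {total_gbps():.2f} Gbit/s")
    for bays in (6, 12):
        print(f"{bays:>2} slots at x4 each:   {lanes_needed_at_x4(bays)} lanes")
```

Even before the NICs get a lane, 6 slots at x4 would want 24 lanes and 12 slots would want 48, so each drive necessarily ends up with a narrower or shared link.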

If you flip over the 6-bay unit and pop off the top cover, you reveal a bunch of unpopulated pads for the additional M.2 slots, along with some pads for extra ICs:

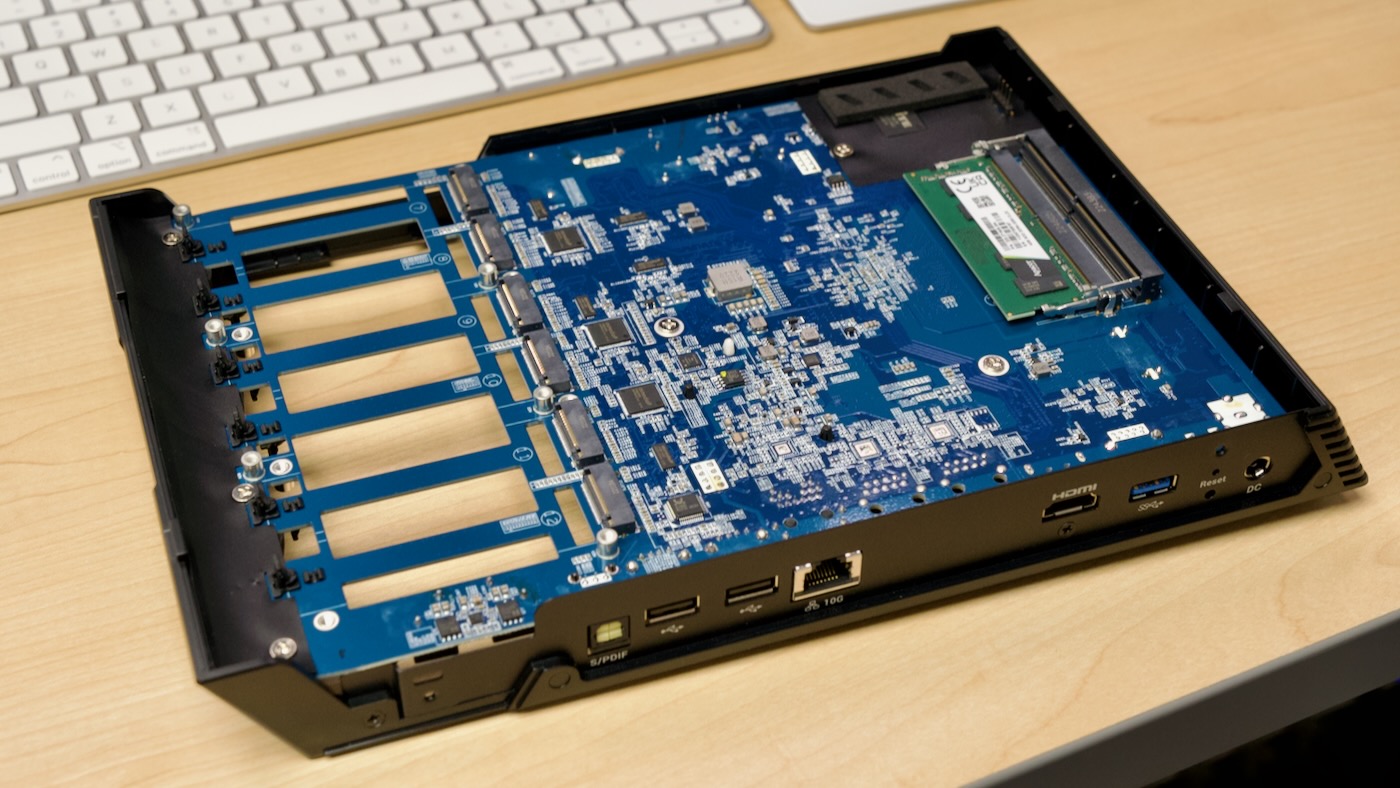

On the 12-bay unit, those ICs are populated, and they are three of ASMedia's ASM2806 PCI Express switch chips:

The 12-bay unit has an extra heatsink over the 10 Gbps NIC chip, while the 6-bay unit uses two 2.5 Gbps Realtek RTL8125GB NICs.

Also on the topside are two DDR4 SODIMM slots. The units both come with 4GB of Apacer DDR4 3200 CL22 RAM in one of the slots. The N5105 supports up to 16GB of RAM total, and though Patrick from Serve The Home was able to get 64 GB of RAM recognized, he encountered errors as more than 40GB of data was cached in RAM. I would stick to the 16GB rated maximum if you want the best stability.

The M.2 slots each have a little plastic retention tab, making installation and removal of drives extremely simple (easier even than the little plastic twisty mechanism employed by nicer motherboards these days). After the drive clicks in, I don't have any worry it would move even with a modest drop of the NAS.

After powering it up, I noticed a strong red motif emblazoned around the power button on the side, along with the four status LEDs on the top lighting up.

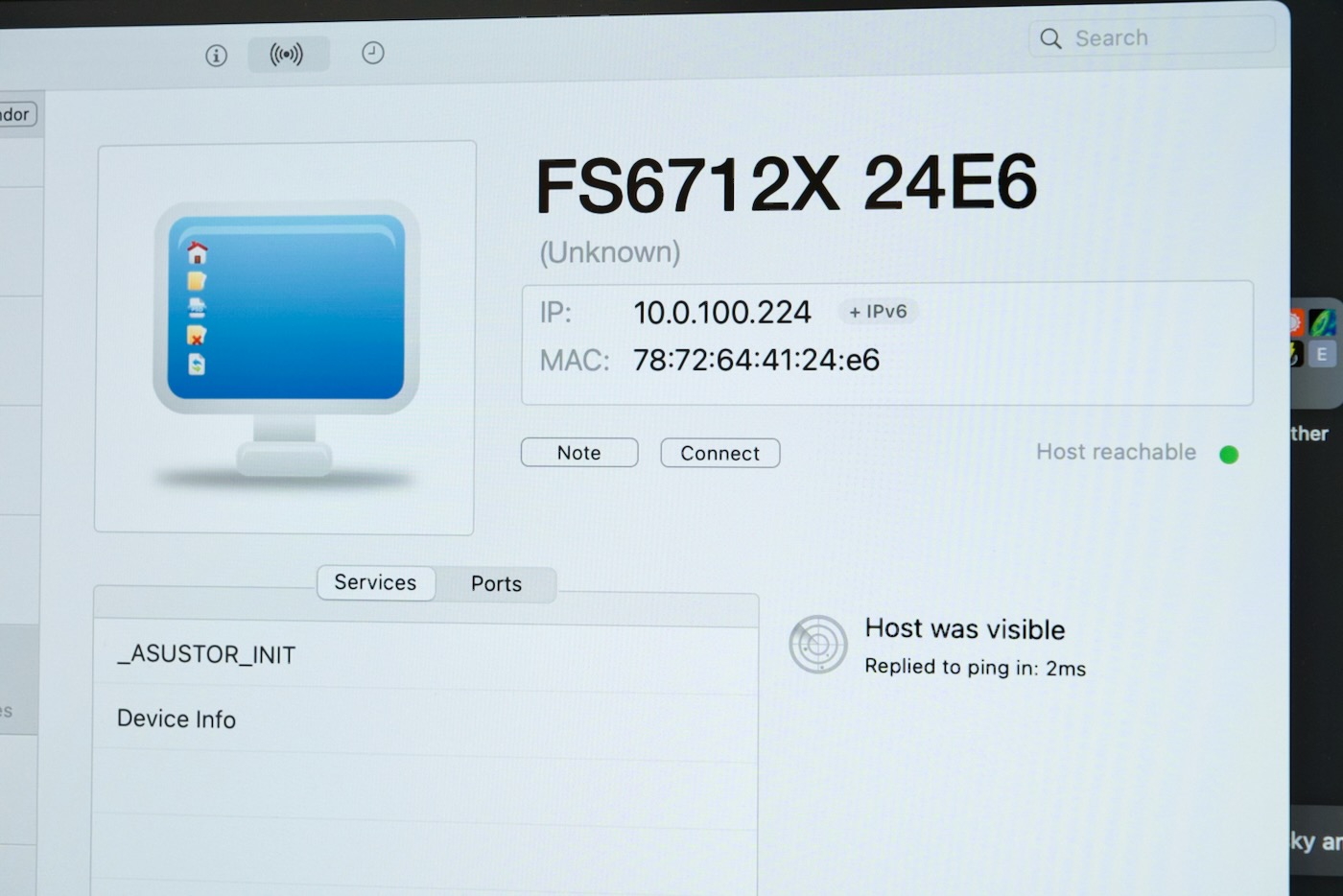

With the unit powered up, I fired up iNet Network Scanner and found the device on the network, with the device name "FS6712X 24E6". I could connect via SSH or HTTP on port 8000, so I did both! While I was putzing around trying to initialize the NAS with two Kioxia XG6 SSDs, I also logged in with username and password admin, and started exploring:

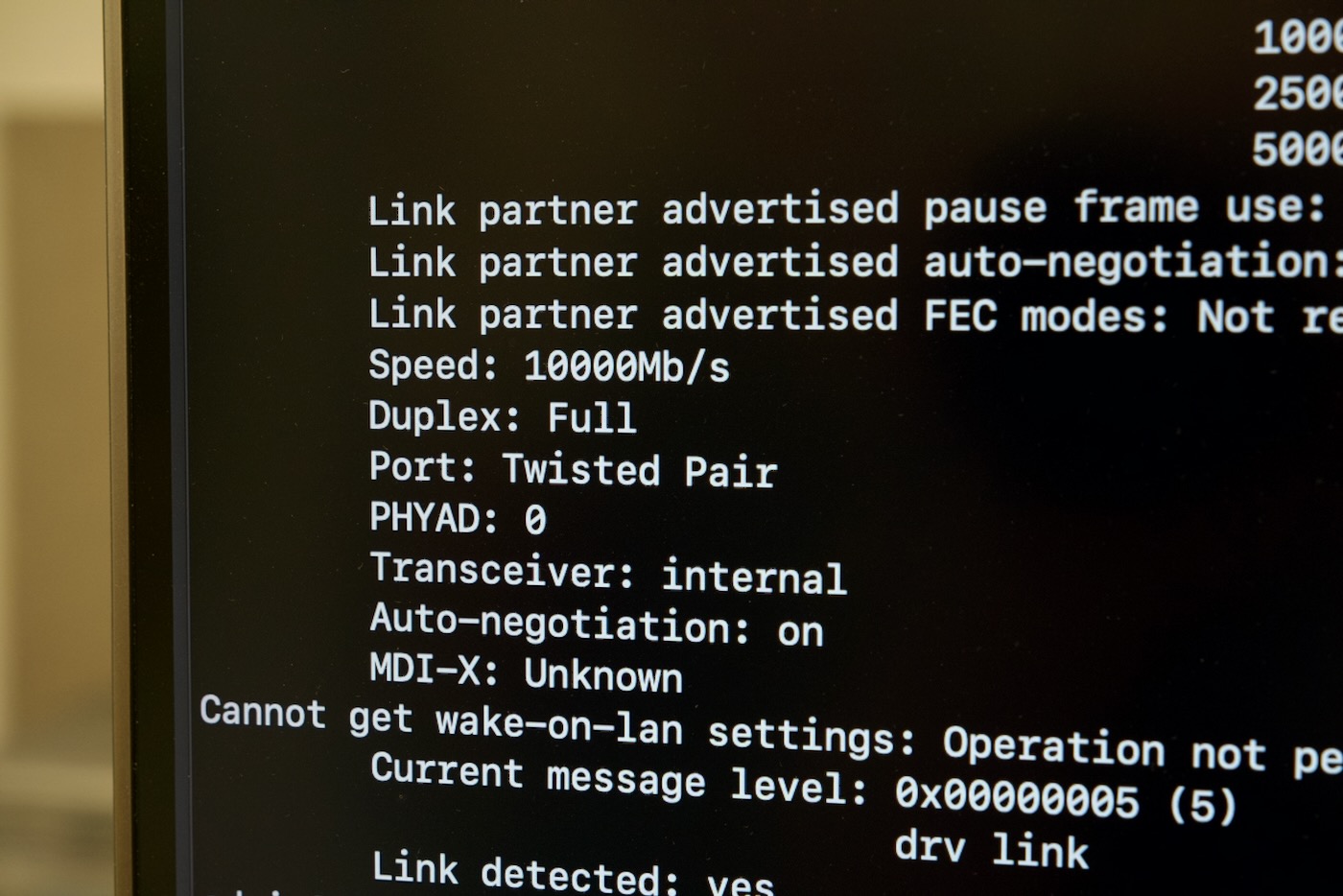

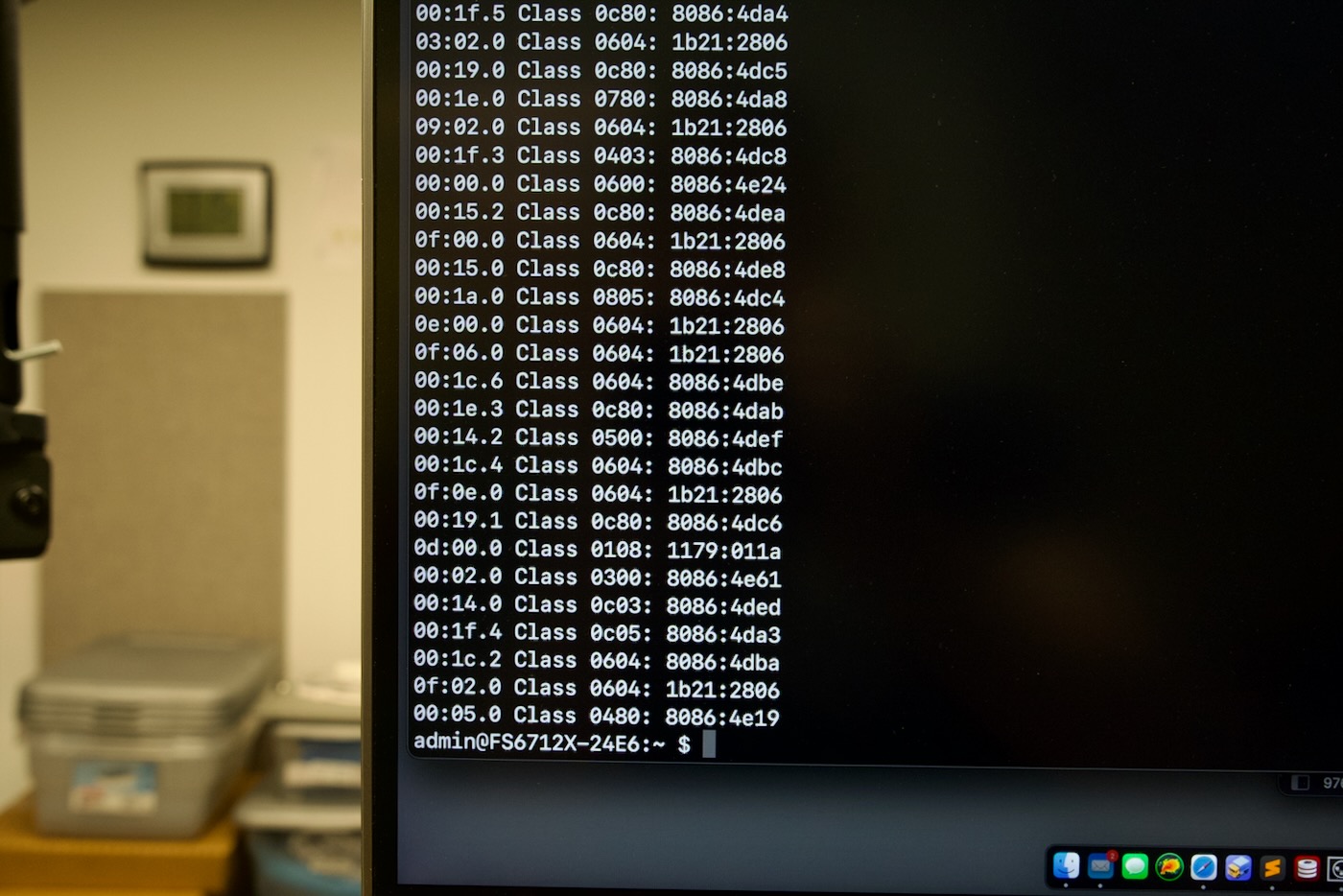

`ethtool eth0` reported a full-duplex 10 Gbps link, so I tried `lspci -vvvv`. But whatever flavor of mini Linux this box uses (it runs a Linux fork from ASUSTOR called ADM, or ASUSTOR Data Manager), it only reports IDs, and I couldn't pull more info than that.
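Bare `vendor:device` IDs can still be decoded by hand on another machine. The sketch below is one way to do it in Python. It assumes each line of output contains a four-digit hex vendor ID and device ID separated by a colon, and it only knows a tiny hand-picked set of vendors rather than the full `pci.ids` database. The sample lines and their device IDs are invented for illustration and are not output from the Flashstor.

```python
import re

# Small, hand-picked subset of PCI vendor IDs (not a full pci.ids database).
KNOWN_VENDORS = {
    "8086": "Intel",
    "10ec": "Realtek",
    "1b21": "ASMedia",
    "1d6a": "Aquantia",
}

# Matches a "vvvv:dddd" vendor:device pair of four hex digits each.
ID_PAIR = re.compile(r"\b([0-9a-fA-F]{4}):([0-9a-fA-F]{4})\b")


def decode_line(line):
    """Return (vendor_id, device_id, vendor_name) for one line, or None."""
    match = ID_PAIR.search(line)
    if match is None:
        return None
    vendor_id = match.group(1).lower()
    device_id = match.group(2).lower()
    return vendor_id, device_id, KNOWN_VENDORS.get(vendor_id, "unknown")


if __name__ == "__main__":
    # Hypothetical sample lines; the device IDs are placeholders.
    sample = [
        "00:1c.0 Class 0604: 8086:1234",
        "02:00.0 Class 0604: 1b21:abcd",
    ]
    for line in sample:
        print(decode_line(line))
```

Pasting the real output into `sample` would at least show which vendor sits behind each bus address, even without the verbose capability dump.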

I also tried plugging in the HDMI output, but at least for now, all I see is a blinking cursor:

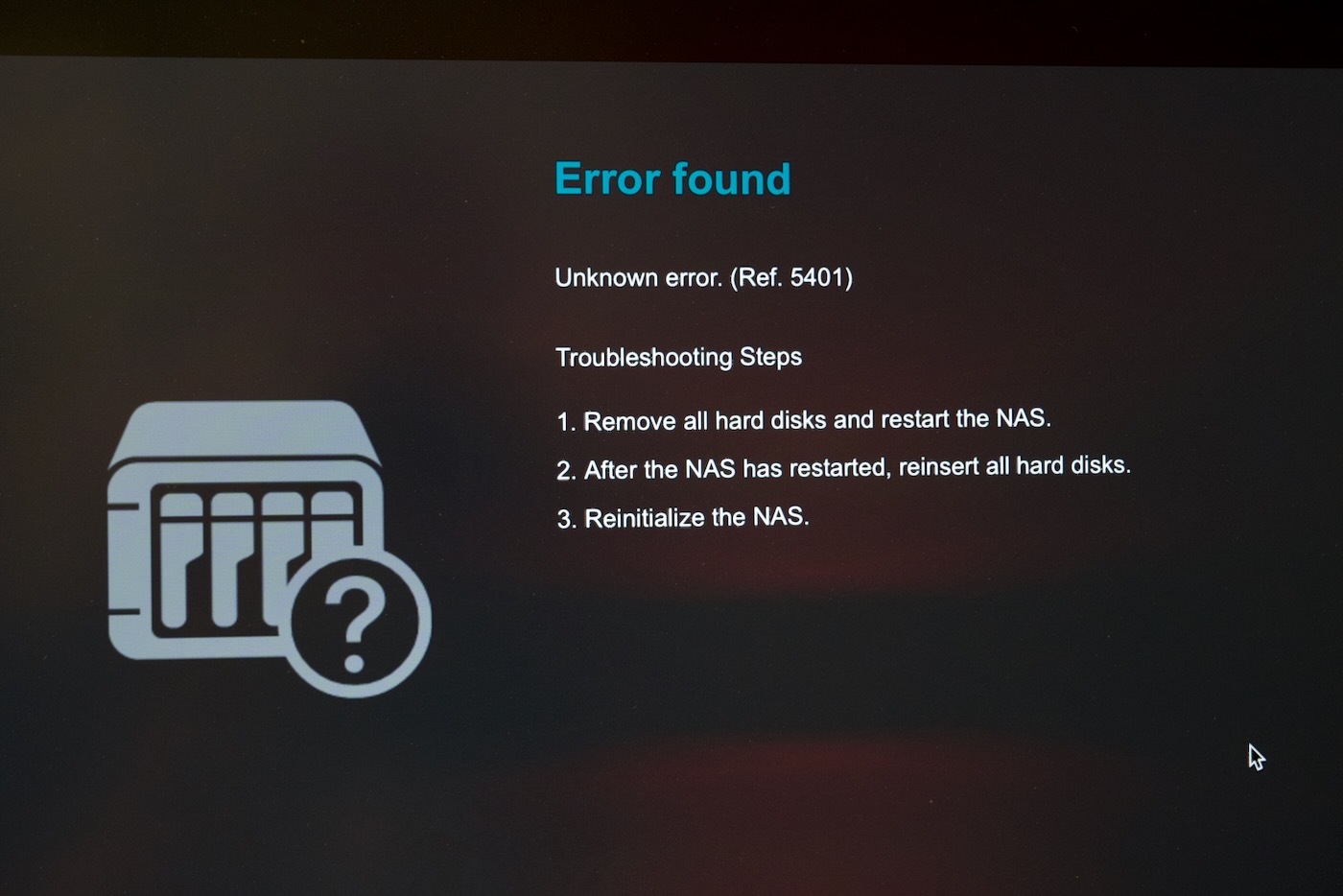

Maybe there will be some sort of UI after initialization. Speaking of initialization, I eventually got the following error message during that process:

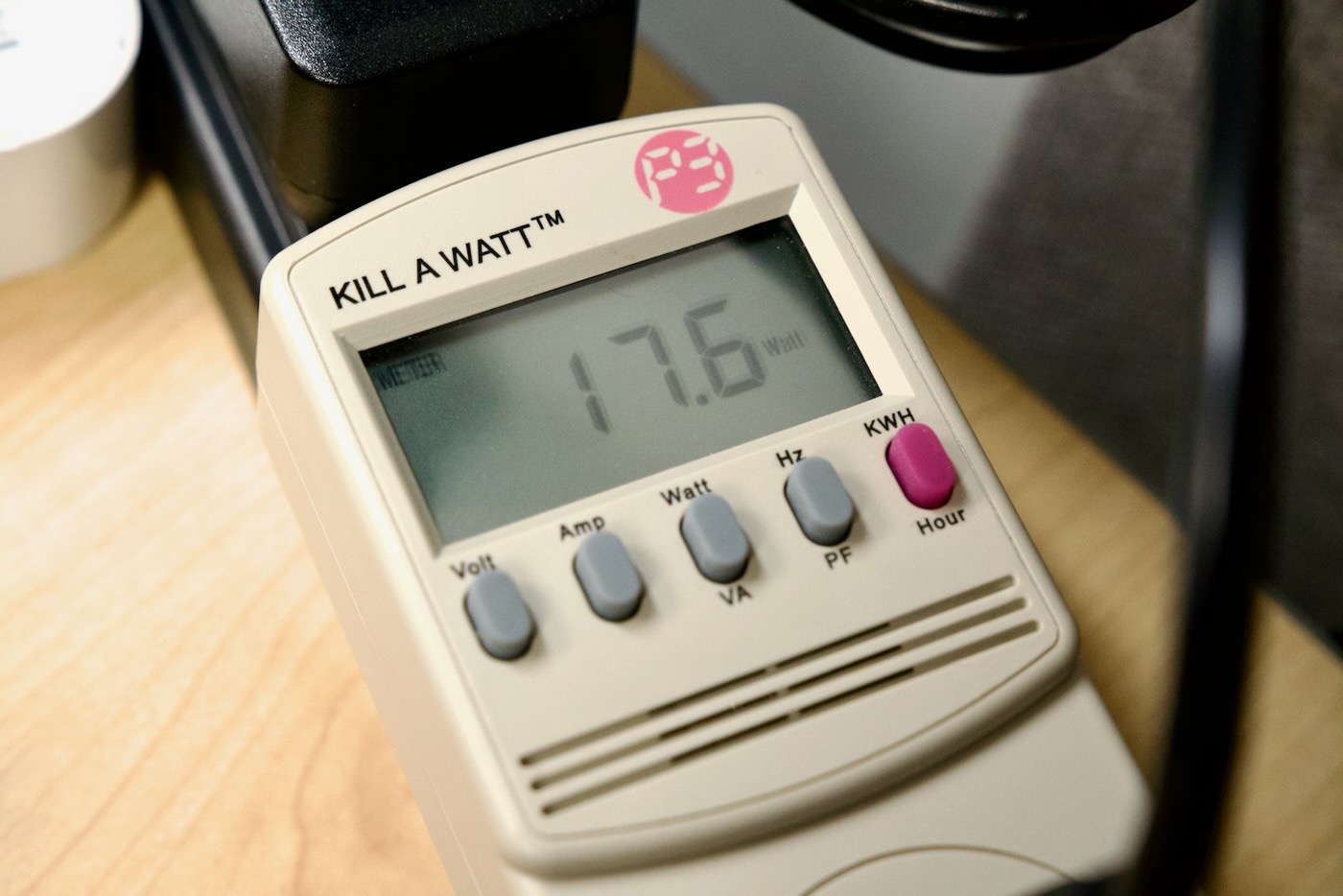

As I'm wheels up for the UK right now (I'm literally typing this on the plane before takeoff), I didn't have time to explore further—that'll come soon (along with more detail of the 6 vs 12 Pro units!). But I did take a quick power usage reading with no NVMe drives installed and 4GB of RAM:

That was while connected to my 10 Gbps network, so power usage on the Flashstor 6 may be a little lower. I will be putting these through their paces soon, and I see Serve The Home has already gotten some preliminary test results. What else would you like to see?

Comments

Nice! You asked what we'd like to see... I'd like to see if you can run different operating systems on it. TrueNAS or something? Proxmox maybe? Hope you're doing well!

So far it seems like that is not an option, but I have been talking to ASUSTOR's rep about this. It would greatly extend the lifetime of their hardware if they could unlock it for other OSes, either during its supported lifespan or at the end (they seem to support it for a long while though).

It would be neat to be able to run your own OS on it if you wanted something like ZFS support and you knew what you were doing.

I got one of the 12-bay units. F2 to poke at the BIOS settings and boot priorities does work as expected. I didn't see anything particularly interesting in the settings. After changing the boot priorities, I tried booting the latest Arch installer and it loaded fine, but lsblk was only recognizing a couple of the 12 drives. I took a backup of the 8 GB eMMC as well just to have it. At this point I'm not sure what else to poke at. It's a bit disappointing the drives didn't show up on a stock kernel.

Does that happen to be related to this issue? Unable to boot system, won't mount because of missing partitions because of "nvme nvme1: globally duplicate IDs for nsid 1".

That it does! I submitted a diff to the nvme maintainers so we'll see if it gets merged in. I emailed with some more details last night but I was able to get pretty much everything working after that!

Nice little unit. Would be nice to try TrueNAS but the main limitation would be that the unit does not support ECC RAM. Despite many (uninformed) posts on the web, ZFS does require ECC RAM or else the hashes in the tree can get corrupted (as they are hashed in RAM before writing to the array).

Lol, there is an "uninformed post" from literally an openZFS developer.

Has anyone been able to install Proxmox or TrueNAS SCALE on one of these? I'm interested in the Flashstor 6. I need an off-the-shelf NAS that can run an open source hypervisor or NAS OS, preferably SSD-based. Even with the caveats mentioned above, it makes a good fit for an upcoming project I have.

Did you get the FS6706T to be able to run TrueNAS? From your recent YouTube video it seems so. How is this done?

Yes; see this blog post.

Hi. Will it work with a VMware ESXi host?

Yes, it works as a LUN.

If I were to get the 12-drive version, would I be running into a scenario where all the NVMe SSDs would need to be replaced simultaneously due to the TRIM limitations for an all-SSD RAID 5? My config would be 12 of the Crucial P3 4TB or Teamgroup M34 4TB drives.

Wouldn't RAID 10 be better here?

Would a person be able to load UNRAID on one of these?

If you truly have the ear of ASUSTOR, ask them to consider making something similar, but as Direct-Attached Storage (DAS). There are shockingly few compact options for more than 4x NVMe.

Basically, the options that I've found:

- IODyne Pro Data (12x NVMe)

- the new OWC Node Titan (9x NVMe)

(which is just an Akitio Node Titan enclosure with something like the OWC Accelsior SE inside)

- the new OWC Thunderblade X8 (8x NVMe)

and none of these, thus far, are available as empty shells.