Many months ago, when I was first testing different SATA cards on the Raspberry Pi Compute Module 4, I started hearing from GitHub user PastuDan about his experiences testing a few different SATA interface chips on the CM4.

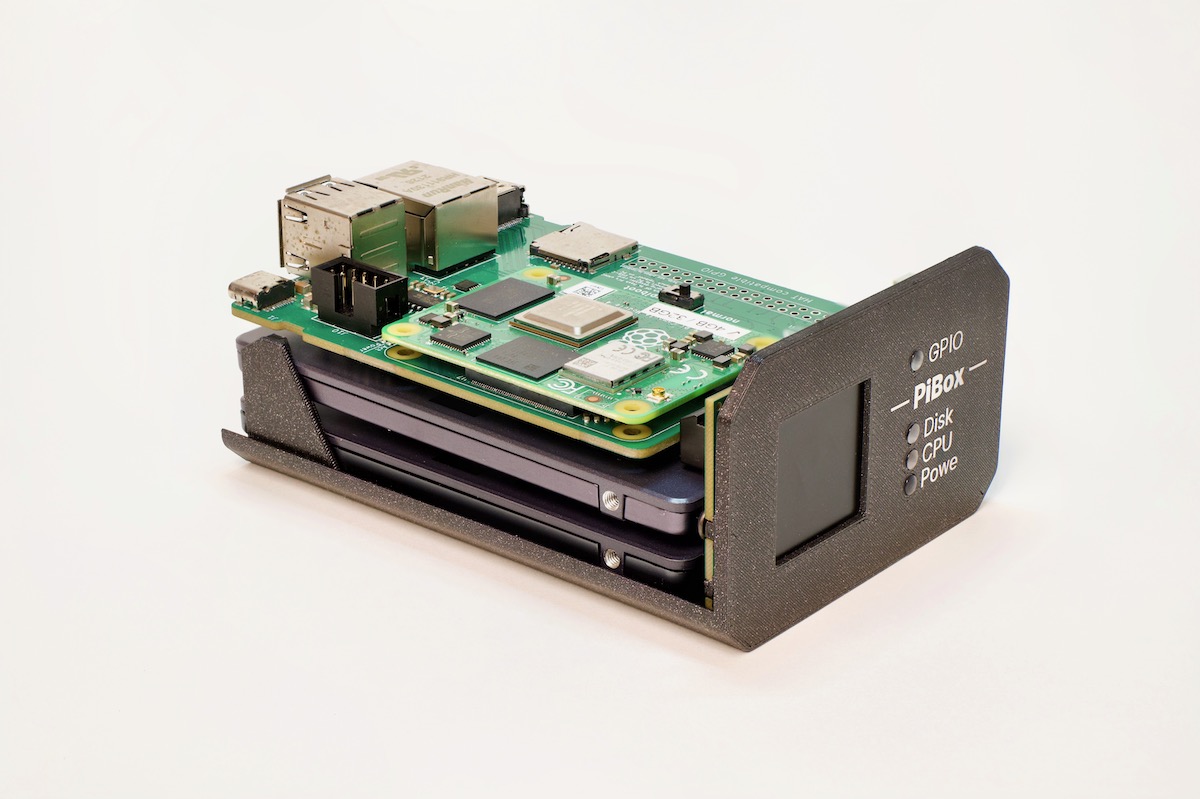

As it turns out, he was working on the design for the PiBox mini 2, a small two-drive NAS unit powered by a Compute Module 4 with 2 native SATA ports (providing data and power), 1 Gbps Ethernet, HDMI, USB 2, and a front-panel LCD for information display.

The Hardware

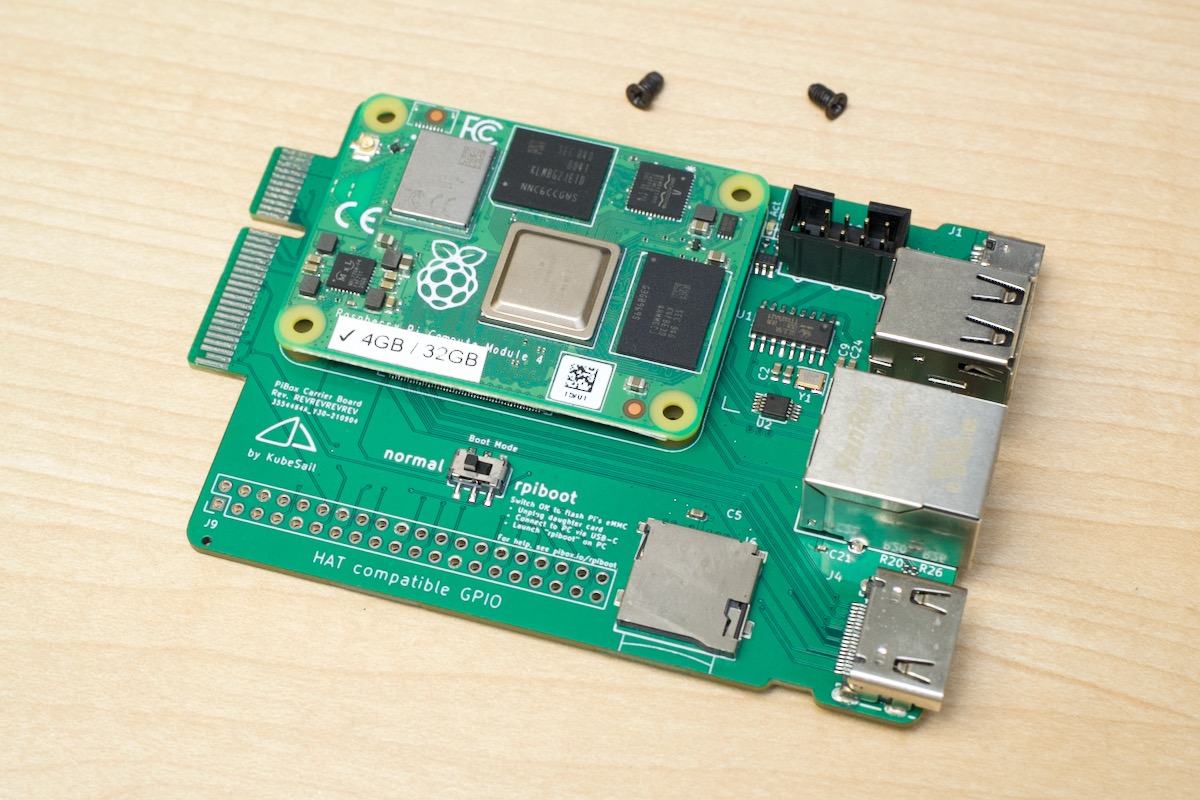

The PiBox mini 2 is powered by the Compute Module 4 on this interesting carrier board:

Notice the edge connector? It's a PCI Express x4 plug, but this board doesn't plug into a PCI Express slot. Instead, it uses that hardware connector to plug into a special backplane, which holds the SATA chip and 2 SATA connectors for hard drives or SSDs, and has a status display connector and activity LEDs.

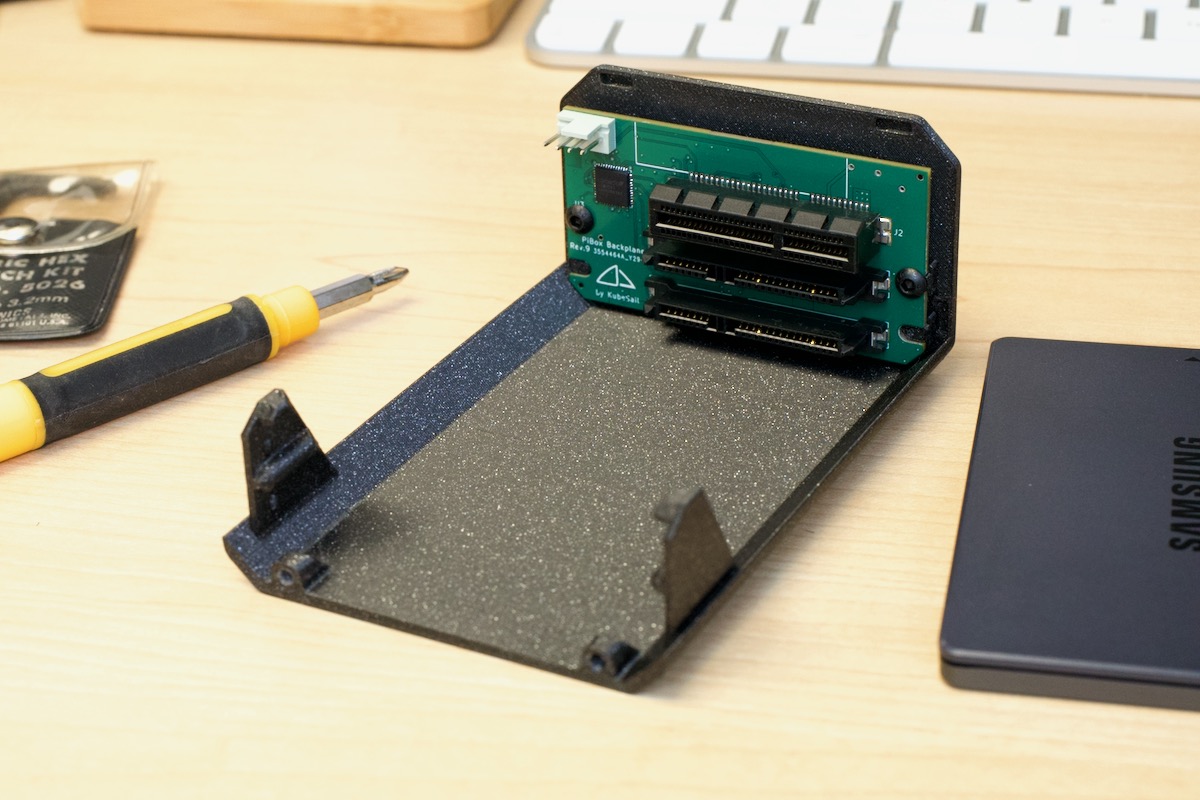

The backplane fits nicely into their 3D case design:

A metal enclosure will be provided at a later time, but the 3D enclosure is already pretty nice—much more compact than anything else I've used with similar specs.

All the IO is on the rear, and it's nothing amazing, but having a full-size HDMI port means this NAS could pull double-duty: it could run media software like Plex and be connected directly to a TV.

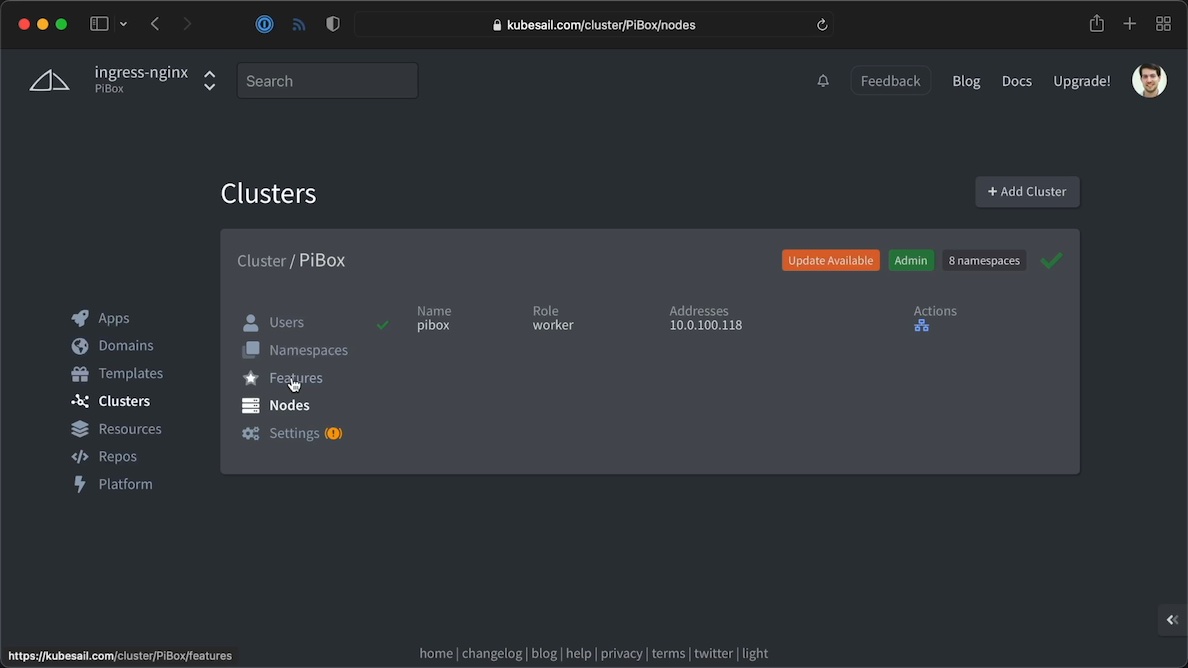

Kubesail and MicroK8s

The box came pre-installed with MicroK8s and the Kubesail Agent, which ties the little Kubernetes endpoint into Kubesail, a semi-managed Kubernetes platform that allows you to bring your own cluster (you don't need PiBox to use it!) and proxies traffic to the cluster to make self-hosting much easier.

I didn't spend a whole lot of time testing Kubesail itself, but I did like the overall approach, and especially liked the documentation and ability to dive straight into my cluster's YAML templates when needed, all through a web UI.

As stated previously, there's no need to run Kubernetes or Kubesail at all—if you just want to run OpenMediaVault or some other OS install, that's easy to do, and Kubesail even publishes a guide for customizing other OSes for the PiBox, so things like the front-panel LCD and PWM fan control still work.

Disk performance

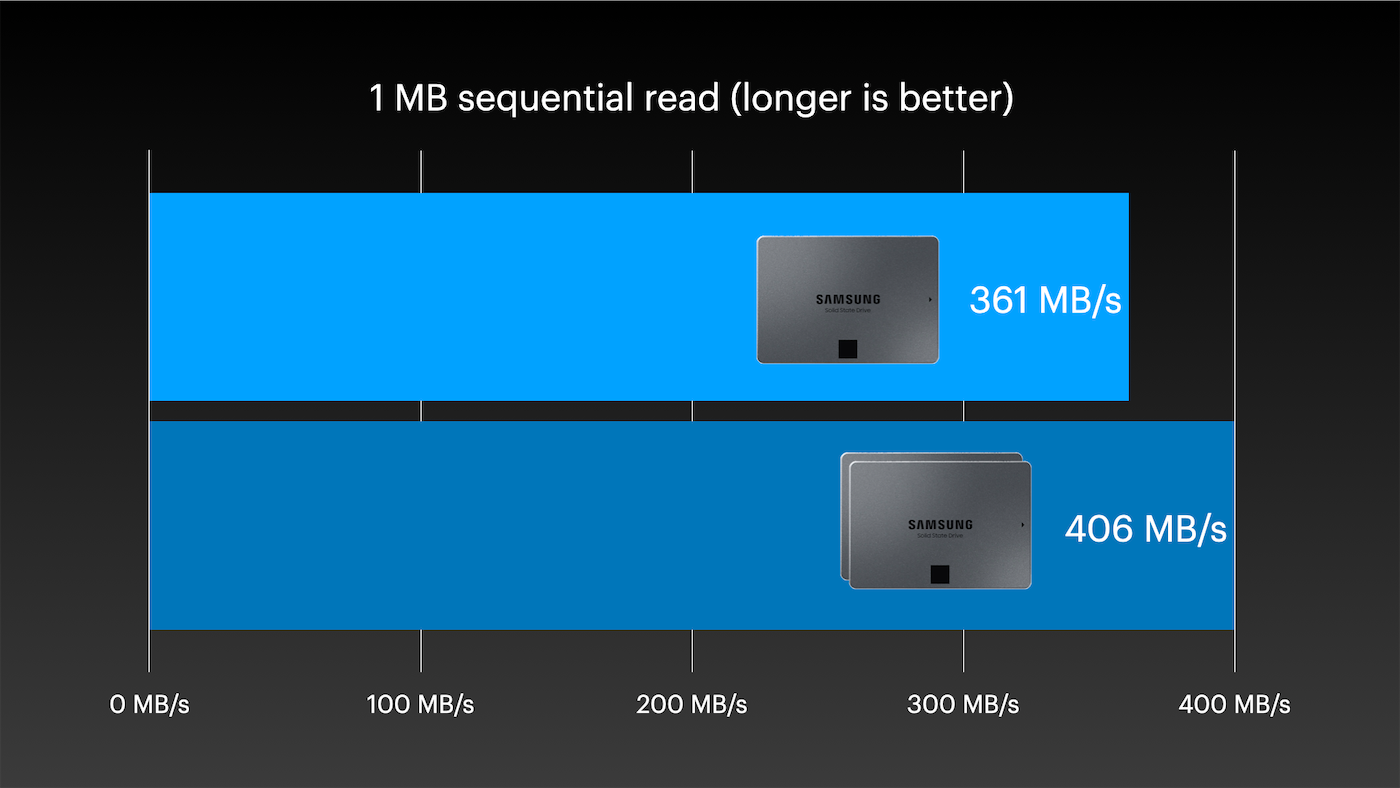

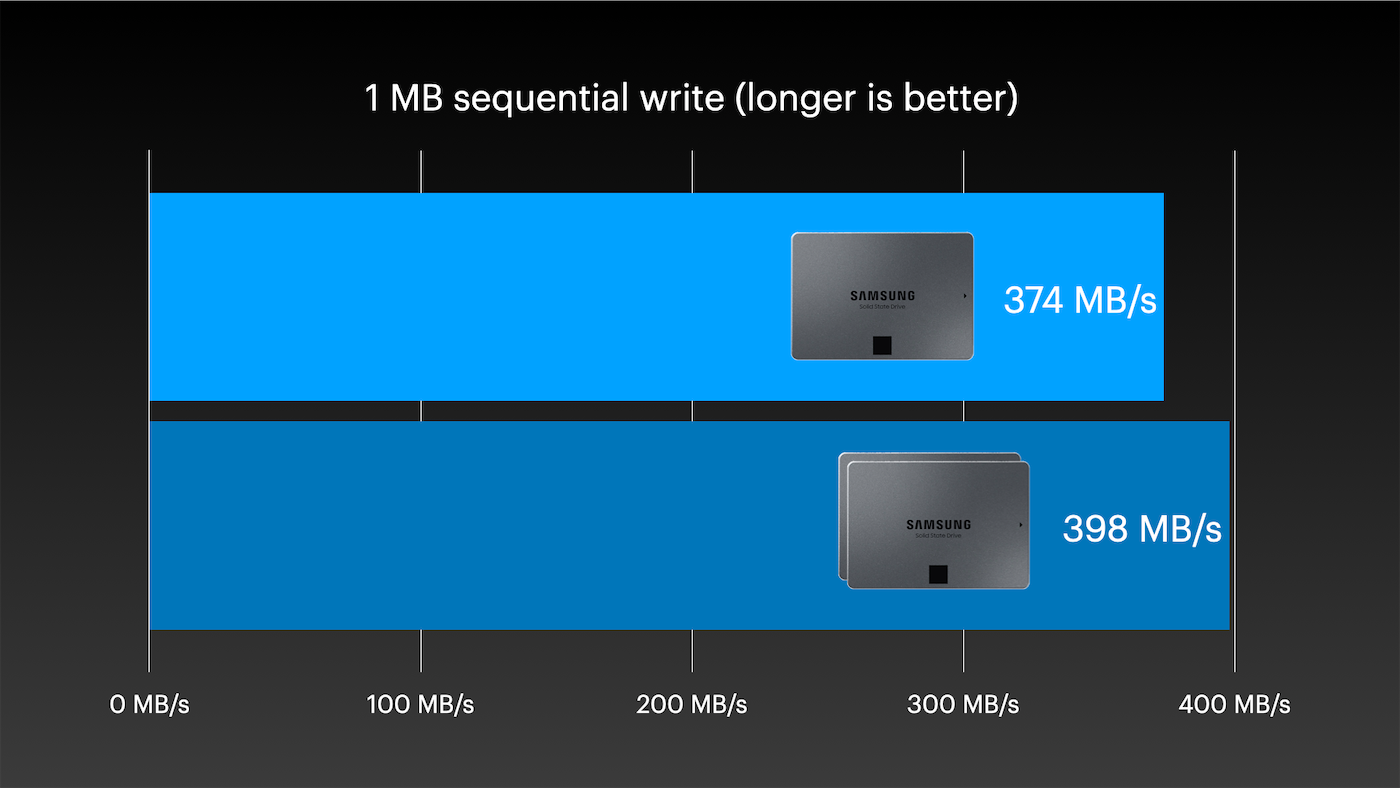

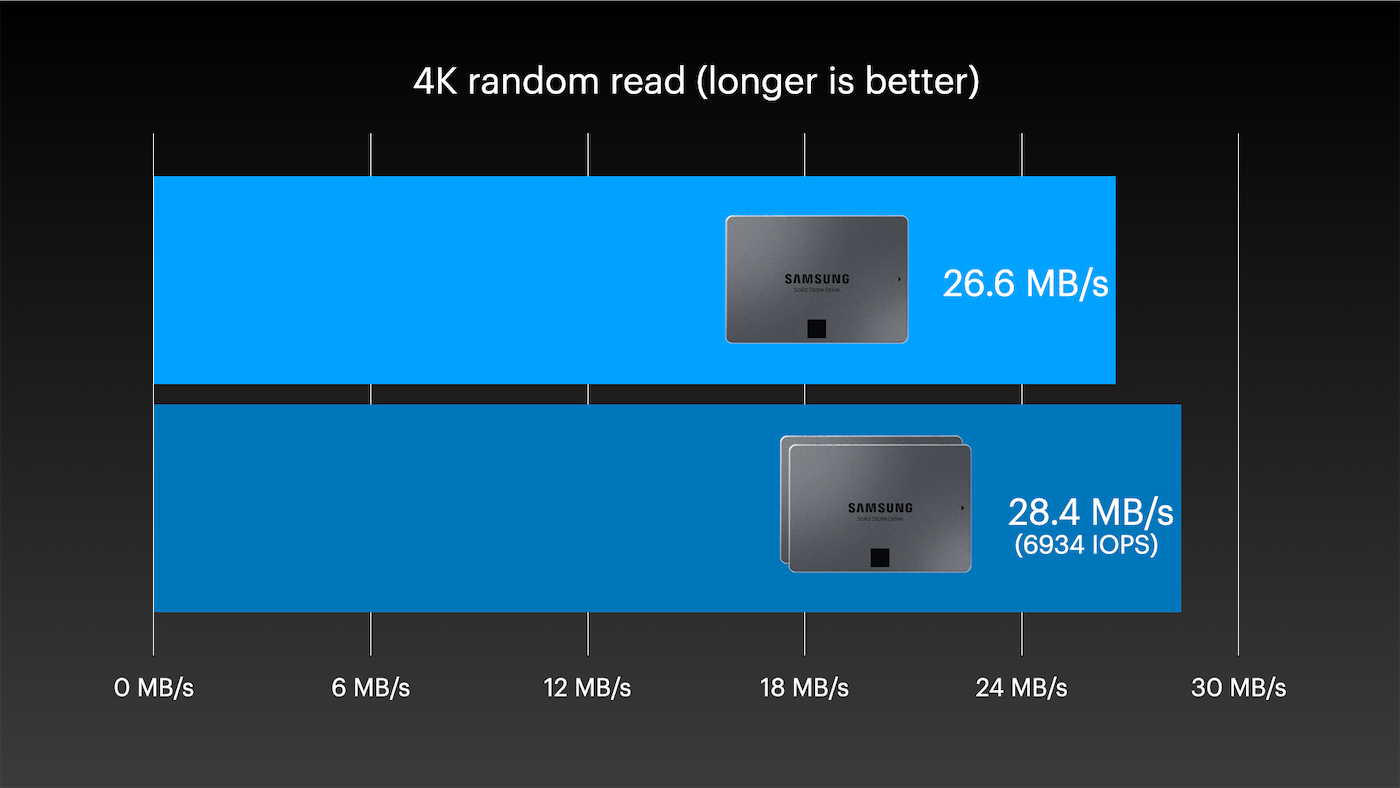

I ran some baseline performance tests, though the ASM1061 SATA-III controller used is similar in performance to the other boards I've tested with the Compute Module 4—meaning the maximum throughput is limited by the ~3.6 Gbps real-world throughput of the Pi's PCIe Gen 2.0 x1 lane.
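To put that ceiling in more familiar units, here is a quick back-of-the-envelope sketch. It assumes the standard PCIe Gen 2.0 figures (5 GT/s per lane with 8b/10b encoding) and takes the ~3.6 Gbps real-world number from above as given. The variable and function names are just for illustration.

```python
# Back-of-the-envelope PCIe Gen 2.0 x1 bandwidth.
GT_PER_SEC = 5.0    # PCIe Gen 2.0 raw signalling rate per lane, in GT/s
ENCODING = 8 / 10   # 8b/10b line encoding: 8 data bits per 10 bits on the wire
LANES = 1           # the Pi exposes a single lane


def gbps_to_mb_per_sec(gbps: float) -> float:
    """Convert gigabits per second to (decimal) megabytes per second."""
    return gbps * 1000 / 8


theoretical_gbps = GT_PER_SEC * ENCODING * LANES
real_world_gbps = 3.6  # the approximate figure quoted in this post

print(f"theoretical: {theoretical_gbps:.1f} Gbps "
      f"= {gbps_to_mb_per_sec(theoretical_gbps):.0f} MB/s")
print(f"real-world:  {real_world_gbps:.1f} Gbps "
      f"= {gbps_to_mb_per_sec(real_world_gbps):.0f} MB/s")
```

In other words, any sequential result in the neighborhood of 450 MB/s is the bus talking, not the SATA controller or the drives.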

Using mdadm (sudo mdadm --create --verbose /dev/md0 --level=1 --raid-devices=2 /dev/sda /dev/sdb), I created a RAID 1 array with two Samsung 8TB 870 QVO SSDs (don't ask how much these things cost...). Then I used fio to test:

1M Sequential Read

fio --name TEST --eta-newline=5s --filename=fio-tempfile.dat --rw=read --size=500m --io_size=10g --blocksize=1024k --ioengine=libaio --fsync=10000 --iodepth=32 --direct=1 --numjobs=1 --runtime=60 --group_reporting

1M Sequential Write

fio --name TEST --eta-newline=5s --filename=fio-tempfile.dat --rw=write --size=500m --io_size=10g --blocksize=1024k --ioengine=libaio --fsync=10000 --iodepth=32 --direct=1 --numjobs=1 --runtime=60 --group_reporting

4K Random Read

fio --name TEST --eta-newline=5s --filename=fio-tempfile.dat --rw=randread --size=500m --io_size=10g --blocksize=4k --ioengine=libaio --fsync=1 --iodepth=1 --direct=1 --numjobs=1 --runtime=60 --group_reporting
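The three commands above differ only in four flags (--rw, --blocksize, --fsync, and --iodepth). If you want to script the runs, something like this minimal sketch rebuilds them from those four values. The fio_command helper and the TESTS table are hypothetical names of my own, not part of fio.

```python
import shlex
from typing import List


def fio_command(rw: str, blocksize: str, fsync: int, iodepth: int) -> List[str]:
    """Build the fio argument list used in this post (hypothetical helper)."""
    return [
        "fio", "--name", "TEST", "--eta-newline=5s",
        "--filename=fio-tempfile.dat",
        f"--rw={rw}", "--size=500m", "--io_size=10g",
        f"--blocksize={blocksize}", "--ioengine=libaio",
        f"--fsync={fsync}", f"--iodepth={iodepth}",
        "--direct=1", "--numjobs=1", "--runtime=60", "--group_reporting",
    ]


# Only these four values change between the three tests.
TESTS = {
    "1M Sequential Read": fio_command("read", "1024k", 10000, 32),
    "1M Sequential Write": fio_command("write", "1024k", 10000, 32),
    "4K Random Read": fio_command("randread", "4k", 1, 1),
}

for label, argv in TESTS.items():
    print(label)
    print(shlex.join(argv))
```

This only prints the commands. To actually run one, you could pass the argument list to subprocess.run(argv, check=True) on a machine with fio installed, from a directory on the array you want to test.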

The numbers aren't ground-breaking, but they're in line with what I've gotten on other Pi storage tests when using native SATA-III drives directly on the Pi's PCIe bus (instead of through USB-to-SATA adapters, as is popular in older Pi NAS products like the Argon Eon).

And for a Pi, 7,000 IOPS is nothing to scoff at. The best I've gotten with microSD cards or eMMC storage is around 2,000 to 3,000 IOPS.
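IOPS can be hard to picture, so here is a small sketch that converts those numbers to throughput. It assumes the IOPS figures come from the 4K random read test, and that fio's 4k means 4096 bytes (its default unit base). The labels and the helper name are mine.

```python
BLOCK_SIZE_BYTES = 4 * 1024  # --blocksize=4k, assuming fio's default 1024-byte k


def iops_to_mib_per_sec(iops: float, block_size_bytes: int = BLOCK_SIZE_BYTES) -> float:
    """Throughput in MiB/s for a given IOPS figure at a fixed block size."""
    return iops * block_size_bytes / (1024 * 1024)


# IOPS figures quoted in this post; the labels are illustrative.
figures = [
    ("SATA SSDs (RAID 1)", 7000),
    ("microSD/eMMC, low end", 2000),
    ("microSD/eMMC, high end", 3000),
]

for label, iops in figures:
    print(f"{label}: {iops} IOPS = {iops_to_mib_per_sec(iops):.1f} MiB/s at 4K")
```

Small random I/O comes nowhere near the bus limit, which is why the IOPS figure (and not MB/s) is the number to compare between storage types here.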

Running things like Kubernetes' storage or log output on SSDs also saves the microSD card or built-in eMMC storage from many small writes, greatly extending its life.

Unfortunately, right now you can't fully boot the Pi off native SATA storage. Hopefully that will change someday!

Teardown and Review

I compiled all the details about my teardown and review of the PiBox mini 2 in this YouTube video:

If you're interested in getting one, they're currently running a Kickstarter for the PiBox mini 2, and Kubesail is planning on making some other versions, too, eventually including a 5-bay 3.5" NAS!

The PiBox mini 2 isn't the only full-featured NAS product I'm testing right now, either—I've just started testing a new NAS build using Radxa's Taco! Make sure you subscribe to my YouTube channel for the latest news.

Comments

Hello Jeff,

thanks for sharing this review.

Before KubeSail released this device, it was offering a self-hosted service related to K8S.

However I didn't fully understand what's the benefit of KubeSail deployment in a K8S cluster.

If it's about deploying software solutions, e.g. Nextcloud, this can be achieved with Rancher, too.

Can you please share your thoughts of KubeSail's use cases?

THX

Kubesail is very much like Rancher in that regard; just another option for managed Kubernetes clusters.

Is there any word that they will offer an option of buying just the little monitor and necessary boards (excluding the CM4) and sell the 3D printable case files for me to print? I have a Prusa Mini+ and have no problem making my own case.

But can you add two dual M.2 adapters like this QNAP Dual M.2 SATA SSD to 2.5” SATA RAID Adapter?

https://www.amazon.com/dp/B07RLKVN9N/?coliid=I588IBNLZV9F5&colid=37YIA4…

Put two 8TB M.2 drives in each of those for 32TB of raw space. That is the big question. Not sure what the best performance would be though: use the onboard hardware RAID with the QNAPs, or use them as JBOD with software RAID. Still just a pipe dream, as it is way too cost prohibitive, with 8TB M.2 drives costing around $1400 apiece. But that would be a nice Jellyfin server.

Waveshare has released a very similar product. Can't post the link because it's forbidden, but you can find by the name of "All-In-One Mini-Computer for Raspberry Pi Compute Module 4" on their website... Do you know if the two products are related?