Raspberry Pi Cluster Episode 6 - Turing Pi Review

A few months ago, in the 'before times', I noticed a post on Hacker News mentioning the Turing Pi, a 'Plug & Play Raspberry Pi Cluster' that sits on your desk.

It caught my attention because I've been running my own old-fashioned 'Raspberry Pi Dramble' cluster since 2015.

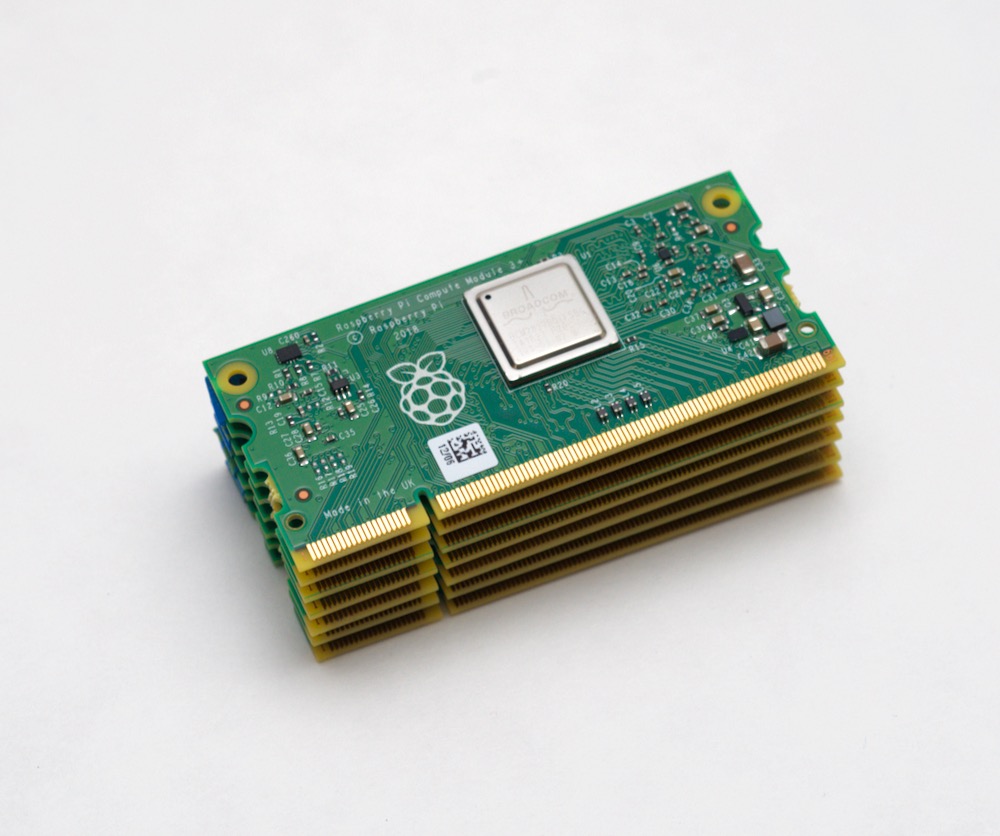

So today, I'm wrapping up my Raspberry Pi Cluster series with my thoughts on the Turing Pi, which I used to build a 7-node Kubernetes cluster.

Video version of this post

This blog post has a companion video embedded below: