Run Ansible Tower or AWX in Kubernetes or OpenShift with the Tower Operator

Note: Please note that the Tower Operator this post references is currently in early alpha status, and has no official support from Red Hat. If you are planning on using Tower for production and have a Red Hat Ansible Automation subscription, you should use one of the official Tower installation methods. Someday the operator may become a supported install method, but it is not right now.

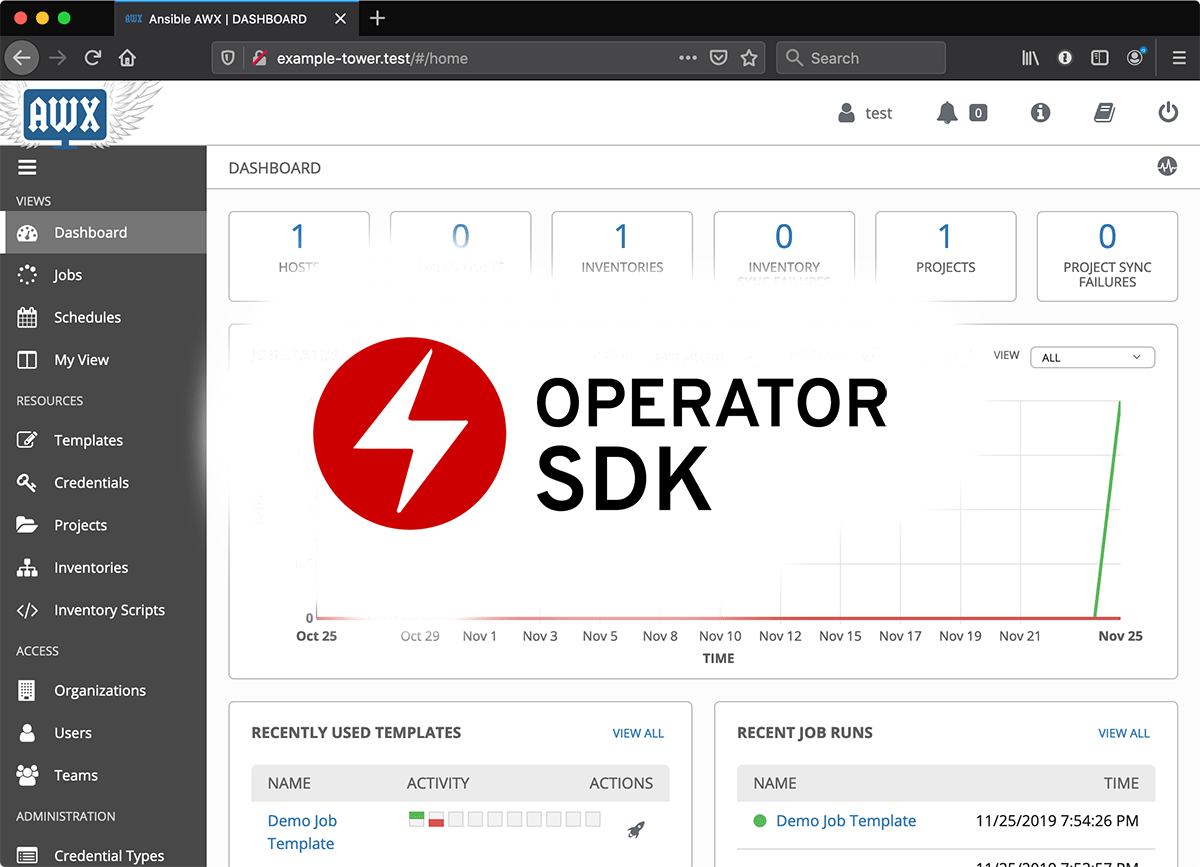

I have been building a variety of Kubernetes Operators using the Operator SDK. Operators make managing applications in Kubernetes (and OpenShift/OCP) clusters very easy, because you can capture the entire application lifecycle in the Operator's logic.