If you haven't heard, the Raspberry Pi 5 was announced today (it'll be available in October).

I have a full video going over the hardware—what's changed, what's new, and what's gone—and I've embedded it below. Scroll beyond to read more about specs, quirks, and some of the things I learned testing a dozen or so PCIe devices with it.

The Video

Specifications / Comparison to Pi 4

| | Raspberry Pi 4 | Raspberry Pi 5 |
|---|---|---|
| CPU | 4x Arm A72 @ 1.8 GHz (28nm) | 4x Arm A76 @ 2.4 GHz (16nm) |
| GPU | VideoCore VI @ 500 MHz | VideoCore VII @ 800 MHz |
| RAM | 1/2/4/8 GB LPDDR4 @ 2133 MHz | 4/8 GB LPDDR4x @ 4167 MHz |
| USB | USB 2.0 (shared), USB 3.0 (shared) | 2x USB 2.0, 2x USB 3.0 |
| PCIe | (Internal use) | PCIe Gen 2.0 x1 |
| Southbridge | N/A | RP1 via 4 PCIe Gen 2.0 lanes |
| Ethernet | 1 Gbps | 1 Gbps (w/ PTP support) |
| Wifi/BT | 802.11ac/BLE 5.0 | 802.11ac/BLE 5.0 |
| GPIO | 40-pin header via BCM2711 | 40-pin header via RP1 Southbridge |
| Fan | 5V via GPIO pins, no PWM | 4-pin fan header, with PWM |
| UART | via GPIO, requires config | via UART header, direct to SoC |
| Price | $35 (1GB) / $45 (2GB) / $55 (4GB) / $75 (8GB) | $60 (4GB) / $80 (8GB) |

Pi 5 Overview

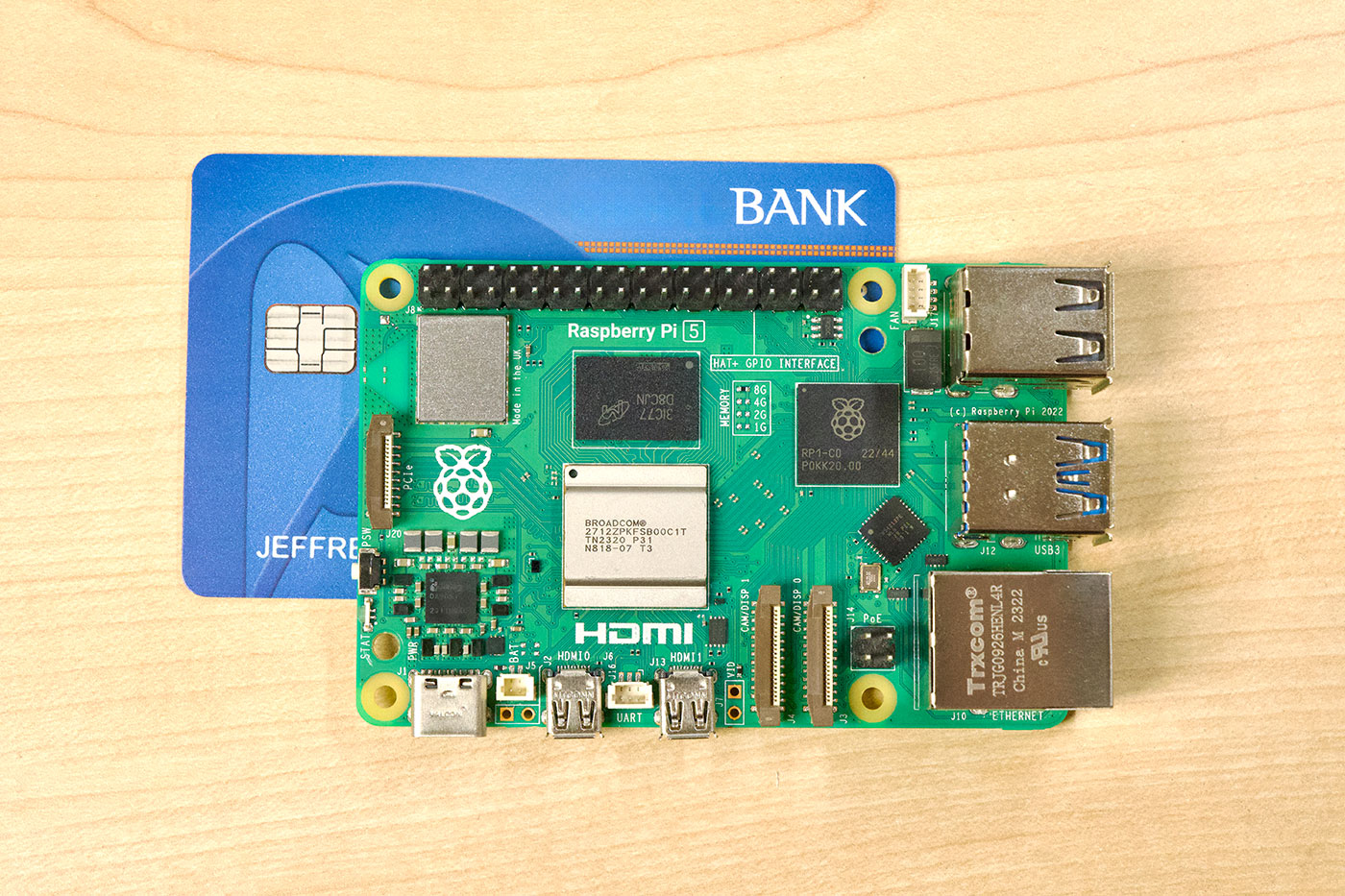

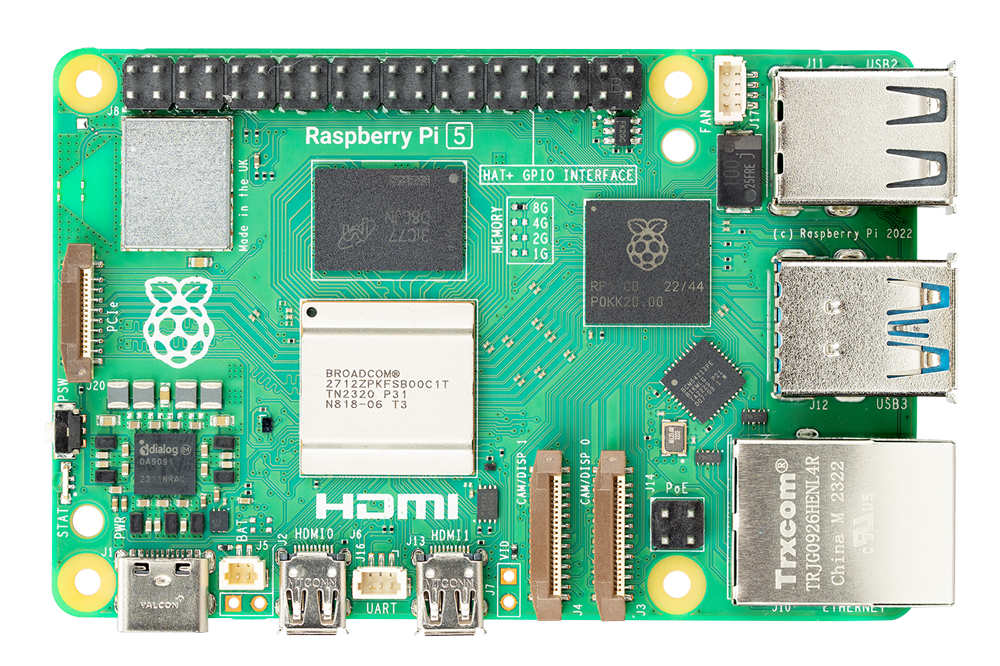

The Raspberry Pi 5 model B preserves the credit-card-sized footprint of the previous generations, but crams a bit more functionality into the tiny space, including an RTC, a power button, a separate UART header, a 4-pin fan connector, a PCI Express FPC connector, two dual-purpose CSI/DSI FPC connectors, and four independent USB buses (one to each of the 2x USB 3.0 ports and 2x USB 2.0 ports).

Some things have moved or been modified:

- The Ethernet jack flipped back over to the same orientation as the Pi 3 B+ and earlier

- The two ACT/STATUS LEDs have become one, in a combined STAT LED that changes color

- The VL805 USB 3.0 controller chip has been replaced by an RP1 'southbridge', which soaks up 4 PCIe lanes from the new BCM2712 SoC and distributes them to all the interfaces

- The A/V jack is gone; in its place is an unpopulated analog video header

- The CSI and DSI ports are gone, replaced by two dual-purpose CAM/DISP ports capable of more bandwidth and flexible use (you can have stereo cameras on the Pi 5 directly now)

- The PMIC chip is upgraded and can handle 25W of USB-C power input at 5V/5A (Raspberry Pi sells a new PSU for this, though the old 3A PSU can still be used)

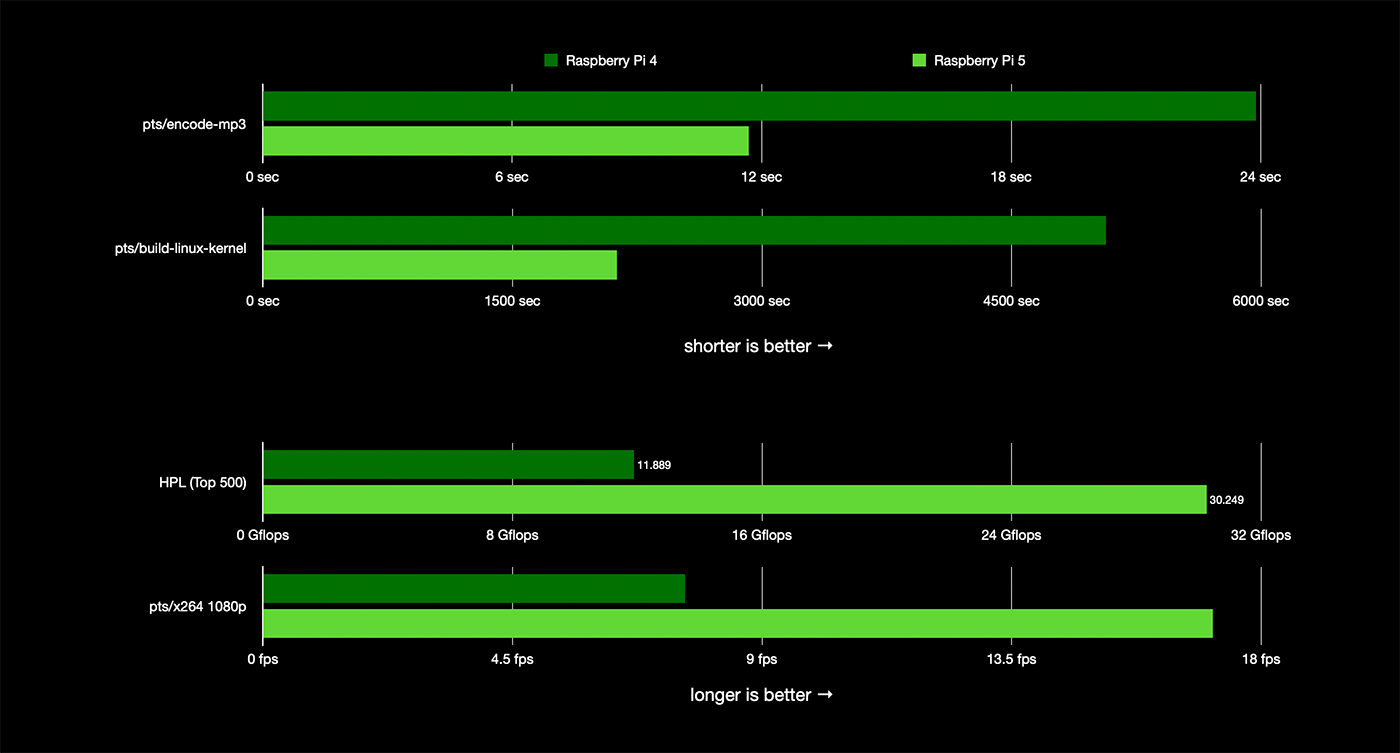

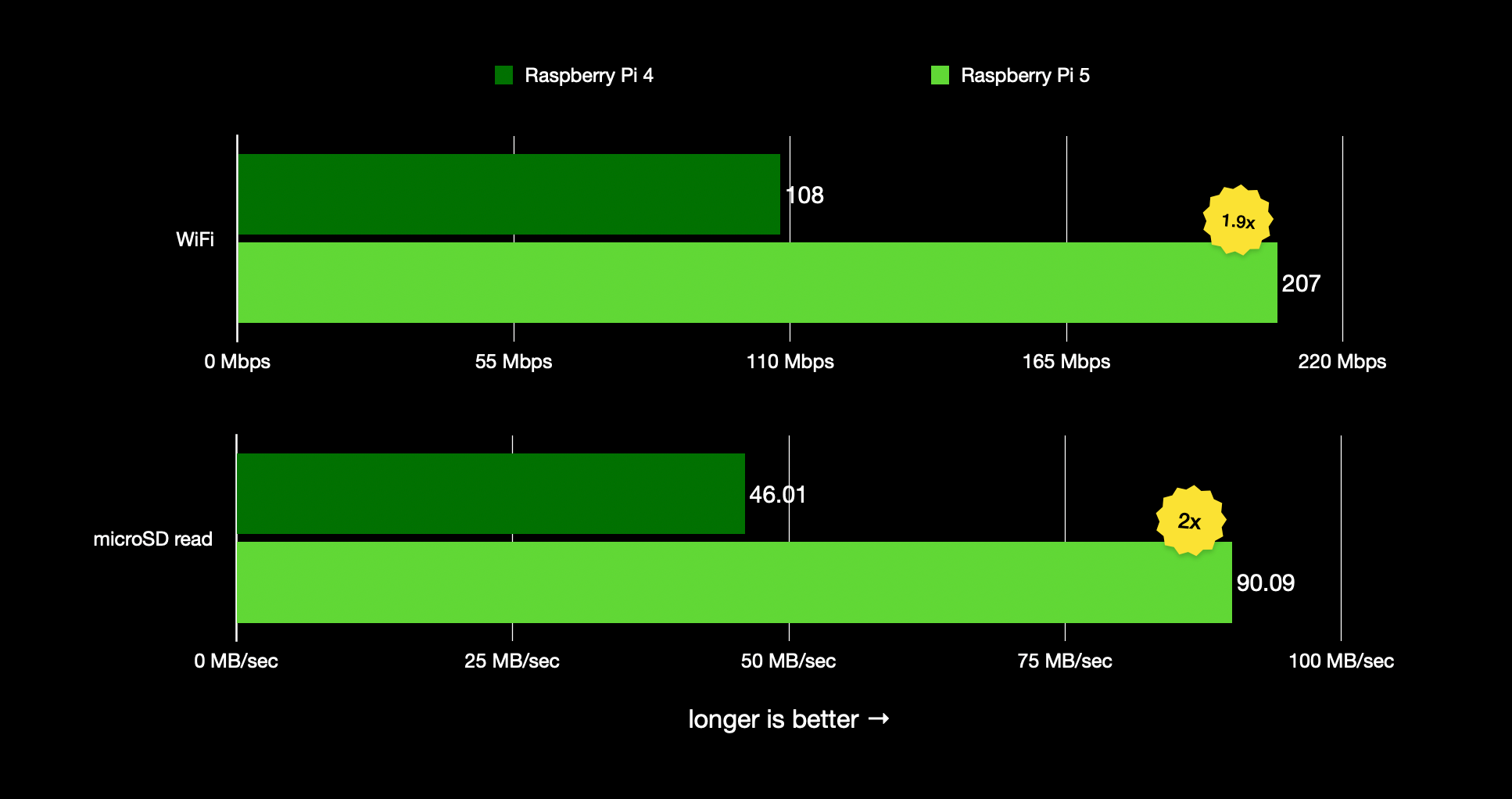

The Pi 5 is 2-3x faster than the Pi 4 in every meaningful way:

- The SoC performs between 1.8-2.4x faster on every CPU and system benchmark I've run

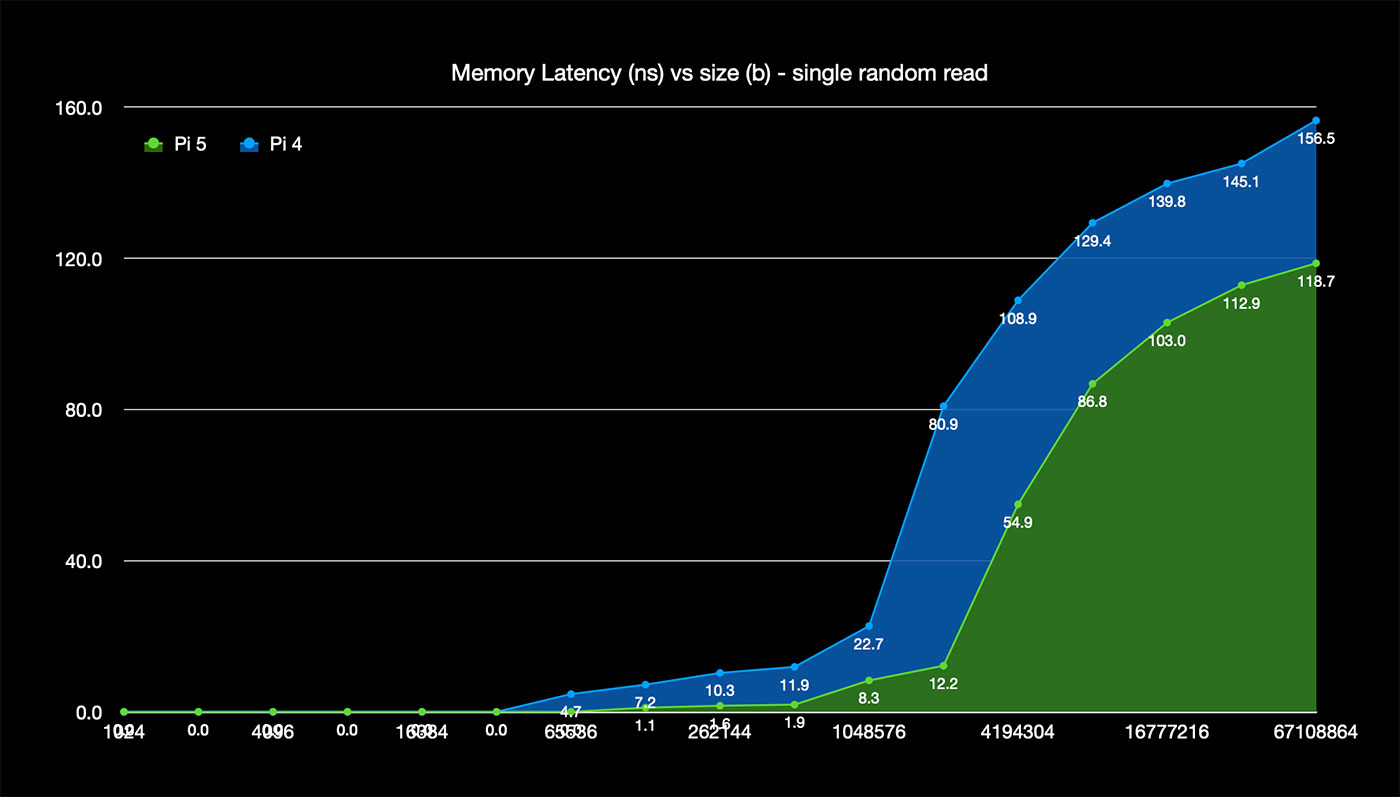

- LPDDR4X memory runs at double the speed of the Pi 4's LPDDR4 (and at a lower voltage), leading to 2-4x faster memory access, with a substantial improvement in latency

- Overall USB bandwidth is double, owing to the four independent USB buses

- HDMI output performance is doubled, and you can run 4K @ 60 Hz without stuttering while performing other activities (the Pi 4 would have issues even at 30 Hz sometimes).

- The microSD card slot is UHS-I (SDR104), doubling the performance from the Pi 4 to 104 MB/sec maximum

- The WiFi performance is also doubled: I got 200 Mbps via 5 GHz 802.11ac versus the 104 Mbps I got on the Pi 4.

Cryptography-related functions are 45x faster, owing to the Arm crypto extensions finally making their way into the Pi 5's SoC.

Pi 5 and Raspberry Pi OS 12 "Bookworm"

The Pi 5 has been built to work best with Pi OS 12 "Bookworm" (based on the Debian release of the same name), and during the alpha testing phase, we did encounter some growing pains—a few of which may make it to production, as they are longstanding issues that also affect other Arm-based systems.

One is the decision to trial 16k page size, which gives slightly improved performance at the expense of long-tail compatibility with old armv7/32-bit binaries. I don't know if Pi OS 12 will ship with 16k pagesize enabled or not, but there are ways to shim compatibility with older software if necessary.

Another is deprecating libraries and utilities like tvservice and wpa_supplicant in favor of more modern alternatives like kmsprint and NetworkManager. I'll be publishing a post on nmcli use on Raspberry Pi OS 12 soon, so make sure you're subscribed to this blog's RSS feed!

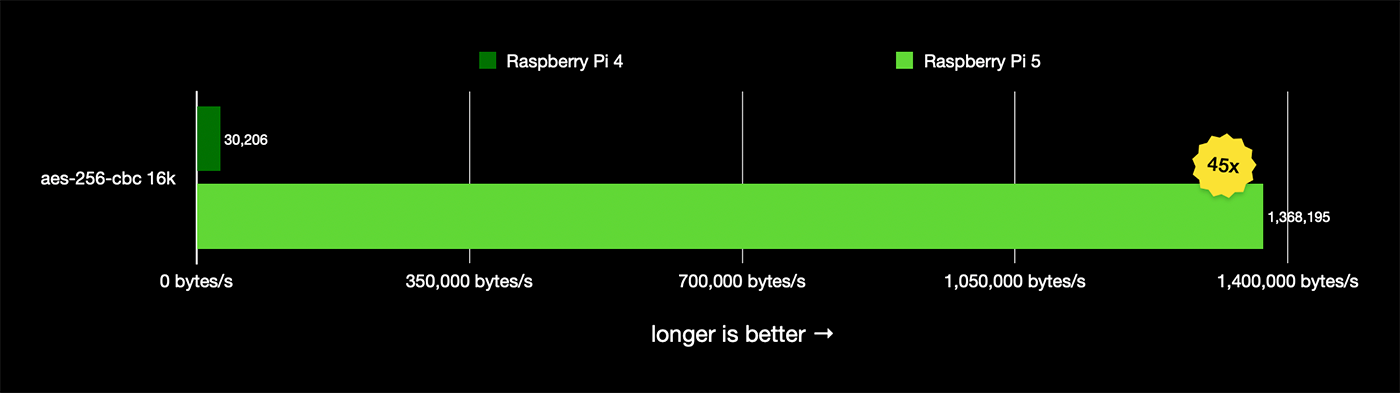

Exploring PCIe on the Pi 5

But now we get to it—my favorite new feature of the Pi 5: PCI Express exposed directly to the end user!

A close inspection of the RP1 specs indicates it uses 4 lanes of PCIe Gen 2.0 from the BCM2712 SoC... meaning the board actually has five lanes of PCIe bandwidth available. But only one lane is exposed to the user via the FPC connector pictured above.

By default, the external PCIe header is not enabled, so to enable it, you can add one of the following options into /boot/firmware/config.txt and reboot:

    # Enable the PCIe External connector.
    dtparam=pciex1
    # This line is an alias for above (you can use either/or to enable the port).
    dtparam=nvme

And the connection is certified for Gen 2.0 speed (5 GT/sec), but you can force it to Gen 3.0 (8 GT/sec) if you add the following line after:

    dtparam=pciex1_gen=3

I did that in much of my testing and never encountered any problems, so I'm guessing that outside of more exotic testing, you can run devices at PCIe Gen 3.0 speeds if you test and they run stable.
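To double-check what a given config.txt actually asks for, here is a minimal Python sketch that reads the file's text and reports whether the external connector is enabled and which link speed is requested. The function name `pcie_settings` is my own invention, and the parser is deliberately simplified: it ignores section filters like `[pi5]` and assumes one `dtparam` per line.

```python
def pcie_settings(config_text):
    """Return (enabled, gen) for the external PCIe connector.

    Simplified sketch: section filters are ignored and only the three
    dtparam names mentioned in this post are recognized.
    """
    enabled = False
    gen = 2  # the connector defaults to Gen 2.0 unless overridden
    for raw in config_text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line.startswith("dtparam="):
            continue
        name, _, value = line[len("dtparam="):].partition("=")
        if name in ("pciex1", "nvme") and value in ("", "on"):
            enabled = True
        elif name == "pciex1_gen" and value.isdigit():
            gen = int(value)
    return enabled, gen


sample = """# Enable the PCIe External connector.
dtparam=pciex1
dtparam=pciex1_gen=3
"""
print(pcie_settings(sample))
```

On a Pi you would pass in the contents of /boot/firmware/config.txt instead of the sample string.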

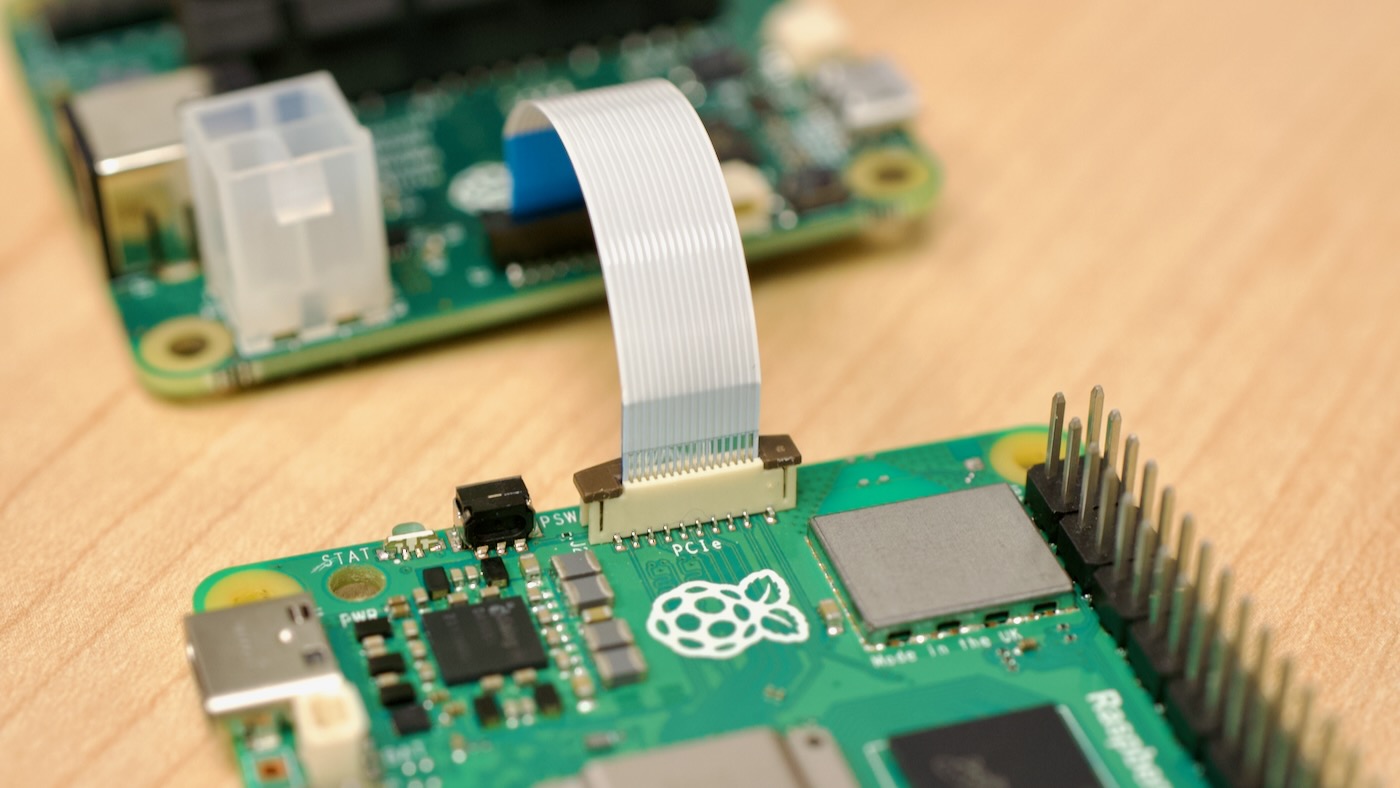

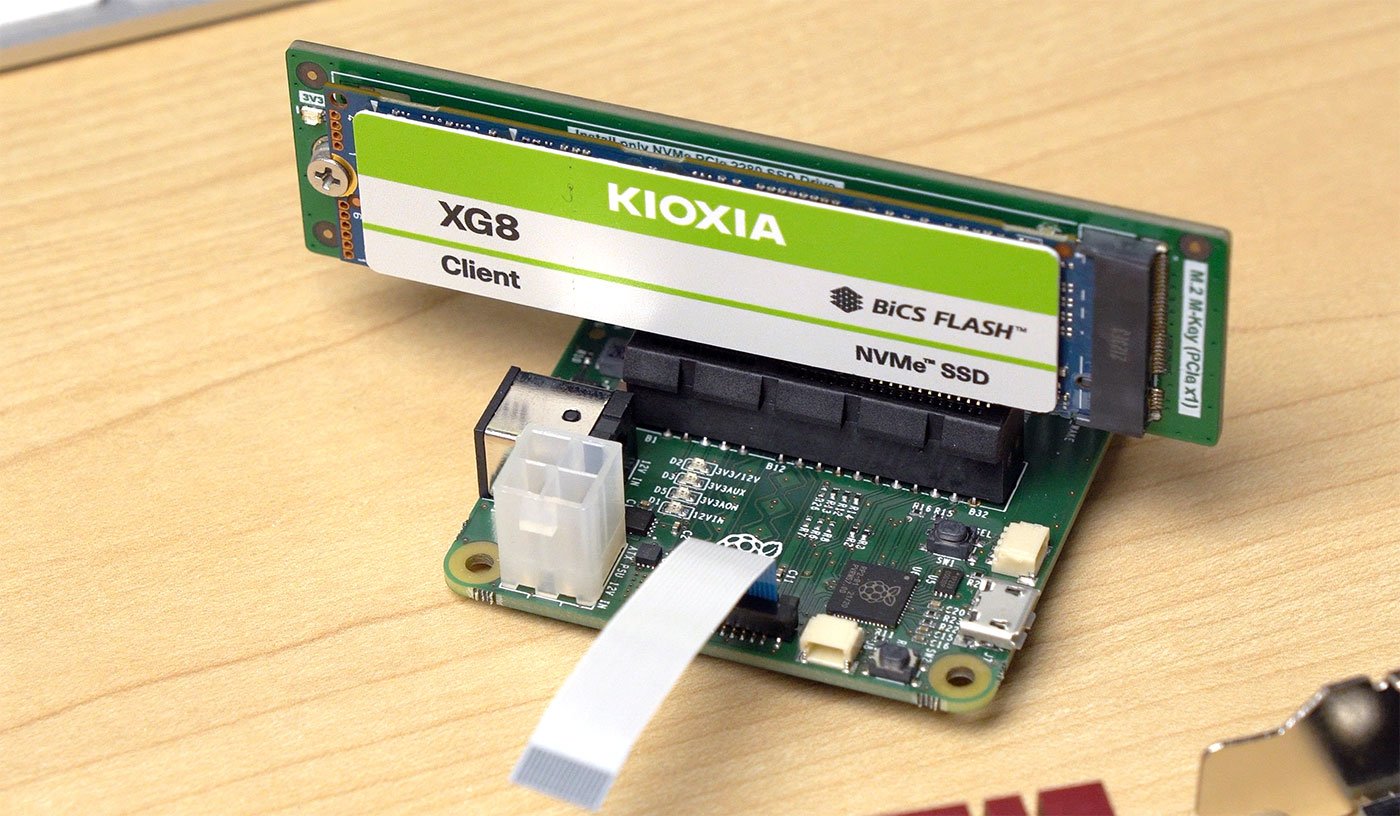

Currently there aren't any PCIe HATs (M.2, card slot, Mini PCIe, etc.), but I was able to borrow a debug adapter from Raspberry Pi for my testing. My hope is there will be new HATs adding on various high speed peripherals soon (just like we saw a massive growth in Compute Module 4 boards once the CM4 exposed PCIe to the masses).

One caveat if you plan on building your own PCIe HAT: the FPC connector only provides up to 5W on its 5V line, so you won't be able to power too many PCIe devices directly off the connector. I used a 12V barrel plug to provide power to the debug board I used.
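As a rough planning aid, the 5W limit works out to 1A on the 5V line. The sketch below just encodes that arithmetic. The device names and peak wattages are hypothetical placeholders, not measurements, so check the datasheet for any real device.

```python
FPC_VOLTS = 5.0  # supply rail on the FPC connector
FPC_WATTS = 5.0  # power limit on that rail, as stated above


def fpc_max_current_amps(volts=FPC_VOLTS, watts=FPC_WATTS):
    """Current ceiling implied by the power limit (I = P / V)."""
    return watts / volts


def needs_external_power(device_peak_watts, budget_watts=FPC_WATTS):
    """True when a device's peak draw exceeds what the connector supplies."""
    return device_peak_watts > budget_watts


# Hypothetical peak draws, for illustration only.
for name, watts in [("small M.2 SSD", 3.5), ("high-end NVMe SSD", 8.0)]:
    if needs_external_power(watts):
        verdict = "needs external power"
    else:
        verdict = "fits the 5 W budget"
    print(f"{name}: {watts} W -> {verdict}")
```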

I'm going to run through the devices I've tested so far—noting I have not had near enough time to do real debugging yet, like I have on the Compute Module 4. Eventually I plan on moving all this data into the Raspberry Pi PCIe Devices website.

NVMe SSDs

Booting from an NVMe SSD instead of the built-in microSD card slot is probably going to be the main use case for PCIe on the Pi 5, at least early on.

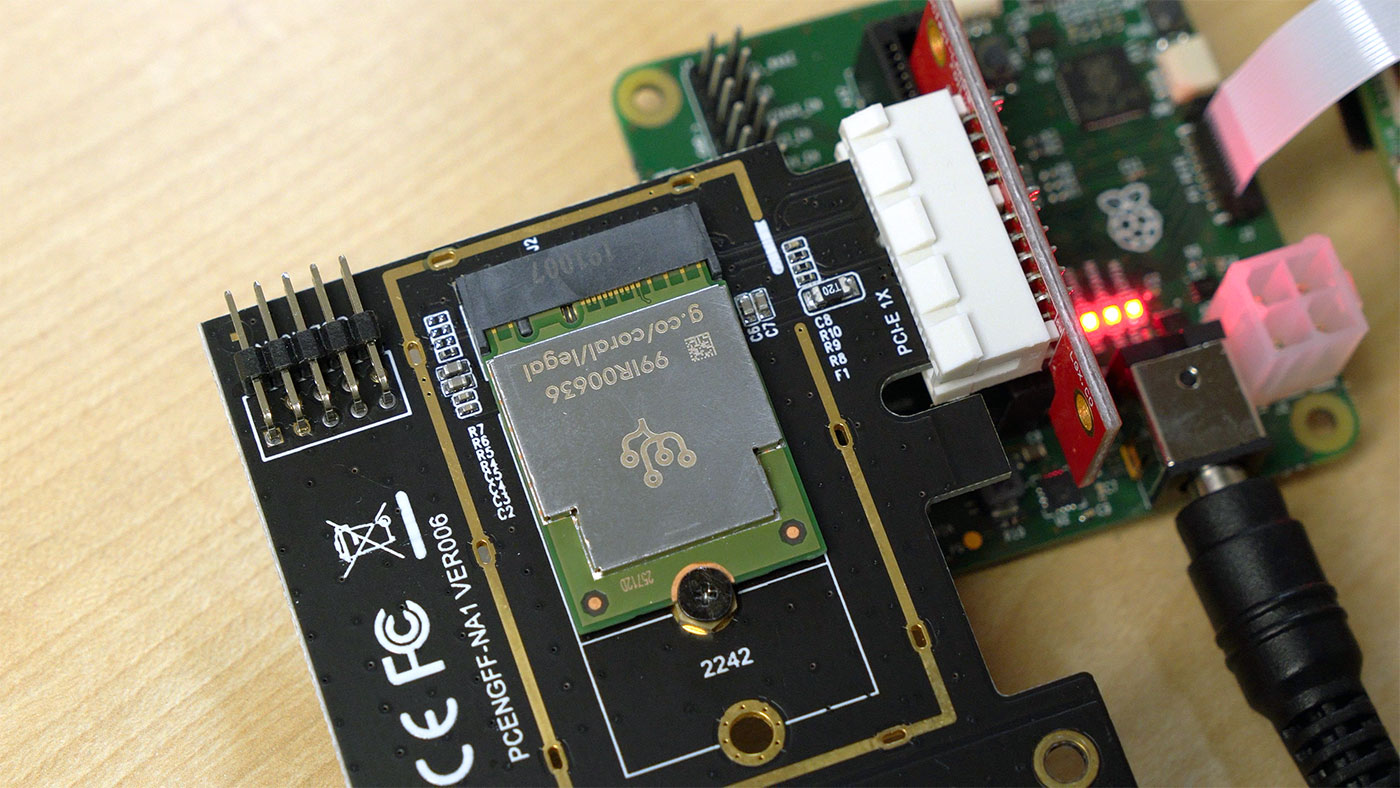

I tried a few different Gen 3 and Gen 4 SSDs, and settled on the above KIOXIA XG8, as it seems to run cool compared to some of my other SSDs.

I was able to get about 450 MB/sec under the default PCIe Gen 2.0 speed, and very nearly 900 MB/sec forcing the unsupported Gen 3.0—almost exactly a 2x speedup.
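Those numbers line up with the theoretical per-lane limits. Gen 1 and Gen 2 links use 8b/10b encoding (10 bits on the wire per 8 bits of payload), while Gen 3 runs at 8 GT/s with the leaner 128b/130b encoding. Here is a small Python sketch of that arithmetic; it ignores packet and protocol overhead, so real-world throughput will always land below these ceilings.

```python
# PCIe generation -> (transfer rate in GT/s, payload bits, bits on the wire)
PCIE_GENERATIONS = {
    1: (2.5, 8, 10),     # 8b/10b encoding
    2: (5.0, 8, 10),     # 8b/10b encoding
    3: (8.0, 128, 130),  # 128b/130b encoding
}


def lane_bandwidth_mb_per_s(gen, lanes=1):
    """Theoretical payload bandwidth in MB/s, before protocol overhead."""
    rate_gt, payload_bits, wire_bits = PCIE_GENERATIONS[gen]
    bits_per_s = rate_gt * 1e9 * payload_bits / wire_bits
    return bits_per_s / 8 / 1e6 * lanes


gen2 = lane_bandwidth_mb_per_s(2)
gen3 = lane_bandwidth_mb_per_s(3)
print(f"Gen 2 x1: {gen2:.0f} MB/s, Gen 3 x1: {gen3:.0f} MB/s")
print(f"Gen 3 / Gen 2: {gen3 / gen2:.2f}x")
```

So 450 MB/sec at Gen 2 and roughly 900 MB/sec at Gen 3 are each around 90% of what a single lane can carry in theory.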

I ran most of my testing on the Pi 5 booting from this drive, and I'll publish a separate blog post on NVMe boot on the Pi 5. It's supported out of the box, though you need to modify the boot order in the EEPROM.
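As I understand it, the bootloader's BOOT_ORDER setting is a hex value read one digit at a time, starting from the rightmost digit, with each digit naming a boot mode. The Python sketch below decodes such a value. The mode table is my recollection of the Raspberry Pi bootloader documentation and the value `0xf416` is only an example, so verify both against the official docs before changing your EEPROM.

```python
# Boot mode codes as I recall them from the bootloader documentation.
# Treat this table as an assumption and check the official reference.
BOOT_MODES = {
    0x1: "SD card",
    0x2: "network",
    0x3: "RPIBOOT",
    0x4: "USB mass storage",
    0x6: "NVMe",
    0xE: "stop",
    0xF: "restart",
}


def decode_boot_order(value):
    """List boot modes in the order they are tried (lowest hex digit first)."""
    modes = []
    while value:
        digit = value & 0xF
        modes.append(BOOT_MODES.get(digit, f"unknown (0x{digit:x})"))
        value >>= 4
    return modes


# Example value that tries NVMe first, then falls back to SD and USB.
print(decode_boot_order(0xF416))
```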

One issue I did run into (which I was still debugging with the Pi engineers at the time of this writing) is that the WiFi brcmfmac interface would not initialize properly while the NVMe SSD was powered and in use (whether booting from NVMe, microSD, or USB). With any other PCIe device I tested, built-in WiFi initialized properly. The current theory is it could be an issue with how the prototype PCIe adapter board I'm using is powered. (Edit: there was a bug in the BOOT_ORDER initialization that has since been fixed!)

I hope to see a first party NVMe HAT—and if not, whoever builds one first is going to get some of my cash immediately. It will be extremely handy to pop an M.2 slot on a Pi 5. Powering the SSD could be interesting, since many NVMe devices consume more than 5W.

Coral TPU / AI Accelerators

Coral TPUs are popular in tandem with Frigate for AI object detection. USB Coral devices have worked with all the Pi models for years, but PCIe versions had problems on the Compute Module 4's slightly-broken PCIe bus.

On the Pi 5, I was able to get the apex kernel module installed following the official Coral install guide, but noticed every once in a while I'd get a PCIe packet error message in the system logs:

    [ 72.418344] apex 0000:01:00.0: Apex performance not throttled due to temperature
    [ 77.534508] apex 0000:01:00.0: Apex performance not throttled due to temperature
    [ 77.534543] pcieport 0000:00:00.0: AER: Corrected error received: 0000:00:00.0
    [ 77.534554] pcieport 0000:00:00.0: PCIe Bus Error: severity=Corrected, type=Data Link Layer, (Transmitter ID)
    [ 77.534557] pcieport 0000:00:00.0: device [14e4:2712] error status/mask=00001000/00002000
    [ 77.534560] pcieport 0000:00:00.0: [12] Timeout
    [ 82.654615] apex 0000:01:00.0: Apex performance not throttled due to temperature

Aside: I hate it when production code spams status messages to the system logs... I don't think I need to be reminded the TPU isn't overheating every five seconds!
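If you want to see past the noise, a few lines of Python can drop the repeated temperature message and count the corrected PCIe errors that remain. This is only a sketch: the patterns match the exact messages shown in this post, and `summarize_kernel_log` is a made-up helper name, not part of any tool.

```python
import re

# Patterns taken from the log lines shown in this post.
SPAM = re.compile(r"Apex performance not throttled due to temperature")
AER_CORRECTED = re.compile(r"AER: Corrected error received")


def summarize_kernel_log(lines):
    """Return (lines without the Apex spam, count of corrected AER errors)."""
    kept = [line for line in lines if not SPAM.search(line)]
    corrected = sum(1 for line in kept if AER_CORRECTED.search(line))
    return kept, corrected


sample = [
    "[ 72.418344] apex 0000:01:00.0: Apex performance not throttled due to temperature",
    "[ 77.534543] pcieport 0000:00:00.0: AER: Corrected error received: 0000:00:00.0",
    "[ 77.534560] pcieport 0000:00:00.0: [12] Timeout",
]
kept, corrected = summarize_kernel_log(sample)
print(f"{len(kept)} lines kept, {corrected} corrected AER error(s)")
```

You could feed it the lines from a saved `dmesg` dump rather than the sample list.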

After finding pycoral is incompatible with Python 3.11 (the version in Bookworm), I decided to test the Coral TPU in Docker.

After a bit more debugging—and re-seating everything, since signal integrity seemed to be more critical with the Coral—I wound up almost getting it to work:

    [ 372.628183] pcieport 0000:00:00.0: AER: Corrected error received: 0000:00:00.0
    [ 372.628199] pcieport 0000:00:00.0: PCIe Bus Error: severity=Corrected, type=Data Link Layer, (Transmitter ID)
    [ 372.628204] pcieport 0000:00:00.0: device [14e4:2712] error status/mask=00001000/00002000
    [ 372.628209] pcieport 0000:00:00.0: [12] Timeout
    [ 373.268131] apex 0000:01:00.0: RAM did not enable within timeout (12000 ms)
    [ 373.268141] apex 0000:01:00.0: Error in device open cb: -110
    [ 373.268160] apex 0000:01:00.0: Apex performance not throttled due to temperature

Apparently one of the lab Raspberry Pi 5s works with the Coral, but it seems my alpha board and a few other test boards have these link issues. With most PCIe devices, a sporadic dropped packet seems to be handled gracefully. The Coral, however, seems to want to retrain the link every time there's a problem, and it never works end-to-end on mine.

I tried dropping to PCIe Gen 1 speed (2.5 GT/s), with dtparam=pciex1_gen=1 in /boot/firmware/config.txt, but I still hit the error. The leading theory is a better FPC connection and/or adapter board may solve this issue.

I should mention again: I'm using an early prototype PCIe adapter board—it's likely the signaling issues I've run into will be improved with production hardware!

Network Cards

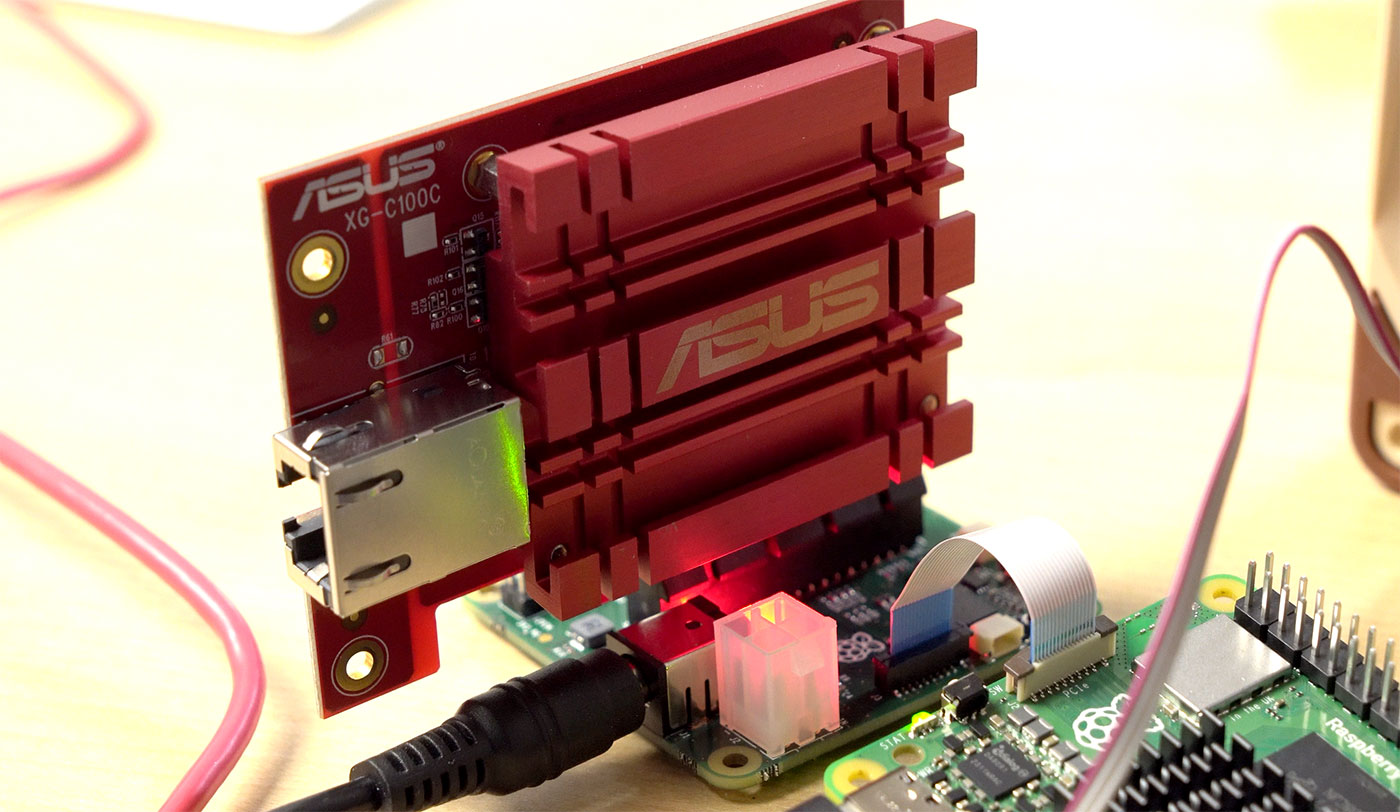

The signaling issues didn't seem to impact this Asus 10G NIC at all, however. To get it running, I recompiled the Linux kernel, adding in the proper Aquantia modules (see my guide).

Once in place, the card was immediately recognized, and at PCIe Gen 3, I was able to get 5.5-6 Gbps. I presume there may be a 10G NIC out there that will squeeze out closer to 10 Gbps through a Gen 3 x1 link, but I haven't found it.

Other NICs (like the trusty Intel I340-T4 I used on the CM4 for 4x Gigabit connections) seemed to work okay too, so I didn't spend much time on networking (yet!).

I think the Pi would pair well with a dual-2.5G NIC like this one from Syba. I have one, but have not had a chance to test it. I hope someone creates a 'Router HAT' with two 2.5G interfaces up top, and convinces OPNsense/pfSense to release an arm64-compatible build!

You can add on more interfaces via USB 3.0 as well—though my quick test of a single 2.5G adapter resulted in only 1.5 Gbps of transfer for some reason.

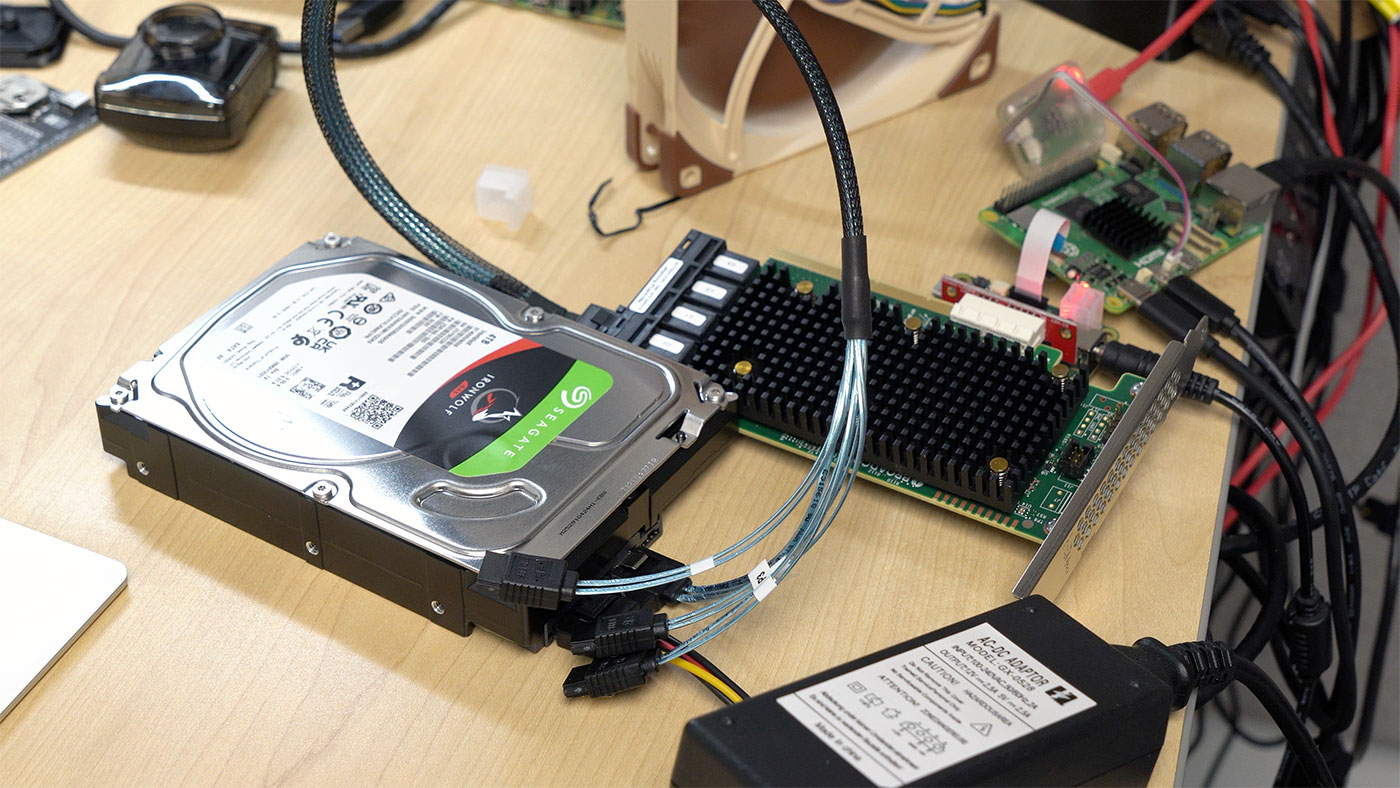

HBA/RAID Storage Cards

I also set up a hardware RAID and HBA card with a single SATA drive, to see if the mpt3sas driver from Broadcom (formerly LSI) would work out of the box (the CM4 required a patch to work around this bug). I tested one of the 9405W-16i cards I used in the Petabyte Pi project, and it seemed to be recognized in the kernel... but for some reason storcli didn't identify it.

I didn't have time yet for full debugging, though, and the same thing happened with the 9460-16i, so I will take up the gauntlet again soon. I'd love to try building another DIY NAS on the Pi 5 to see if I can resoundingly break the gigabit-write-over-the-network barrier (Samba held back speeds on the BCM2711!).

Graphics Cards

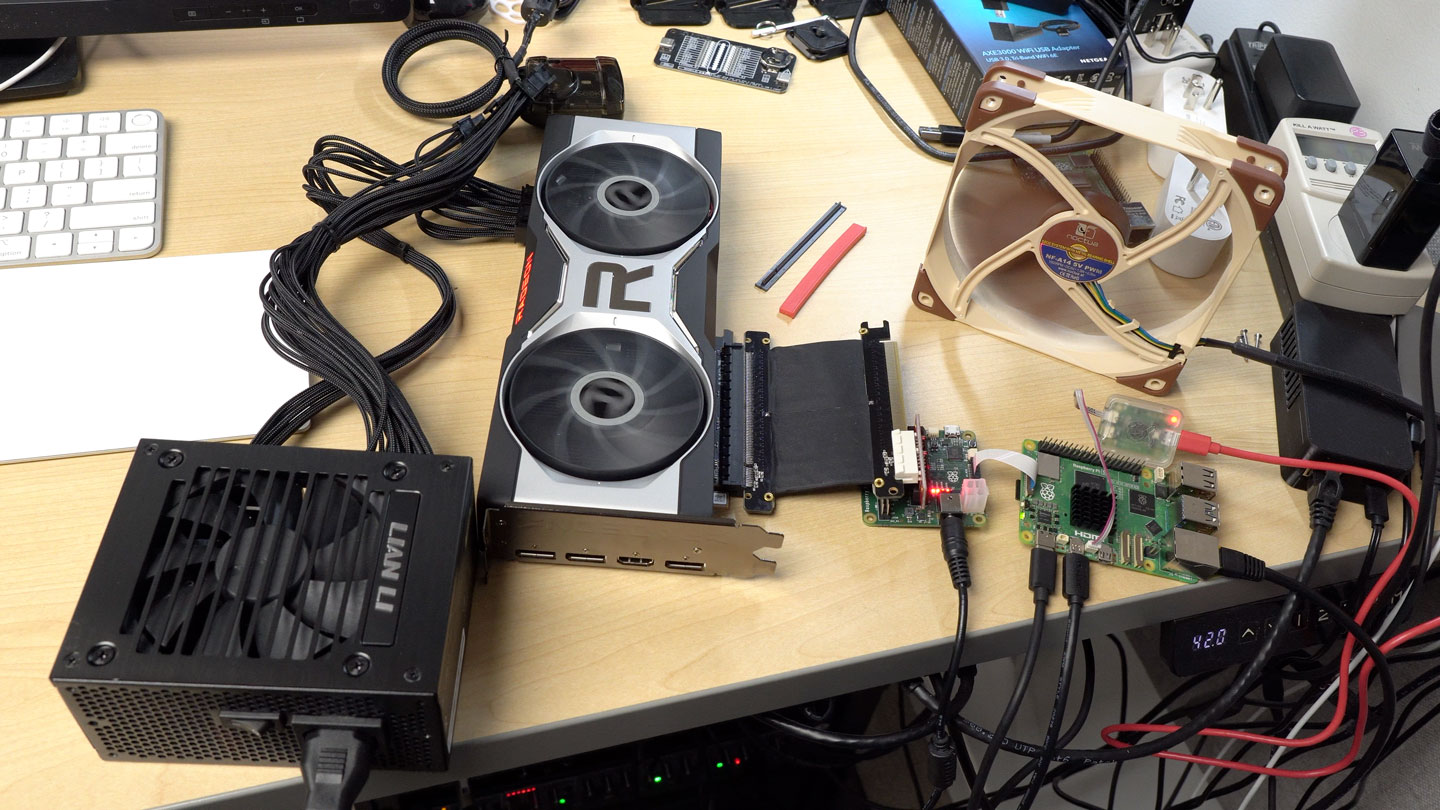

Anyone who's followed my Pi journey knows the elusive problems I've had getting graphics cards working on the Pi CM4. After a grueling amount of work testing various cards—and mostly relying on other people's help—we got partial success with a few older cards, for some very basic rendering tasks.

My ADLINK Ampere Altra Max workstation can take a modern Nvidia card, and on Ubuntu at least, the official arm64 drivers load without a hitch and allow GPU-accelerated gaming and compute.

Can the Pi 5? Well, I'm hopeful. On the CM4, many driver issues would result in the Pi completely locking up. Like, pull-power-and-let-it-sit-style lockups.

That is no longer the case, as the nouveau, nvidia, amdgpu, and radeon drivers all seem to identify the graphics cards I've plugged in. Nvidia's driver install succeeds without a hitch, and lspci shows the correct module loading.

But after all that, I'm still bumping into a darned RmInitAdapter failed! error message, which has vexed me before. Oh how a fully-open-source driver for Linux would be helpful!

Oh wait! AMD has that! How do they fare?

Testing an RX 6700 XT with the open source AMDGPU driver included in Linux, I ran into a few errors like:

    [drm:psp_hw_start [amdgpu]] *ERROR* PSP load kdb failed!
    [drm:psp_v11_0_ring_destroy [amdgpu]] *ERROR* Fail to stop psp ring
    [drm:psp_hw_init [amdgpu]] *ERROR* PSP firmware loading failed
    [drm:amdgpu_device_fw_loading [amdgpu]] *ERROR* hw_init of IP block <psp> failed -22
    amdgpu 0000:03:00.0: amdgpu: amdgpu_device_ip_init failed
    amdgpu 0000:03:00.0: amdgpu: amdgpu: finishing device.

But I think we're very close. Some of the issues also seem to be around timing-related errors, which could also be down to signal integrity with my early prototype PCIe adapter. I am definitely in the market for a slightly better FFC (Flexible Flat Cable) that has some form of shielding.

From what I've heard, PCIe HATs and better cables may already be in the works, but I'm sure my Pi PCIe addiction is not priority number one right now—not when there are about 10 other subsystems and the brand new RP1 southbridge chip going into full production!

Conclusion

This blog post only scratches the surface—I spent many more hours testing and debugging things on the Pi 5, and all that work was poured into my video on the Raspberry Pi 5, so go watch that.

If you have any questions I didn't cover, please let me know in the comments!

Comments

It looks like in the Pi 5 announcement blog post, Eben Upton announced there will be an M.2 HAT in early 2024 supporting 2230 and 2242-sized M.2 NVMe SSDs.

Note: I have corrected the memory latency graph—I had initially used a stacked chart by accident. Memory latency is improved, but not quite halved like my chart showed!

oh dear lord my wallet

I really hope there's a way to shoehorn 2280 M2 cards into the official M2 hat, or a 3rd party solution presents itself to make that happen, since 2280 cards are SO popular...

I wonder if thunderbolt support for the USB-C connector is in the pipelines

Thunderbolt should work with an add-in PCIe card but you may not be able to get hot plug or sleep / wake working without platform specific support in GPIO pins

What about PoE?

They are working on a revised PoE+ HAT; it looks like it will be at least a month or two before it's ready. I hope to start testing it once it happens though!

> Aside: I hate it when production code spams status messages to the system logs... I don't think I need to be reminded the TPU isn't overheating every five seconds!

(You probably know that already.) If you read your logs with less as a pager, you can use the &pattern command as a filter. Patterns beginning with an ! hide lines that match the pattern.

thank you @ben!gt for pointing me to this feature in less!

    &pattern
           Display only lines which match the pattern; lines which do
           not match the pattern are not displayed. If pattern is
           empty (if you type & immediately followed by ENTER), any
           filtering is turned off, and all lines are displayed.
           While filtering is in effect, an ampersand is displayed at
           the beginning of the prompt, as a reminder that some lines
           in the file may be hidden. Multiple & commands may be
           entered, in which case only lines which match all of the
           patterns will be displayed.

           Certain characters are special as in the / command:

           ^N or !
                  Display only lines which do NOT match the pattern.
           ^R     Don’t interpret regular expression metacharacters;
                  that is, do a simple textual comparison.

Baffling design choices.

Why does this need a 5V 5A power supply? Why not take advantage of the millions of QuickCharge chargers that can negotiate higher voltages? It would be cool to use an old phone charger that can negotiate 12V 2A. This would be easier for marginal USB-C cables to handle as well. My Pinecil works this way, why can't the new Pi? On that subject, it would also be great to be able to use a Pi with PoE without a $20 HAT. No PWM fan outputs on a board that can apparently pull over 20W when it's cranking away is also a crummy place to pinch pennies.

Great. It's faster. I don't care. What I really wanted was some reliable, relatively fast integrated storage. Just put 16 GB of eMMC on the thing already. Failing that, put a PCIe M.2 slot on the bottom. In 2023 if you want to sell it based on increased performance, shouldn't it have some kind of AI acceleration or image signal processing built in? This second option seems particularly useful in light of the speedy new camera interfaces.

Kudos on the power button and the real-time clock. Only about ten years too late on those items.

There is a PWM fan header.

I see you have PTP support listed for the pi5, which isn't listed on their blog post. On the cm4, PTP is handled on the PHY (BCM54210PE) while on the pi5 the PHY is listed as the BCM54213. Did they move it into the MAC? That might help with the issues getting a timestamp out of the PHY on the cm4, avoiding the slower MII bus.

Do you know if RP1 will include timestamping non-PTP packets? This is the "all" hardware receive filter in the output of "ethtool -T eth0". My use-case is hardware timestamping NTP packets.

See: https://github.com/geerlingguy/sbc-reviews/issues/21, which has the full ethtool output. It is provided through a Cadence GEM inside RP1 on the Pi 5.

excellent, I'm looking forward to testing this to see if it meets my needs

even before reading this.. once I saw pcie I was like.. this is the perfect portable firewall.. wasn't thinking pfsense.. but there are PLENTY of arm base firewall / router distros out there.. some are less friendly than others.. but a fork of something like ddwrt or tomato or even openwrt ..

I read the Pi5 supports 1.6A across USB devices, up from 1.2A on the 4; so this can now power two 4TB SSDs at once without a hub? The Samsung SHIELD 4TB maxes out at 4W during heavy use, and the Crucial X6 is 2.95W, so I am excited to check this out whenever mine ships, perhaps near the end of the year.

The Sandisk 4TB uses nearly all of the power just by itself, but of course, It's now notoriously unreliable so there are other reasons to avoid that.

I would love to see a comparison of external drives and their power usage with the RPI5...

Ooh, thanks for testing all this out!

Does the Pi 5 southbridge fix the VL805's USB bandwidth calculation bug? Can you connect a bunch of USB audio devices (directly or on an MTT hub) without triggering the dreaded "Not enough bandwidth" error?

https://github.com/raspberrypi/linux/issues/3962

Can you test ZFS encryption over PCIE? Is there AES-NI support?

Configuring networking with NetworkManager is really easy. I've been using it professionally in my job for quite a few years. I actually much prefer using nmtui instead of nmcli. You can do 99% of what you need to do with nmtui, and it's far easier and more intuitive. Of course if you want to script stuff or do something exotic, nmcli is the way to do it.

For 10Gbps NIC, Aquantia AQC113C should support PCIe gen3, like below link. But I am guessing it won't hit 10Gbps with gen3 x1. Rumor said that the new model of Asus XG-C100C v2 has the same chip but I haven't tried myself.

https://a.co/d/8ENT9QY

On my machine,

    41:00.0 Ethernet controller: Aquantia Corp. AQC113C NBase-T/IEEE 802.3bz Ethernet Controller [AQtion] (rev 03)
    LnkCap2: Supported Link Speeds: 2.5-8GT/s,

Any HAT to be designed to take advantage of the PCI-e connection for M.2 support will have to physically be made not to obstruct the airflow from the required active fan-cooler. Hence a HAT designed to be placed over where the fan-cooler should be or would obstruct airflow from a fan-cooler installed in a PI case would negate the efficiency of cooling and PI's may then run into thermal throttling when under heavy loads.

It's likely the official HAT would be designed to have a small gap above the active cooler, which only sits a couple mm higher than the height of the GPIO pins. Then airflow shouldn't be a big issue.

The Pi Blog mentions that they'll be doing 2 NVME hats, one of which is "standard", and one of which is L-shaped and designed for use in the Pi Case.

That would result in heat from the SoC directly impinging on the M.2 HAT and NVMe SSD, which are known to run hot. There may then be thermal issues with the NVMe SSD.

pcie gen 3 is not 10GT/s, it's actually 8GT/s. However, gen 1 and gen 2 were using 10 bits to transfer 1 payload byte (8 bits), while gen 3 uses 130 bits to transfer 128 payload bits, so generally the user-attainable speed still went 2x (compared to gen 2)

Anybody know if one can power the Pi-5 via the GPIO connector? I have a motor controller hat on my Pi-4B that takes 12v in to run the motors, but it also feeds 5v down to the 4B to run it and the connected USB devices. Very nice to be able to run the entire system from 12v battery power.

Yes, though pushing more than a few amps through the GPIO pins wouldn't be advisable. So you'd need to run the Pi with current limiting (I think that might happen automatically?), and not use more than 600 mA through the USB ports.

Yay! Thank you for clarifying. As long as that's essentially unchanged from the Pi-4B, that will do. I just need a short header to extend the GPIO pins above the heat sink and fan so that the controller hat can be attached.

I'm using the Pi-4B on my telescope (the motor controller runs the electronic focuser), and am looking for the higher data transfer rates of the Pi-5 to improve the download speed from the primary imaging camera. The camera has its own external power.

I hope I can use my M.2 SATA SSDs. I just bought a bunch for testing with my Pi4s, so it'd be a shame to waste them.

If you go to test a Navi card on it, it wouldn't surprise me if you run into firmware loading failing, like I did on LX2160A.

https://gitlab.freedesktop.org/drm/amd/-/issues/2313

I just can't get excited about something we may not even be able to buy.

Their commitment has been to industry with zero change to that stance. What's to say demand won't exceed their capacity again? Then what? Hobbyists shit out of luck again?

Why would I invest in a platform that is going to treat me that way?

I'm not sure that's fair... It's released to retail only first. Industry gets it later.

They are using a pre-release model to be more fair (somehow) to overseas customers.

Upton was himself critical of the way large manufacturers treated smaller businesses.

Watch the Kevin McAleer interview.

What do you reckon the chances of something like this working on the pi5?

https://thepihut.com/products/pci-e-to-4-channel-sata-3-0-adapter-for-c…

Now that we have PCI-E supported i think there will be more motivation to get GPU supported, It's perfect for mining without the need to run a whole PC.

Hello!

Is there any information available for the PCIe connector type and pinout?

Would be greatly appreciated.

Thank you!

Greetings

Stefan

I'm wondering this exact thing..what specifically do I need to order to get PCIe slot? Jeff says he's using some kind of debug daughterboard thing I believe?

I've got a great idea for this, turning it into a NAS head for an old RAID box I've got laying around not doing anything.

I believe I should be able to connect directly to the RAID controller over PCIe...but finding a driver may be a problem :(

What exactly do I need to order to get that PCIe slot daughterboard thing?

If you have any ARM video card questions, I have been through the wringer on a Solidrun LX2K. Your best bet is to use an AMD GCN5 or older card; I use a WX 2100 & WX 4100 and they work great with minimal effort on the driver side. nVidia support just came earlier this year and is 64-bit only, i.e. no Steam or 32-bit driver support. Newer AMD drivers were put into the mainline kernel earlier this year as well, but support is spotty across vendors and AFAIK still doesn’t work on the LX2K platform, though I've been out of the scene for a min.

My LX2K Guide with some info: https://github.com/Wooty-B/LX2K_Guide

I have been waiting for this tiny board for long. However, seems it is not very "available" for testing. I would like to know if I can install any "hardware RAID card" to the PCI-E connector? So that I can really consider to build a NAS (Please don't suggest use software RAID instead), Thank you.

Great article (and video), just pre-ordered the pi5 for Xmas (my partner always asks what I would like and I often say the latest pi). Clearly I will be opening it on Xmas day and immediately be wanting to see what it can do. I have a pi3b and pi4 and was thinking of running some benchmarks, CPU/ram/graphics/storage (I can look up the numbers but this would be the hardware I own rather than generic). Is there anything else you would suggest looking at day 1 just for interest, side by side comparison wise?

Be sure to check out all the benchmarking and info we have been gathering in https://github.com/geerlingguy/sbc-reviews/issues/21.

hi,

it would be super interesting to test OnnxStream with this setup (RPI 5 with and without NVMe SSD)! I will buy the PI5 and the PCIe adapter as soon as they are available!

Disclaimer: I am the author. Link: https://github.com/vitoplantamura/OnnxStream

Do you know of any USB 3.0 expansion boards that will use that FFC connector so I can expand my Pi 5's USB output?

Not yet; I will be cataloging all the HATs I find out about on https://pipci.jeffgeerling.com/hats.

As someone who recently built a bit of an odd ball mini itx ssd based NAS, I would love to see you (or red shirt Jeff) renew the Pi Nas project!

Dream system would be able to put 2 systems in one mini itx one single board computer handling Nas duty and a windows PC all in one box.

Ooh, that would be an interesting build!

I have connected 2 SSDs to my pi5 over usb3. On boot only 1 of them is powered/detected.

If I remove them and plug them in in sequence both work.

I also use the official 27W power supply.

Any ideas why?

Any chance you could share where you got the pcie adapter you're using in this blog post? I have a small project where the form factor of that adapter (or something similar) would work much better than the hats! And the nvme adapter too, all I find are much bigger ones with the expansion brackets.

Is there any reason to use an sd card with better than UHS 1 capability in the RPi5 ? For example, will a Sandisk Extreme or Extreme Pro (UHS3 @ >160MB/s capability) perform any better in an RPi5 compared to a Sandisk Ultra (UHS1 @ 120MB/s) ?

In the comparison table, the RAM frequencies are 2133 MHz for the rPi4 and 4167 MHz for the rPi5. This is probably a mistake since LPDDR4 and LPDDR4x cannot achieve those frequencies. Those values make sense but for the Data Transfer Rate (DTR) expressed in MT/s. I am no RAM expert, but if I understand correctly, the frequencies should be half the given values.

References:

https://en.wikipedia.org/wiki/LPDDR

https://www.hardwaretimes.com/lpddr4-vs-ddr4-vs-lpddr4x-which-one-is-be… "In addition, LPDDR4X increases the bandwidth from 3200MT/s to 4266MT/s (without OC). This is the result of a faster I/O bus clock (1600MHz to 2134MHz) and a memory array (200-266.7MHz)."

Where can we get the PCIe adapter you are using

Right now it's unavailable—the one I was using was a one-off from Raspberry Pi themselves, used for engineering testing. I hope that one or two companies or makers will have some PCIe breakouts available soon though, maybe this year, or early next year!

Thanks Jeff.

Can wait to do this on my own pi...

*Cant

Hey, Great article,

I wonder if there is some kind of splitter so you can connect 2 M.2 boards through the FFC (from the Pi to the “splitter”, and from that to 2 M.2 boards, top and bottom)?

hi, where did you get that PCIe cable? I bought the Pimoroni NVME hat and the cable is super short, I'd like one like that so I can run it through a slit I made on the official case, :) thanks.

I just ordered a Mini PCIe HAT, a full-sized PCIe HAT, an NVMe HAT, etc. Very excited to get the dual NIC 2.5G Syba card to work! Building a home lab based on a managed PoE switch with 4x 2.5G ports + 1 SFP 10G port + 10GBASE-T RJ45 port ($110)

I plan to make it an RPi 5 cluster base for the dual NIC 2.5g RPi5 , Coral TPU edge unit RPi5 , WiFi 6e / SIM7600 module RPi5, NAS NVME RPi5, etc.

Thanks for your great work Jeff !