A few months ago someone told me about a new Raspberry Pi Compute Module 4 cluster board, the DeskPi Super6c.

You may have heard of another Pi CM4 cluster board, the Turing Pi 2, but that board is not yet shipping. It had a very successful Kickstarter campaign, but production has been delayed due to parts shortages.

The Turing Pi 2 offers some unique features, like Jetson Nano compatibility, remote management, and a fully managed Ethernet switch (capable of VLAN support and link aggregation). But if you just want to slap a bunch of Raspberry Pis inside a tiny form factor, the Super6c is about as trim as you can get, and it's available today!

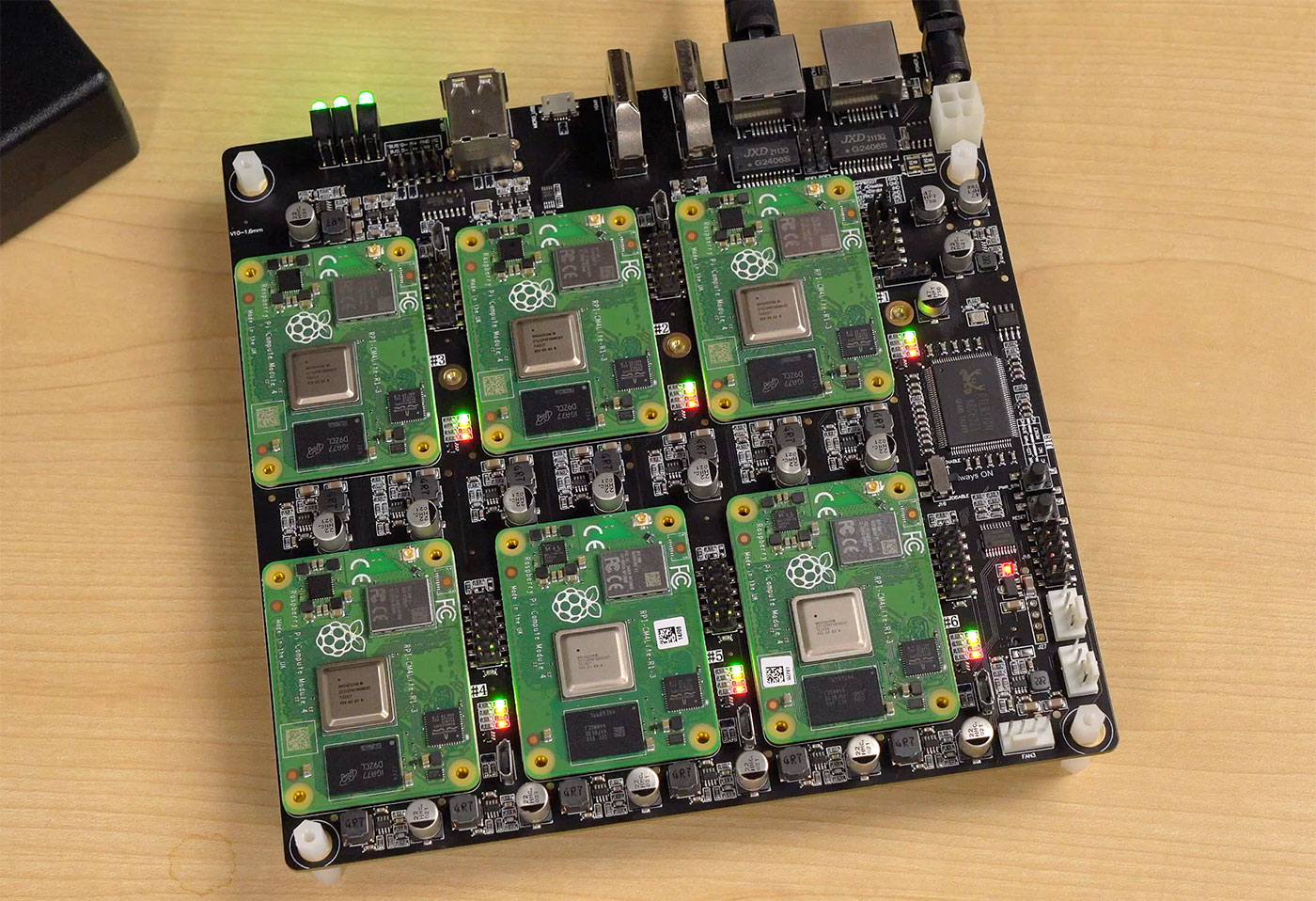

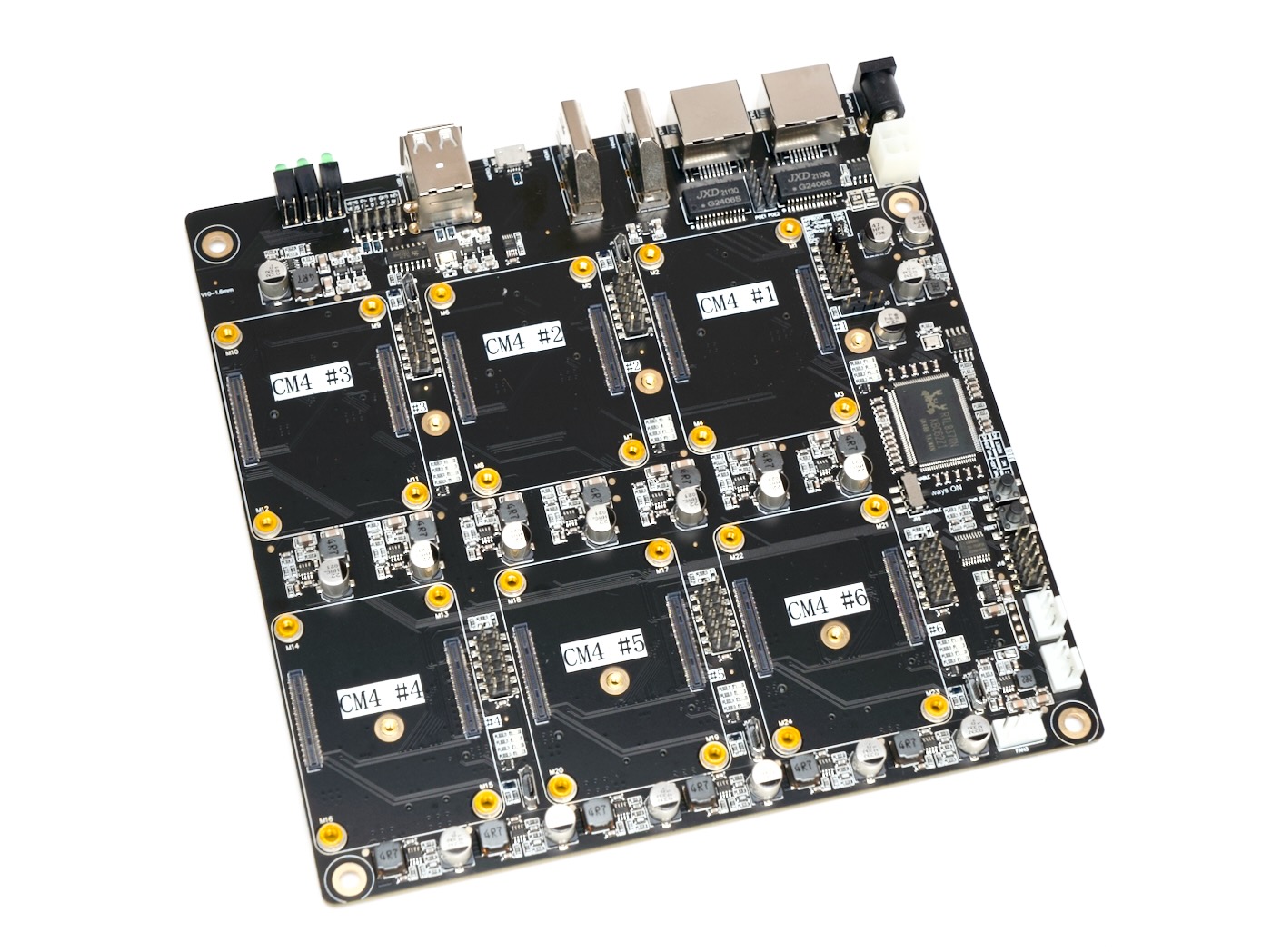

On the top, there are slots for up to six Compute Module 4s, and each slot exposes IO pins (though not the full Pi GPIO), a Micro USB port for flashing eMMC CM4 modules, and some status LEDs.

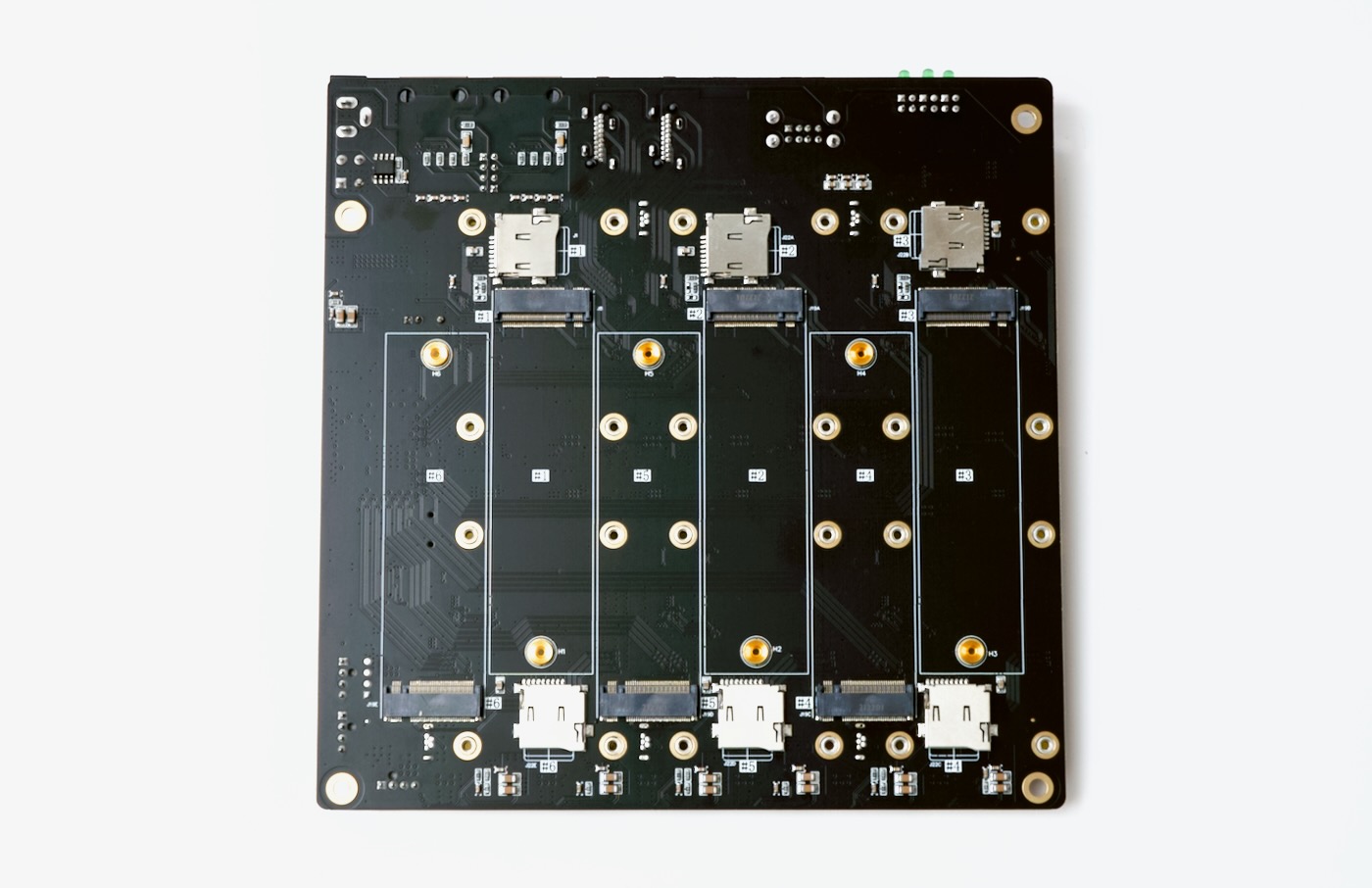

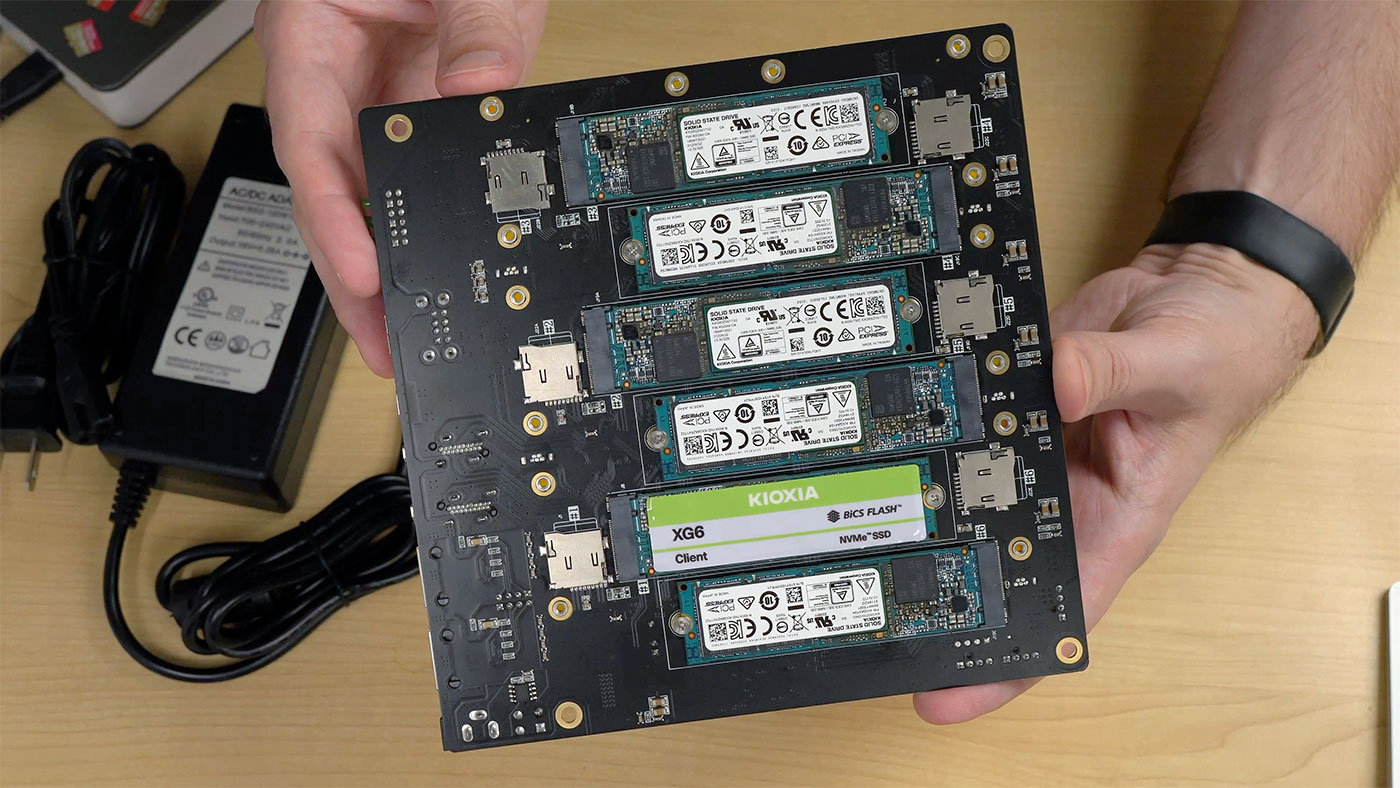

On the bottom, there are six NVMe SSD slots (M.2 2280 M-key), and six microSD card slots, so you can boot Lite CM4 modules (those without built-in eMMC).

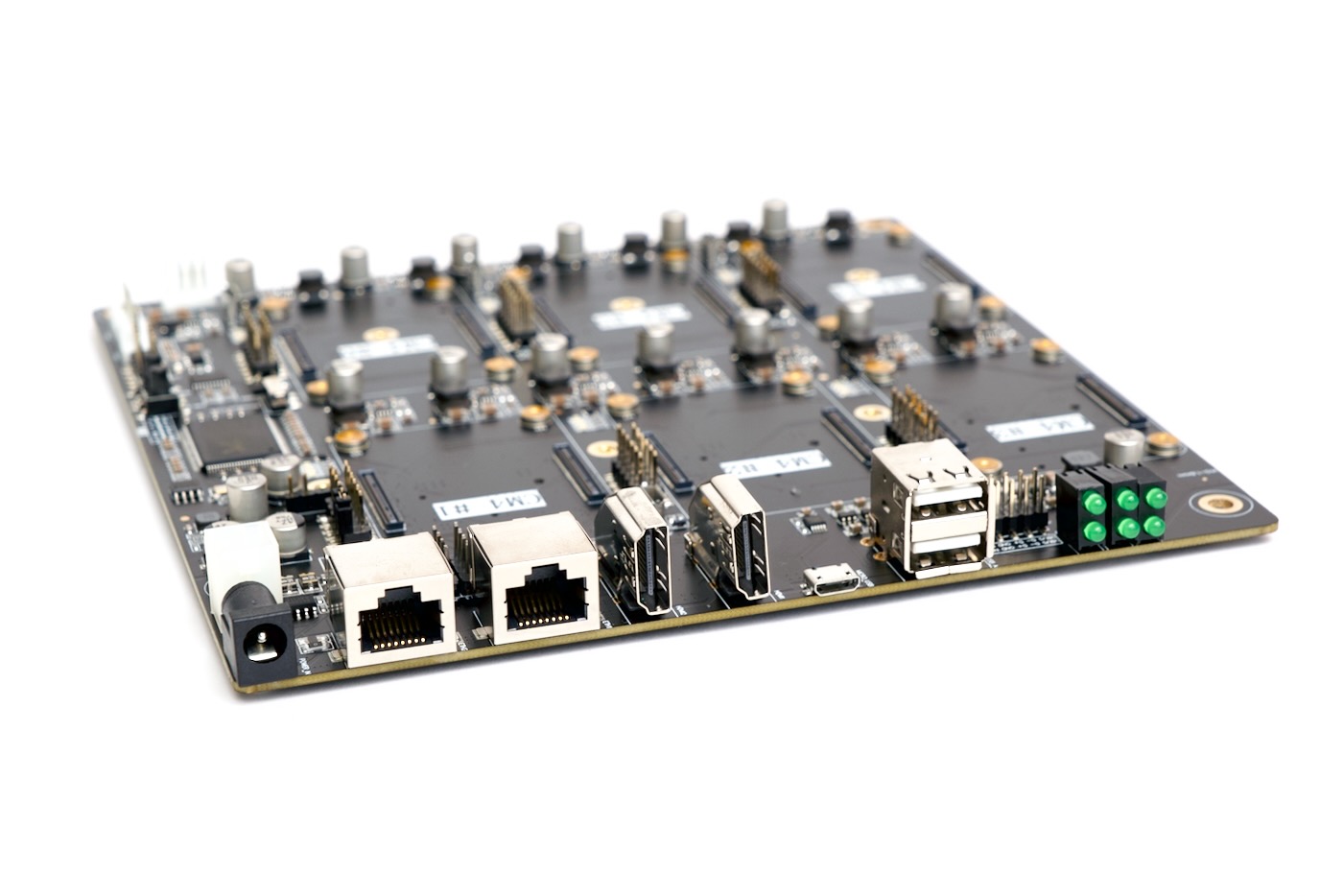

On the IO side, there are a bunch of ports tied to CM4 slot 1: dual HDMI, two USB 2.0 ports (plus an internal USB 2.0 header), and micro USB, so the cluster can be managed entirely on its own. You can plug a keyboard, monitor, and mouse into the first node, and use it to set up everything else if you want. (That was one complaint I had with the Turing Pi 2: there was no option to manage the cluster without using another computer.)

There are also two power inputs: a barrel jack accepting 19-24V DC (the board comes with a 100W power adapter), and a 4-pin ATX 12V power header if you want to use an internal PSU.

Finally, there are six little activity LEDs sticking out the back, one for each Pi. Watching the cluster while Ceph was running made me think of WOPR from the movie WarGames, just on a much smaller scale!

Ceph storage cluster

Since this board exposes so much storage directly on the underside, I decided to install Ceph on it using cephadm following this guide.

Ceph is an open-source storage cluster solution that manages object/block/file-level storage across multiple computers and drives, similar to RAID on one computer—except you can aggregate multiple storage devices across multiple computers.

I had to do a couple extra steps during the install since I decided to run Raspberry Pi OS (a Debian derivative) instead of running Fedora like the guide suggested:

- I had to enable Debian's unstable repo by adding the line `deb http://deb.debian.org/debian unstable main contrib non-free` to my `/etc/apt/sources.list` file and then running `sudo apt update`.
- I added a file at `/etc/apt/preferences.d/99pin-unstable` with the following lines, so packages from stable keep priority over packages from unstable:

Package: *
Pin: release a=stable
Pin-Priority: 900

Package: *
Pin: release a=unstable
Pin-Priority: 10

After that, I could install cephadm with:

sudo apt install -y cephadm
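To make the effect of those two pin priorities concrete, here is a simplified Python model of how apt chooses an archive for a single package. It assumes nothing is installed yet, so the archive with the highest pin priority simply wins; apt's real rules (newest version on ties, priorities of 1000 and above allowing downgrades, special handling below 100 for already-installed packages) are deliberately left out, and the version strings are made up for illustration.

```python
from typing import Dict, Optional

# Pin priorities from /etc/apt/preferences.d/99pin-unstable above.
PIN_PRIORITIES: Dict[str, int] = {"stable": 900, "unstable": 10}
# apt's default priority for versions that are neither pinned nor the target release.
DEFAULT_PRIORITY = 500


def candidate_archive(available: Dict[str, str],
                      priorities: Dict[str, int] = PIN_PRIORITIES) -> Optional[str]:
    """Pick the archive apt would install from, given {archive: version}.

    Simplified: the highest pin priority wins outright. Tie-breaking on version,
    downgrades (priority >= 1000) and the < 100 rule for installed packages
    are not modeled.
    """
    if not available:
        return None
    return max(available, key=lambda archive: priorities.get(archive, DEFAULT_PRIORITY))


# Hypothetical version strings, for illustration only.
print(candidate_archive({"stable": "1.0", "unstable": "2.0"}))  # stable
print(candidate_archive({"unstable": "2.0"}))                   # unstable
```

When a package exists in both archives, stable wins. When it only exists in unstable (presumably the situation with cephadm here), unstable is used. That is why the low pin lets you pull in one package without upgrading the whole system to unstable.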

Once cephadm was installed, I could set up the Ceph cluster using the following command (inserting the first node's IP address):

# cephadm bootstrap --mon-ip 10.0.100.149

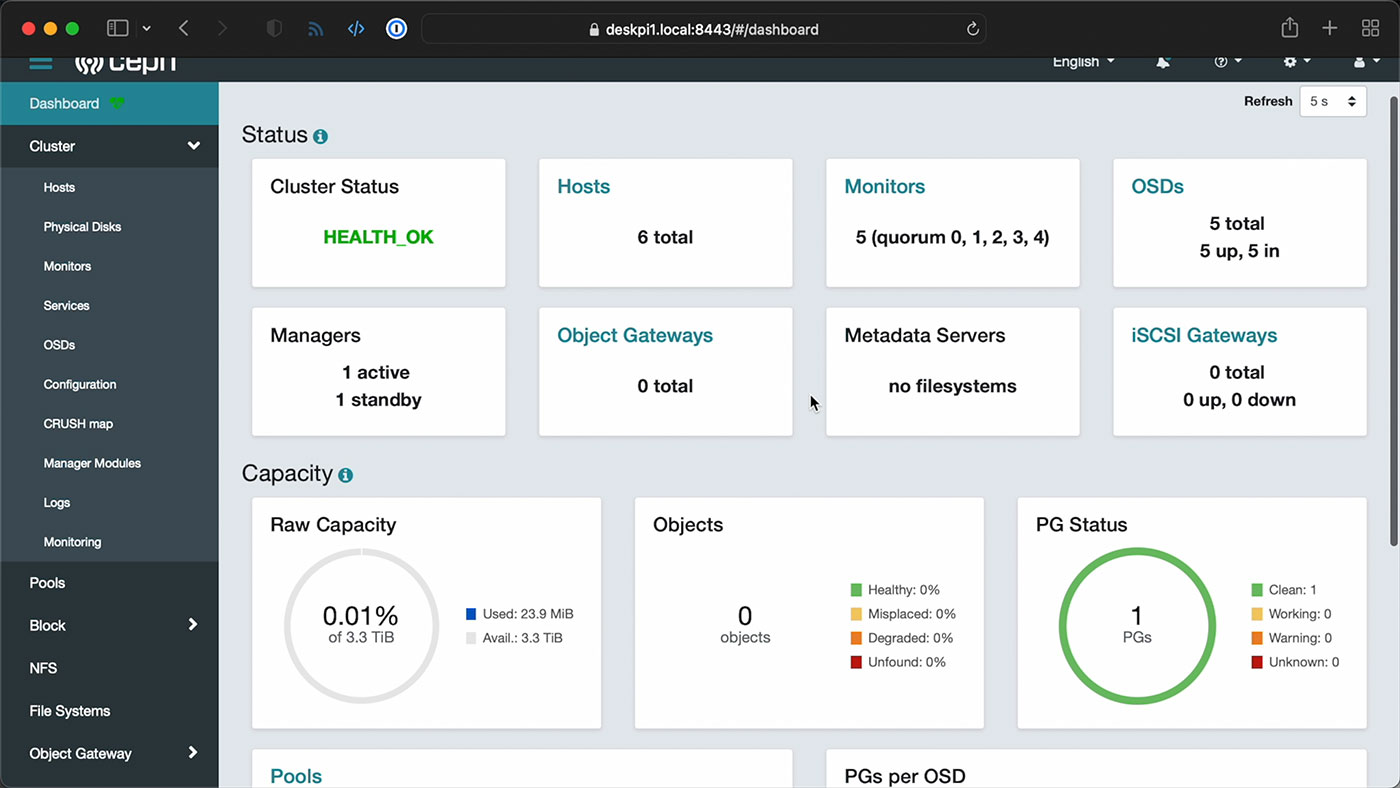

This bootstraps a Ceph cluster, but you still need to add individual hosts to the cluster, and I elected to do that via Ceph's web UI. The bootstrap command outputs an admin login and the dashboard URL, so I went there, logged in, updated my password, and started adding hosts:

I built this Ansible playbook to help with the setup, since there are a couple other steps that have to be run on all the nodes, like copying the Ceph pubkey to each node's root user authorized_keys file, and installing Podman and LVM2 on each node.

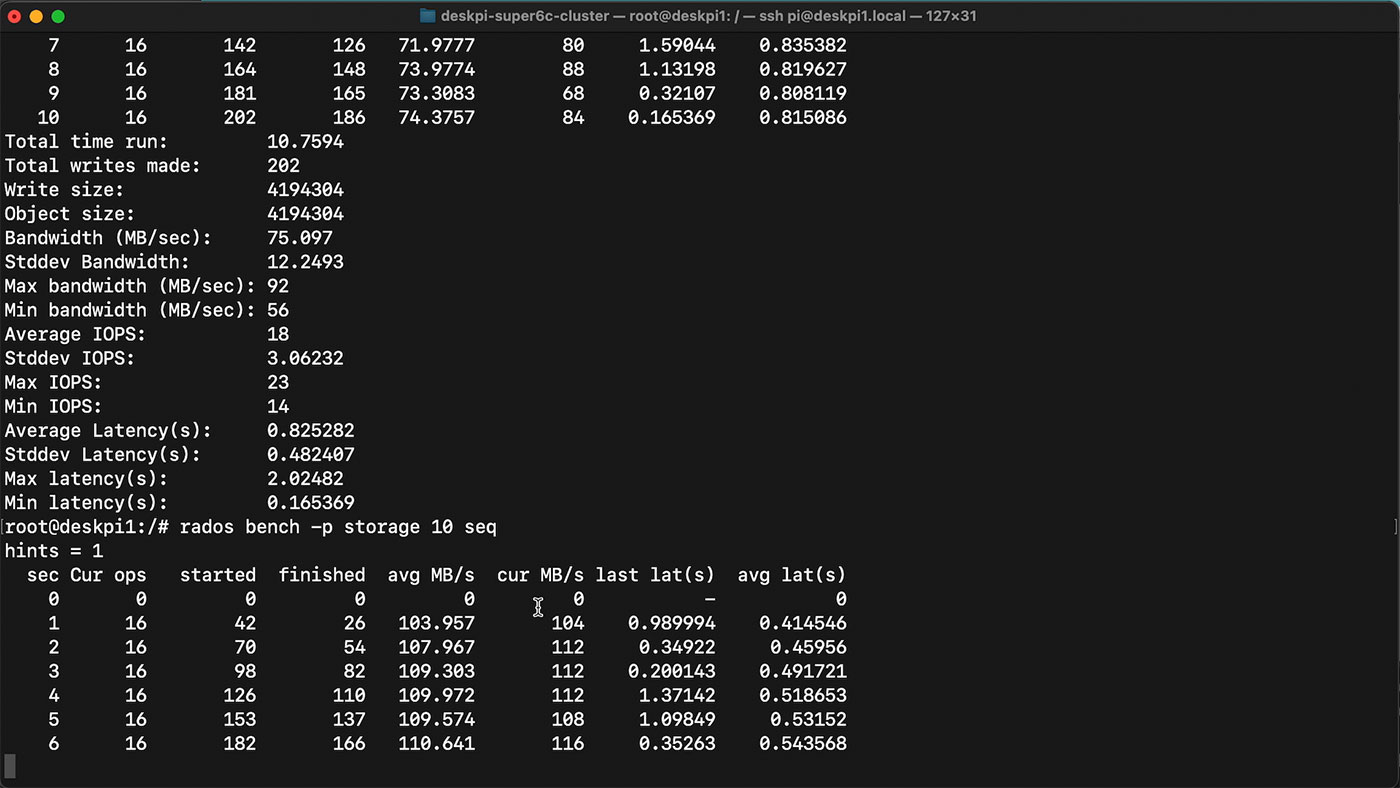

But once that was done, and all my hosts were added, I was able to set up a storage pool on the cluster, using the 3.3 TiB of built-in NVMe storage (distributed across five nodes). I used Ceph's built-in benchmarking tool rados to run some sequential write and read tests:

I was able to get 70-80 MB/sec write speeds on the cluster, and 110 MB/sec read speeds. Not too bad, considering the entire thing's running over a 1 Gbps network. You can't really increase throughput due to the Pi's IO limitations—maybe in the next generation of Pi, we can get faster networking!
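As a rough sanity check on those numbers, the sketch below computes the best-case payload rate of a single 1 Gbps link. It assumes a standard 1500-byte MTU, IPv4 and TCP headers without options, and the usual 38 bytes of Ethernet framing per packet. It also treats the MB/sec figures as decimal megabytes; if a tool reports MiB/s instead, the percentages come out slightly higher.

```python
LINK_GBPS = 1.0
MTU = 1500            # assumption: standard Ethernet MTU, no jumbo frames
IP_TCP_HEADERS = 40   # IPv4 (20 bytes) + TCP (20 bytes), no TCP options
ETH_OVERHEAD = 38     # preamble/SFD 8 + header 14 + FCS 4 + inter-frame gap 12


def line_rate_mb_s(gbps: float) -> float:
    """Raw link rate in decimal megabytes per second."""
    return gbps * 1000 / 8


def tcp_payload_ceiling_mb_s(gbps: float) -> float:
    """Approximate best-case TCP payload rate with full-size frames."""
    payload = MTU - IP_TCP_HEADERS  # 1460 data bytes per frame
    on_wire = MTU + ETH_OVERHEAD    # 1538 bytes of wire time per frame
    return line_rate_mb_s(gbps) * payload / on_wire


ceiling = tcp_payload_ceiling_mb_s(LINK_GBPS)
print(f"raw {line_rate_mb_s(LINK_GBPS):.1f} MB/s, TCP ceiling {ceiling:.1f} MB/s")
for label, measured in (("write, low", 70), ("write, high", 80), ("read", 110)):
    print(f"{label}: {measured} MB/s is {measured / ceiling:.0%} of the ceiling")
```

Under those assumptions the payload ceiling is about 118.7 MB/s. That lines up with the 119 MB/sec reads mentioned in the comments, and the 110 MB/sec read result already exceeds 90% of that ceiling.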

Throw in other features like encryption, though, and the speeds are sure to fall off a bit more. I also wanted to test an NFS mount across the Pis from my Mac, but I kept getting errors when I tried adding the NFS service to the cluster.

If you want to stick with an ARM build, a dedicated Ceph storage appliance like the Mars 400 is still going to obliterate a hobbyist board like this, in terms of network bandwidth and IOPS—despite its slower CPUs. Of course, that performance comes at a cost; the Mars 400 is a $12,000 server!

Video

I produced a full video of the cluster build, with more information about the hardware and how I set it up, and that's embedded below:

For this project, I published the Ansible automation playbooks I set up in the DeskPi Super6c Cluster repo on GitHub, and I've also uploaded the custom IO shield I designed to Thingiverse.

Conclusion

This board seems ideal for experimentation—assuming you can find a bunch of CM4s for list price. Especially if you're dipping your toes into the waters of K3s, Kubernetes, or Ceph, this board lets you throw together up to six physical nodes, without having to manage six power adapters, six network cables, and a separate network switch.

Many people will say "just buy one PC and run VMs on it!", but to that, I say "phooey." Dealing with physical nodes is a great way to learn more about networking, distributed storage, and multi-node application performance—much more so than running VMs inside a faster single node!

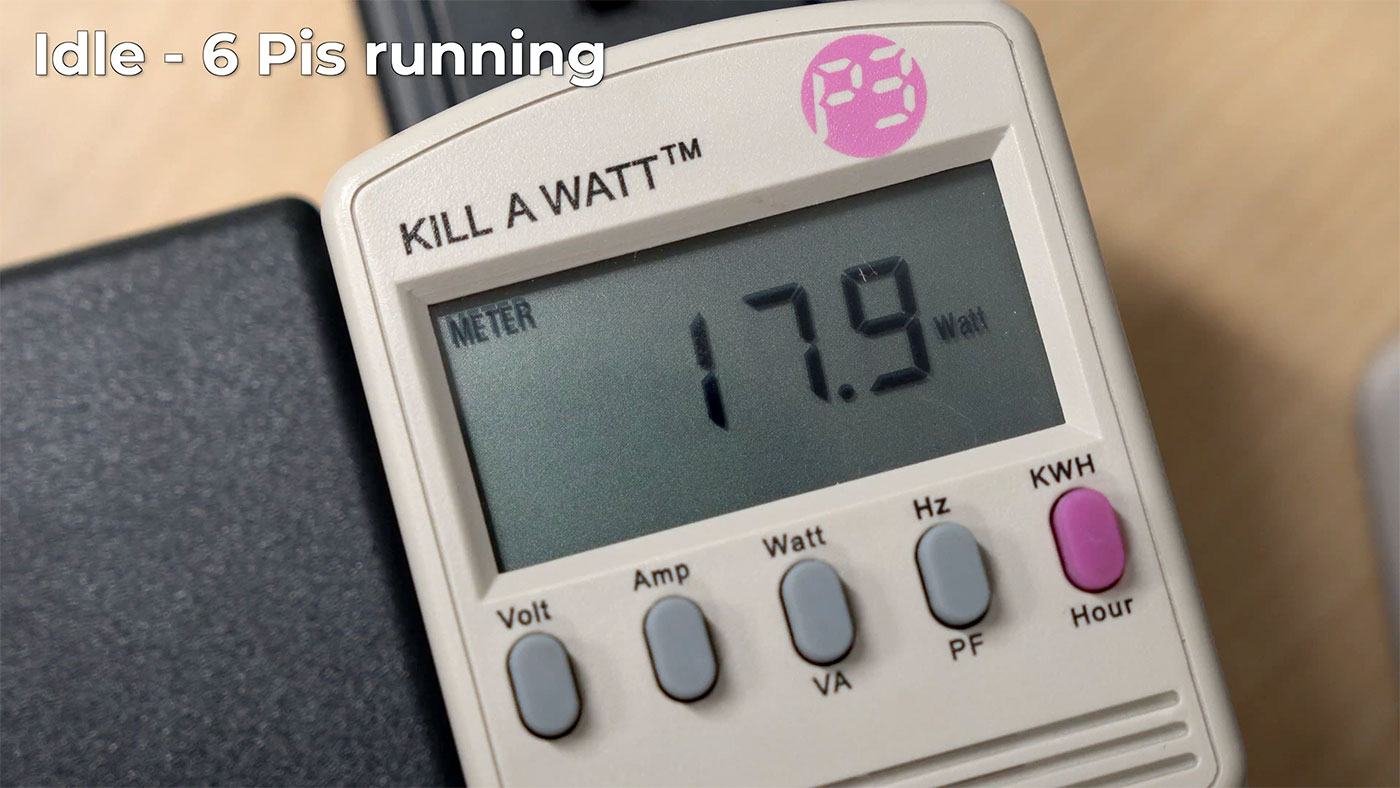

In terms of production usage, the Super6c could be useful in some edge computing scenarios, especially considering it uses just 17W of power for 6 nodes at idle, or about 24W maximum. But honestly, other, more powerful mini/SFF PCs would be more cost-effective right now.
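For a quick sense of scale, this sketch converts the board-level power figures into per-node draw and yearly energy use. It assumes a constant draw around the clock, which real workloads won't sustain, so actual usage should land somewhere between the idle and maximum rows. Board overhead is folded into the per-node number.

```python
NODES = 6
IDLE_W = 17.0  # whole board at idle, six nodes (from the post)
MAX_W = 24.0   # whole board, approximate maximum (from the post)
HOURS_PER_YEAR = 24 * 365


def watts_per_node(total_w: float, nodes: int = NODES) -> float:
    """Average draw per node, assuming the load is shared evenly."""
    return total_w / nodes


def kwh_per_year(total_w: float) -> float:
    """Energy used running at a constant draw for a full (non-leap) year."""
    return total_w * HOURS_PER_YEAR / 1000


for label, watts in (("idle", IDLE_W), ("max", MAX_W)):
    print(f"{label}: {watts_per_node(watts):.2f} W/node, {kwh_per_year(watts):.1f} kWh/year")
```

That works out to under 3 W per node at idle and about 4 W per node at the top end.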

You can buy the DeskPi Super6c on Amazon or on DeskPi's website for $199.99.

Comments

Hey Jeff, the /etc/apt/preferences.d/99pin-unstable file contains an error. One of those blocks should say unstable. Hope you have a great day!

Ah shoot, typo on my part!

Are you sure the contents of /etc/apt/preferences.d/99pin-unstable are correct? You seem to pin a=stable twice.

From the video: The 11W when not powered on is interesting. Maybe the network switch is always turned on when power is applied. Can you use this device "turned off" as a network repeater?

Does this run this site like your Turing farm does, or does it work as a backup, considering its small form factor and capabilities?

Wow this is incredible. It has what I've always wanted from the Turing Pi and that's storage for each of the nodes rather than just some of them.

I wonder how Kubernetes with Longhorn storage would go on it.

I agree, an M.2 slot that works for each Pi is what the Turing Pi is missing. Quite a letdown that they added individual M.2 slots that can only be used with the other, non-Pi modules... :-(

I was able to install Longhorn on k3s using the Super6c without any issues. I had to ditch this solution because Longhorn + SD cards doesn't play well, and I'm too cheap to buy multiple M.2 drives :) I was also able to install NFS in k3s, and it worked great except for remote DBs: SQLite gets corrupted due to NFS limitations. I'm looking at iSCSI in k3s, but I need to include the iSCSI module in the kernel to make one of my CM4s an iSCSI target :) It's been fun :)

Just wanted to see how easy it is to post here. Great website!

Let's hope miners get off their GPU kick and start using these to make their fake money.

Of course, now that the video came out, the price has been jacked up to $249.00 on Amazon with a 1 to 2 month wait.

My only issue with a backplane like this is what happens when it fails. I bought the SOPINE clusterboard years ago and ran my Docker Swarm on it (before I started using Kubernetes). I populated it with all 7 boards. One day I shut it down to move it, plugged it back in, and the onboard network switch had died. Now it sits in the corner in shame (for about 2+ years now), and that was a $99 backplane (plus the cost of all the modules). Imagine that happening with a $249 board like this.

Hi, do you know the read/write performance of the NVMe drives? Direct reads/writes from the CM4 to the NVMe, not over the network, would be interesting. Are those NVMe drives running in AHCI or real NVMe mode? Thanks :)

NVMe direct, and the read/write speeds are documented on this page: https://pipci.jeffgeerling.com/cards_m2/kioxia-xg6-m2-nvme-ssd.html

Over the network, assuming SMB or NFS, you can usually write at over 100 MB/sec (sometimes it seems to get stuck around 80-90 MB/sec), and read at 119 MB/sec.

Jeff, did you try a 5-port SATA adapter in one of the NVMe slots? I just got my Super6c and I'm debating ordering an adapter.

I have not, but since it's a standard M.2 M-key slot, it should work with any PCIe device that matches the pinout, so I see no reason it wouldn't work!

I might order one then. Just have to figure out how to mount the board to make them accessible.

Thanks, Jeff G. I just got one from Amazon basically overnight. I originally tried to order from DeskPi directly, but that's an international shipment, and you have to pay more than the $199 when you receive it. The $249 was probably a little more than the direct order, but it was relatively instant gratification.

Filled mine w/4 CM4s (all I have) and NVMe drives. Planning on a K3s cluster, with kube-vip, Longhorn storage, and etcd redundancy. Ceph sounds interesting, but it seems to me that "all in one" may be better... So, I have an Ansible script that installs lvm2, adds the k3s config.txt requirements, and splits the NVMe into 40% for K3s & kubelet storage (with mounts and links) and 60% as a raw volume ready for Longhorn storage. Going to be working on some Ansible scripts to safely patch that setup, taking one node at a time, etc.

Do you have only one NVMe, or four NVMes, one for each of the nodes? I assume it's four NVMes just because you mentioned Longhorn :) Was there any luck with booting from NVMe directly? I use CM4 Lite (8GB RAM, no Wi-Fi/Bluetooth) and cannot make it work without an SD card. When I connect HDMI to the #1 CM4 and have only NVMe, the screen is blank. If I use NVMe + SD, it boots from SD and I do see the NVMe drive. If there are no storage devices connected, I can see that the CM4 cycles through the boot order 0xf25641: SD, USB-MSD, BCM-USB-MSD, PCI/nvme, and finally Network boot. According to https://www.raspberrypi.com/documentation/computers/raspberry-pi.html#f…: "If you are using CM4 lite, remove the SD card and the board will boot from the NVMe disk". Bootloader version: 507b2360 2022/04/06. I'll try to upload the bootloader tomorrow. Please let me know if you have CM4 Lites and if they boot from NVMe, thx!

Sorry for the delay. One NVMe per Pi/CM4. I have CM4s with eMMC, but if you don't, then use the SD card. I think it's easiest to boot from eMMC or the SD card and put your data on the NVMe... I ended up doing some 'funky' links from /var to specific k3s directories that really fill up. I probably should have given k3s 25% of the NVMe or less, on a 240 GB NVMe. Basically, the NVMe is set up with LVM, with two volumes:

1) /k3s-data -- for kubernetes stuff

2) mounted to /var/lib/longhorn -- for longhorn.

then in /k3s-data make directories - kubelet and rancher

then symlink /var/lib/rancher --> /k3s-data/rancher

and /var/lib/kubelet --> /k3s-data/kubelet

I didn't find the other /var directories filling up enough to be worth moving to the data volume. I may be wrong, but I understand they don't write much... but I could be TOTALLY off.

(I've seen some people try to move /run/k3s to the data volume... but I haven't poked into that enough.)
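The comment doesn't include the Ansible code itself, so here is a minimal dry-run sketch of the same layout that only prints shell commands instead of running them. The device path, the volume group name `k3svg`, and the choice of ext4 are assumptions, not details from the comment. The split percentage is a parameter, since the commenter suspects 25% or less would have been enough, and `/etc/fstab` entries to make the mounts persistent are left out.

```python
from typing import List

DEVICE = "/dev/nvme0n1"  # assumption: the whole NVMe disk is given to LVM
VG = "k3svg"             # hypothetical volume group name


def lvm_layout_commands(k3s_percent: int = 40, device: str = DEVICE,
                        vg: str = VG) -> List[str]:
    """Return (not run) shell commands for a k3s-data / Longhorn LVM split.

    Assumes it runs before k3s is installed, so /var/lib/rancher and
    /var/lib/kubelet don't exist yet and can be created as symlinks.
    """
    if not 0 < k3s_percent < 100:
        raise ValueError("k3s_percent must be between 1 and 99")
    return [
        f"pvcreate {device}",
        f"vgcreate {vg} {device}",
        # k3s/kubelet volume sized as a percentage of the whole volume group
        f"lvcreate -l {k3s_percent}%VG -n k3s-data {vg}",
        # whatever is left over goes to Longhorn
        f"lvcreate -l 100%FREE -n longhorn {vg}",
        f"mkfs.ext4 /dev/{vg}/k3s-data",  # ext4 is an assumption
        f"mkfs.ext4 /dev/{vg}/longhorn",
        "mkdir -p /k3s-data /var/lib/longhorn",
        f"mount /dev/{vg}/k3s-data /k3s-data",
        f"mount /dev/{vg}/longhorn /var/lib/longhorn",
        "mkdir -p /k3s-data/rancher /k3s-data/kubelet",
        # point the directories that fill up fastest at the data volume
        "ln -s /k3s-data/rancher /var/lib/rancher",
        "ln -s /k3s-data/kubelet /var/lib/kubelet",
    ]


for command in lvm_layout_commands():
    print(command)
```

Generating the commands rather than executing them makes it easy to review the plan (or paste it into an Ansible `command` task) before touching a disk.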

This looks really interesting. One question, though: will that switch chip transparently pass through 802.1Q VLANs?

Strange problem with BOOT_ORDER... or should it be called BOOT_DISORDER?

SUMMARY: BOOT_ORDER does not appear to be deterministic. Problems may be partially caused by device timeouts and fallbacks (sound familiar to USB storage device users??), but it seems to be worse than that. For example, each time I (you??) boot, we can get different results, even IF our BOOT_ORDER specifies ONLY one device type!

DEMONSTRATION OF PROBLEM:

My story began when I populated a super6c with 6 CM4s, all of which had on-board mmc memory.

I flashed each CM4 with an OS, and all was good. Systems booted and responded well, and I learned to use Ansible from Jeff Geerling's book. BOOT_ORDER could do no wrong... with only one plausible boot image to be found anywhere.

Eventually I got hold of a pile of NVMe cards. I used the Raspberry Pi Imager to set up the NVMe cards, and then I tried (with no change to my "default" BOOT_ORDER=0xf25641) to install them to boot from the bottom of my Super6c. I had read "somewhere" that they would have priority "somehow," so I was curious to see what happened.

Surprisingly, about 50% of the devices booted from NVMe and 50% from mmc... and which device booted from which storage was RANDOM on any given reboot. Worse than that, sometimes running df revealed that various combinations of mmc and NVMe mounts could appear after the boot completed!?!

Random df results included the reasonable results like a clean nvme boot:

/dev/nvme0n1p2 mounted on /

/dev/nvme0n1p1 mounted on /boot/firmware

and clean mmc boot:

/dev/mmcblk0p2 mounted on /

/dev/mmcblk0p1 mounted on /boot/firmware

as well as amazing mixtures, with half(?) from nvme, and half(?) from mmc:

/dev/mmcblk0p2 mounted on /

/dev/nvme0n1p1 mounted on /boot/firmware

and:

/dev/nvme0n1p2 mounted on /

/dev/mmcblk0p1 mounted on /boot/firmware

I even once had nothing(?) mounted on /boot/firmware (which left me wondering if that mount was just for show/record-keeping; I'm quite curious as to WHAT it really means and what it's used for).

I wouldn't have been bothered if BOOT_ORDER had been complied with and the SD storage got chosen 100% of the time, so I guessed that EVEN the on-board SD mmc storage could be "slow" to get ready, and that we MIGHT have to run through the list more than once. After all, the final "f" in the boot order induces a "try the list again."

At that point I created an updated BOOT_ORDER=0xf25416, flashed it in via the rpiboot.exe, and experimented again with a series of reboots. The good(?) news is that the probability of correct results improved, but didn't clear up completely!?!

Finally, I decided to give up the potential for a fall-back to SD (mmc), and changed to BOOT_ORDER=0xf6. That BOOT_ORDER requests "try for NVME... and if it fails... try again..." This at least got me a consistent mount of:

/dev/nvme0n1p2 mounted on /

but then, randomly (about 1/3 probability, impacting ALL of my CM4s in one reboot or another), rebooting produced:

/dev/mmcblk0p1 mounted on /boot/firmware

instead of the "expected":

/dev/nvme0n1p1 mounted on /boot/firmware

Any comments on how this is all happening?? What does it mean?

-----------------------------------------------------------

DEBUGGING CLARIFICATIONS FOR FOLKS WITH SUGGESTIONS

I'm currently booting into Ubuntu Server 23.10 provided by Raspberry Pi Imager (because I'm using the same OS on my RPI 5 and other devices in my cluster... and it seemed simpler and more consistent).

To close the loop with other pieces of debug info... before you ask... yes... I'm using a recent EEPROM courtesy of a lot of Ubuntu apt update and upgrade operations (and reboots). This was verified (with help of Ansible) on all my devices as being (please assume that "super" is the group of IP addresses found on my Super6c board):

>> ansible super -a "vcgencmd version "

[each internal LAN IP] | CHANGED | rc=0 >>

Mar 17 2023 10:50:39

Copyright (c) 2012 Broadcom

version 82f3750a65fadae9a38077e3c2e217ad158c8d54 (clean) (release) (start)

In case you're wondering if I REALLY did get rpiboot.exe to work each time... I left the various BOOT_ORDER lines commented out as I progressed to each new BOOT_ORDER trial, and this can be seen (identically) in all my CM4s via:

>> ansible super -a "vcgencmd bootloader_config "| grep BOOT_ORDER

# Boot Order Codes, from https://www.raspberrypi.com/documentation/computers/raspberry-pi.html#B…

#BOOT_ORDER=0xf25641

#BOOT_ORDER=0xf25416 # Ensure NVME comes first

BOOT_ORDER=0xf6 # NVME is only acceptable item

It is IMO rather amazing that, with that last line as the current BOOT_ORDER, I ever get an mmc mount... and it is even more surprising that it is random from boot to boot, and device to device.
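To check what each of those settings actually asks for, here is a small Python decoder. It is a sketch based on the mode table in the Raspberry Pi bootloader documentation: the nibbles are read right to left, least significant first, and codes it doesn't know are reported as RESERVED. Note that BOOT_ORDER only controls where the bootloader looks for boot files. Which partitions Linux then mounts at / and /boot/firmware is decided later by the OS (its kernel command line and /etc/fstab), so decoding the setting can't by itself explain mixed mounts.

```python
from typing import List

# Mode codes as listed in the Raspberry Pi bootloader documentation.
BOOT_MODES = {
    0x0: "SD CARD DETECT",
    0x1: "SD CARD",      # on a CM4 with eMMC, this is the eMMC
    0x2: "NETWORK",
    0x3: "RPIBOOT",
    0x4: "USB-MSD",
    0x5: "BCM-USB-MSD",
    0x6: "NVME",
    0x7: "HTTP",         # only on newer bootloader releases
    0xE: "STOP",
    0xF: "RESTART",      # start again from the first mode
}


def decode_boot_order(value: str) -> List[str]:
    """Return boot modes in the order tried (least significant nibble first)."""
    order = int(value, 16)
    modes = []
    while order:
        modes.append(BOOT_MODES.get(order & 0xF, "RESERVED"))
        order >>= 4
    return modes


for setting in ("0xf25641", "0xf25416", "0xf6"):
    print(setting, "->", ", ".join(decode_boot_order(setting)))
```

Decoded this way, 0xf25641 tries SD (the eMMC on these CM4s) before NVMe, 0xf25416 puts NVMe first, and 0xf6 tries only NVMe before restarting.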

Can anyone else repro this "feature" of BOOT_ORDER? Note it is easier to do on the super6c, as you have standard/consistent hardware, and you get 6 results from each:

>> ansible super -a reboot -b

command, before "checking" via a:

ansible super -a df | grep "\(CHANGED\)\|\(mmcb\)"
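If you'd rather not eyeball the grep output, a sketch like this classifies one node's plain `df` output by which device holds / and which holds /boot/firmware. It assumes the default `df` column layout, with "Mounted on" as the last column, and mount points without spaces. The function names are hypothetical, and it should be fed one host's output at a time rather than the combined Ansible stream.

```python
from typing import Dict


def mounts_from_df(df_output: str) -> Dict[str, str]:
    """Map mount point -> device for the /dev/* lines of plain `df` output."""
    mounts: Dict[str, str] = {}
    for line in df_output.splitlines():
        fields = line.split()
        if fields and fields[0].startswith("/dev/"):
            # First column is the device, last column is "Mounted on".
            mounts[fields[-1]] = fields[0]
    return mounts


def storage_kind(device: str) -> str:
    """Classify a block device path by the naming patterns seen in this thread."""
    if device.startswith("/dev/nvme"):
        return "nvme"
    if device.startswith("/dev/mmcblk"):
        return "mmc"
    return "other"


def classify_boot(df_output: str) -> str:
    """Return 'nvme', 'mmc', 'other', 'mixed', or 'incomplete' for one node."""
    mounts = mounts_from_df(df_output)
    root = mounts.get("/")
    firmware = mounts.get("/boot/firmware")
    if root is None or firmware is None:
        return "incomplete"  # e.g. nothing mounted on /boot/firmware
    kinds = {storage_kind(root), storage_kind(firmware)}
    return kinds.pop() if len(kinds) == 1 else "mixed"
```

On a node itself, passing `subprocess.run(["df"], capture_output=True, text=True).stdout` to `classify_boot` would give one label per reboot, which makes it easier to tally how often each outcome shows up.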

Can anyone explain the significance of /boot/firmware mounting??

Thanks in advance!

Jim

I'm curious if you've had the chance to experiment with a full-fledged Kubernetes Cluster on DeskPi or if you're considering producing a video on the topic.

Thanks