A few months ago, I posted a video titled Enterprise SAS RAID on the Raspberry Pi... but I never actually showed a SAS drive in it. And soon after, I posted another video, The Fastest SATA RAID on a Raspberry Pi.

Well now I have actual enterprise SAS drives running on a hardware RAID controller on a Raspberry Pi, and it's faster than the 'fastest' SATA RAID array I set up in that other video.

A Broadcom engineer named Josh watched my earlier videos and realized the ancient LSI card I was testing would not likely work with the ARM processor in the Pi, so he was able to send two pieces of kit my way:

- A Broadcom MegaRAID 9460-16i Tri-Mode storage controller

- A Broadcom-designed reference 'Universal Backplane' following the SFF-TA-1005 standard

After a long and arduous journey involving multiple driver revisions and UART debugging on the card, I was able to bring up multiple hardware RAID arrays on the Pi.

This blog post is also available in video form on my YouTube channel.

SAS RAID

But what is SAS RAID, and what makes hardware RAID any better than the software RAID I used in my SATA video?

The drives you might use in a NAS or a server today usually fall into three categories:

- SATA

- SAS

- PCI Express NVMe

All three types can use solid state storage (SSD) for high IOPS and fast transfer speeds.

SATA and SAS drives might also use rotational storage, which offers higher capacity at lower prices, though there's a severe latency tradeoff with that kind of drive.

RAID, which stands for Redundant Array of Independent Disks, is a method of taking two or more drives and putting them together into a volume that your operating system can see as if it were just one drive.

RAID can help with:

- Redundancy: A hard drive (or multiple drives, depending on RAID type) can fail and you won't lose access to your data immediately.

- Performance: multiple drives can be 'striped' to increase read or write throughput (again, depending on RAID type).

Extra caching configuration (to separate RAM or even to dedicated faster 'cache' drives) can speed things up even more than is possible on the main drives themselves. Hardware RAID can also provide better data protection, with separate flash storage that preserves cached write data if power is lost.

Caveat: If you can fit all your data on one hard drive, already have a good backup system in place, and don't need maximum availability, you probably don't need RAID, though.

I go into a lot more detail on RAID itself in my Raspberry Pi SATA RAID NAS video, so check that out to learn more.

Now, back to SATA, SAS, and NVMe.

All three of these describe interfaces used for storage devices. And the best thing about a modern storage controller like the 9460-16i is that you can connect to all three through one single HBA (Host-Bus Adapter).

If you can spend a couple thousand bucks on a fast PC with lots of RAM, software RAID solutions like ZFS or BTRFS offer a lot of great features, and are pretty reliable.

But on a system like my Raspberry Pi, software-based RAID takes up much of the Pi's CPU and RAM, and doesn't perform as well. (See this excellent post about /u/rigg77's ZFS experiment).

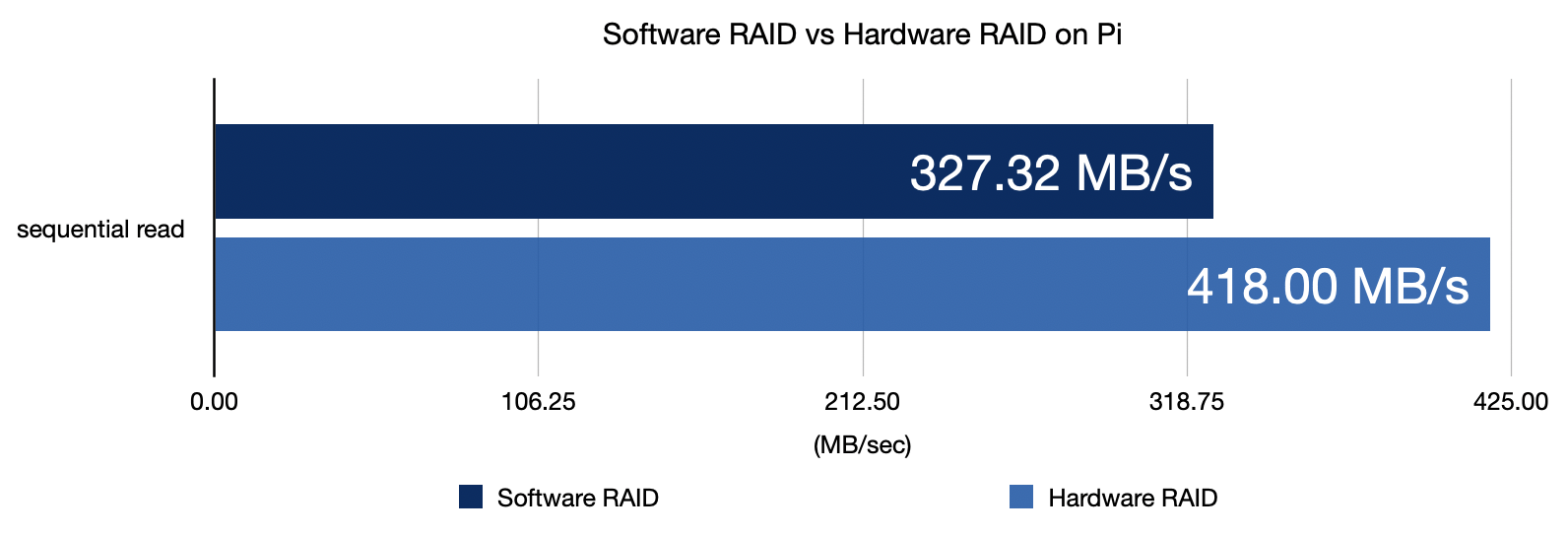

The fastest disk speed I could get with software mdadm-based RAID was about 325 MB/sec, and that was with RAID 10. Parity calculations may make that maximum speed even lower!

Using hardware RAID allowed me to get over 400 MB/sec. That's a 20% performance increase, AND it leaves the Pi's CPU free to do other things.
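As a quick sanity check on that percentage, here is a minimal sketch of the arithmetic. It uses 400 MB/sec as a lower bound for the hardware RAID figure (the text only says "over 400"), and the function name is just for illustration.

```python
def percent_increase(baseline: float, improved: float) -> float:
    """Return the relative gain of `improved` over `baseline`, in percent."""
    return (improved - baseline) / baseline * 100.0


software_raid_mb_s = 325.0  # mdadm RAID 10 figure quoted above
hardware_raid_mb_s = 400.0  # lower bound of the "over 400 MB/sec" figure

gain = percent_increase(software_raid_mb_s, hardware_raid_mb_s)
print(f"Hardware RAID gain: at least {gain:.1f}%")
```

So "20%" is, if anything, a slightly conservative way to put it.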

We'll get more into performance later, but first, I have to address the elephant in the room.

Why enterprise RAID on a Pi?

People are always asking why I test all these cards—in this case, a storage card that costs $600 used—on low-powered Raspberry Pis. I even have a 10th-gen Intel desktop in my office, so, why not use that?

Well, first of all, it's fun, I enjoy the challenge, and I get to learn a lot since failure teaches me a lot more than easy success.

But with this card, there are two other good reasons:

First: it's great for a little storage lab.

In a tiny corner of my desk, I can put 8 drives, an enterprise RAID controller, my Pi, and some other gear for testing. When I do the same thing with my desktop, I quickly run out of space. The burden of having to go over to my other "desktop computer" desk to test means I am less likely to set things up on a whim to try out some new idea.

Second: using hardware RAID takes the IO burden off the Pi's already slow processor.

Having a fast dedicated RAID chip and an extra 4 GB of DDR4 cache for storage gives the Pi reliable, fast disk IO.

If you don't need to pump through gigabytes per second, the Pi and MegaRAID together are more energy efficient than running software RAID on a faster CPU. That setup used 10-20W of power.

My Intel i3 desktop running by itself with no RAID card or storage attached idles at 25W but usually hovers around 40W—twice the power consumption, without a storage card installed.
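To see what that difference adds up to over a year of always-on use, here is a rough sketch. It assumes constant draw at the upper end of the Pi setup's 10-20W range and the desktop's typical 40W. Real usage will vary, and no electricity price is assumed.

```python
HOURS_PER_YEAR = 24 * 365


def annual_kwh(watts: float) -> float:
    """Energy used in a year at a constant power draw, in kilowatt-hours."""
    return watts * HOURS_PER_YEAR / 1000.0


pi_raid_watts = 20.0   # upper end of the 10-20W range for the Pi + MegaRAID
desktop_watts = 40.0   # typical draw of the i3 desktop, with no storage card

pi_kwh = annual_kwh(pi_raid_watts)
desktop_kwh = annual_kwh(desktop_watts)
print(f"Pi + MegaRAID: {pi_kwh:.1f} kWh/year")
print(f"i3 desktop:    {desktop_kwh:.1f} kWh/year")
print(f"Difference:    {desktop_kwh - pi_kwh:.1f} kWh/year")
```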

A Pi-based RAID solution isn't going to take over Amazon's data centers, though. The Pi just isn't built for that. But it is a compelling storage setup that was never possible until the Compute Module 4 came along.

Broadcom MegaRAID 9460-16i

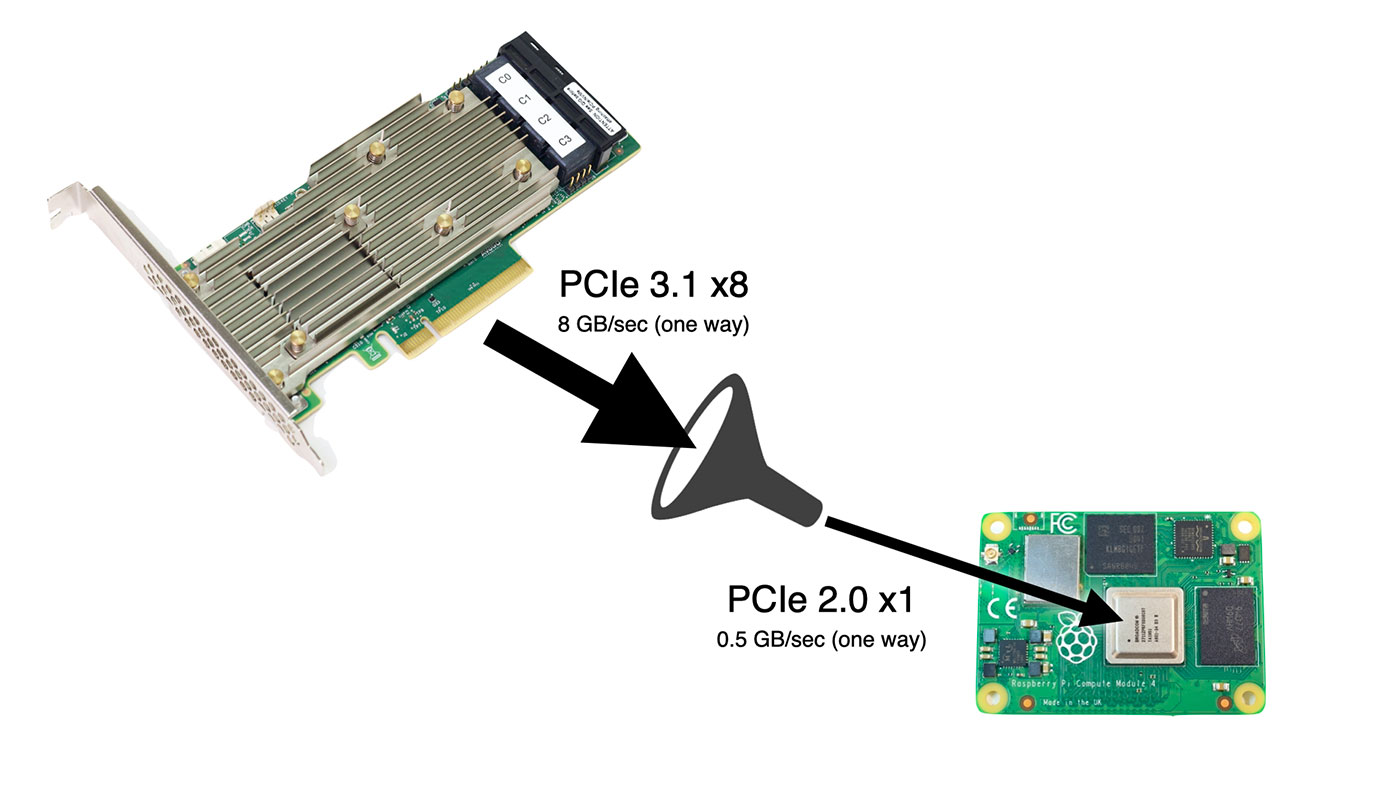

The MegaRAID card Josh sent is PCIe Gen 3.1 and supports x8 lanes of PCIe bandwidth.

Unfortunately, the Pi can only handle x1 lane at Gen 2 speeds, meaning you can't get the maximum 6+ GB/sec of storage throughput—only 1/12 that. But the Pi can use four fancy tricks this card has that the SATA cards I tested earlier don't:

- SAS RAID-on-Chip, a computer in its own regard, taking care of all the RAID storage operations. The Pi's slow CPU is saved from having to manage RAID operations.

- 4 GB DDR4 SDRAM cache, speeding up IO on slower drives, and saving the Pi's precious 1/2/4/8 GB of RAM.

- Optional CacheVault flash backup: plug in a super capacitor, and if there's a power outage, it dumps all the memory in the card's write cache to a built-in flash storage chip.

- Tri-mode ports allow you to plug any kind of drive into the card (SATA, SAS, or NVMe), and it will work with it.

It also does everything internal to the RAID-on-Chip, so even if you plug in multiple NVMe drives, you won't bottleneck the Pi's poor little CPU (with its severely limited IO bandwidth). Even faster processors can run into bandwidth issues when using multiple NVMe drives.
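To put rough numbers on the gap between the card's x8 Gen 3 link and the Pi's x1 Gen 2 link, here is a back-of-the-envelope sketch. It uses the standard PCIe line rates and encodings (5 GT/s with 8b/10b for Gen 2, 8 GT/s with 128b/130b for Gen 3) and ignores packet and protocol overhead, so real-world throughput lands lower than these theoretical figures. The function name is just for illustration.

```python
def pcie_link_gb_s(gigatransfers_per_s: float, payload_bits: int,
                   encoded_bits: int, lanes: int) -> float:
    """Theoretical one-direction link bandwidth in GB/s (decimal gigabytes)."""
    usable_gbit_per_lane = gigatransfers_per_s * payload_bits / encoded_bits
    return usable_gbit_per_lane * lanes / 8.0  # 8 bits per byte


pi_link = pcie_link_gb_s(5.0, 8, 10, lanes=1)        # Gen 2 x1 (the Pi)
card_link = pcie_link_gb_s(8.0, 128, 130, lanes=8)   # Gen 3 x8 (the card)

print(f"Pi link:   {pi_link:.2f} GB/s")
print(f"Card link: {card_link:.2f} GB/s")
print(f"Ratio:     {card_link / pi_link:.1f}x")
```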

Oh, and did I mention it can connect up to 24 NVMe drives or a whopping 240 SAS or SATA drives to the Pi?

I, for one, would love to see a 240 drive petabyte Pi NAS...

Getting the card to work

Josh sent over a driver, some helpful compilation suggestions, and some utilities with the card.

I had some trouble compiling the driver at first, and ran into a couple problems immediately:

- The raspberrypi-kernel-headers package didn't exist for the 64-bit Pi OS beta (it has since been created), so I had to compile my own headers for the 64-bit kernel.

- Raspberry Pi OS didn't support MSI-X, but luckily Phil Elwell committed a kernel tweak to Pi OS that enabled it (at least at a basic level) in response to a forum topic on getting the Google Coral TPU working over PCIe.

With those two issues resolved, I cross-compiled the Pi OS kernel again, and ran into another issue: the driver assumed it would have CONFIG_IRQ_POLL=y for IRQ polling functionality, but that wasn't set by default for the Pi kernel, so I had to recompile again to get that option working.
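A check like the following can catch a missing option before spending an hour on a rebuild. This is a minimal sketch that parses kernel config text. The sample config below is made up for illustration, and on a real system the text would have to come from wherever your kernel exposes its config (for example /proc/config.gz, if the kernel was built to provide it, which is an assumption).

```python
def kernel_option_state(config_text: str, option: str) -> str:
    """Return the option's value ('y', 'm', ...) or 'unset' if it is not enabled."""
    for line in config_text.splitlines():
        line = line.strip()
        if line == f"# {option} is not set":
            return "unset"
        if line.startswith(f"{option}="):
            return line.split("=", 1)[1]
    return "unset"


# Hypothetical config excerpt, not copied from a real Pi kernel.
sample_config = """\
CONFIG_PCI_MSI=y
# CONFIG_IRQ_POLL is not set
"""

for name in ("CONFIG_PCI_MSI", "CONFIG_IRQ_POLL"):
    print(f"{name}: {kernel_option_state(sample_config, name)}")
```

If the kernel does expose /proc/config.gz, the text can be read with `gzip.open("/proc/config.gz", "rt").read()` and passed to the same function.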

Finally, thinking I was in the clear, I found that the kernel headers and source from my cross-compiled kernel weren't built for ARM64 like the kernel itself, and rather than continue debugging my cross-compile environment, I bit the bullet and did the full 1-hour compile on the Raspberry Pi itself.

An hour later, the driver compiled without errors for the first time.

I excitedly ran sudo insmod megaraid_sas.ko, and then... it hung, and after five minutes, printed the following in dmesg:

[ 372.867846] megaraid_sas 0000:01:00.0: Init cmd return status FAILED for SCSI host 0

[ 373.054122] megaraid_sas 0000:01:00.0: Failed from megasas_init_fw 6747

Things were getting serious, because two other Broadcom engineers joined a conference call with Josh and me, and they had me pull the serial UART output off the card to debug PCIe memory addressing issues!

We found the driver would work on 32-bit Pi OS, but not the 64-bit beta. Which was strange, since in my experience drivers have often worked better under the 64-bit OS.

A few days later, a Broadcom driver engineer sent over a patch that fixed the problem. It was related to the use of the writeq function, which is apparently not well supported on the Pi. Josh filed a bug report in the Pi kernel issue queue about it: writeq() on 64 bit doesn't issue PCIe cycle, switching to two writel() works.
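The idea behind that workaround can be modeled in a few lines: one 64-bit register write becomes two 32-bit writes, one for each half of the value. This is only a conceptual sketch, not the actual Broadcom patch. The register offset, the dictionary standing in for device registers, and the low-half-first ordering are all assumptions made for illustration.

```python
from typing import Dict, Tuple

MASK32 = 0xFFFFFFFF


def split_u64(value: int) -> Tuple[int, int]:
    """Split a 64-bit value into its (low, high) 32-bit halves."""
    if not 0 <= value < (1 << 64):
        raise ValueError("value must fit in 64 bits")
    return value & MASK32, (value >> 32) & MASK32


def write_u64_as_two_u32(registers: Dict[int, int], offset: int, value: int) -> None:
    """Model one 64-bit write as two 32-bit writes (low half first, by assumption)."""
    low, high = split_u64(value)
    registers[offset] = low        # stands in for writel(low, addr)
    registers[offset + 4] = high   # stands in for writel(high, addr + 4)


regs: Dict[int, int] = {}
write_u64_as_two_u32(regs, 0x40, 0x1122334455667788)  # hypothetical offset and value
print({hex(k): hex(v) for k, v in regs.items()})
```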

Anyways, the driver finally worked, and I could see the attached storage enclosure being identified in the dmesg output!

Setting up RAID with StorCLI

StorCLI is a utility for managing RAID volumes on the MegaRAID card, and Broadcom has a comprehensive StorCLI reference on their website.

The command I used to set up a 4-drive SAS RAID 5 array went like this:

sudo ./storcli64 /c0 add vd r5 name=SASR5 drives=97:4-7 pdcache=default AWB ra direct Strip=256

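- I named the r5 array SASR5.

- The array uses drives 4-7 in storage enclosure ID 97.

- There are a few caching options: pdcache=default leaves each drive's own cache at its default setting, AWB selects always-write-back, ra enables read-ahead, and direct selects direct IO.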

- I set the strip size to 256 KB (which is typical for HDDs—64 KB would be more common for SSDs).
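To make the drives=97:4-7 notation concrete, here is a small sketch that expands it into individual enclosure and slot pairs. It only handles the single-slot and slot-range forms used in this post, and the helper name is made up for illustration (it is not part of StorCLI).

```python
from typing import List, Tuple


def expand_drive_spec(spec: str) -> List[Tuple[int, int]]:
    """Expand 'ENCLOSURE:SLOT' or 'ENCLOSURE:FIRST-LAST' into (enclosure, slot) pairs."""
    enclosure_text, slots_text = spec.split(":", 1)
    enclosure = int(enclosure_text)
    if "-" in slots_text:
        first, last = (int(part) for part in slots_text.split("-", 1))
    else:
        first = last = int(slots_text)
    if last < first:
        raise ValueError("slot range must be ascending")
    return [(enclosure, slot) for slot in range(first, last + 1)]


drives = expand_drive_spec("97:4-7")
print(drives)
print(f"{len(drives)} drives in the array")
```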

I created two RAID 5 volumes: one with four Kingston SA400 SATA SSDs, and another with four HP ProLiant 10K SAS drives.

I used lsblk -S to make sure the new devices, sda and sdb, were visible on my system. Then I partitioned and formatted them with:

sudo fdisk /dev/sdX

sudo mkfs.ext4 /dev/sdX

At this point, I had a 333 GB SSD array, and an 836 GB SAS array. I mounted them and made sure I could read and write to them.
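Those sizes line up with how RAID 5 works: one drive's worth of space goes to parity, so usable capacity is (number of drives - 1) times the drive size. The sketch below assumes 120 GB SSDs and 300 GB SAS drives. The post doesn't state the drive sizes, so those are guesses, as is the idea that the reported sizes are in binary units.

```python
def raid5_usable_gb(drive_count: int, drive_size_gb: float) -> float:
    """Usable RAID 5 capacity in decimal GB: one drive's worth is used for parity."""
    if drive_count < 3:
        raise ValueError("RAID 5 needs at least three drives")
    return (drive_count - 1) * drive_size_gb


def gb_to_gib(gigabytes: float) -> float:
    """Convert decimal gigabytes (10^9 bytes) to binary gibibytes (2^30 bytes)."""
    return gigabytes * 1e9 / 2**30


# Drive sizes are assumptions; they are not stated in the post.
ssd_array_gb = raid5_usable_gb(4, 120.0)
sas_array_gb = raid5_usable_gb(4, 300.0)

print(f"SSD array: {ssd_array_gb:.0f} GB = {gb_to_gib(ssd_array_gb):.1f} GiB")
print(f"SAS array: {sas_array_gb:.0f} GB = {gb_to_gib(sas_array_gb):.1f} GiB")
```

Under those assumptions the results land within a few GiB of the 333 and 836 figures above. The small remainder would plausibly be metadata and rounding.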

Storage on boot

I also wanted to make sure the storage arrays were available at system boot, so I could share them automatically via NFS, so I installed the compiled driver module into my kernel:

- I copied the module into the kernel drivers directory for my compiled kernel:

sudo cp megaraid_sas.ko /lib/modules/$(uname -r)/kernel/drivers/

- I added the module name, 'megaraid_sas', to the end of the /etc/modules file:

echo 'megaraid_sas' | sudo tee -a /etc/modules

- I ran sudo depmod and rebooted, and after boot, everything came up perfectly.

One thing to note is that a RAID card like this can take a minute or two to initialize, since it has its own initialization process. So boot times for the Pi will be a little longer if you want to wait for the storage card to come online first.
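If something at boot (an NFS export script, say) needs the array to be present, one simple approach is to poll for the block device before continuing. This is a minimal sketch, not what was used for this post. The 180-second default timeout is an arbitrary choice based on the "minute or two" above, and the device name in the usage comment is an assumption.

```python
import os
import time


def wait_for_path(path: str, timeout_s: float = 180.0, poll_s: float = 1.0) -> bool:
    """Poll until `path` exists; return True if it appeared before the timeout."""
    deadline = time.monotonic() + timeout_s
    while True:
        if os.path.exists(path):
            return True
        if time.monotonic() >= deadline:
            return False
        time.sleep(poll_s)


# Example (device name is an assumption; use whatever lsblk -S reports):
# if wait_for_path("/dev/sda"):
#     print("RAID volume is online")
```

On a systemd-based OS, mount options and unit dependencies are probably the more idiomatic way to express this. The loop above is just the simplest thing that could work.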

A note on power supplies: I used a couple different power supplies when testing the HBA. I found that if I used a lower-powered 12V 2A power supply, the card seemed to not get enough power, and would endlessly reboot itself. I switched to this 12V 5A power supply, and the card ran great. (I did not attempt using an external powered 'GPU riser', since I have had mixed experiences with them.)

Performance

With this thing fully online, I tested performance with fio.

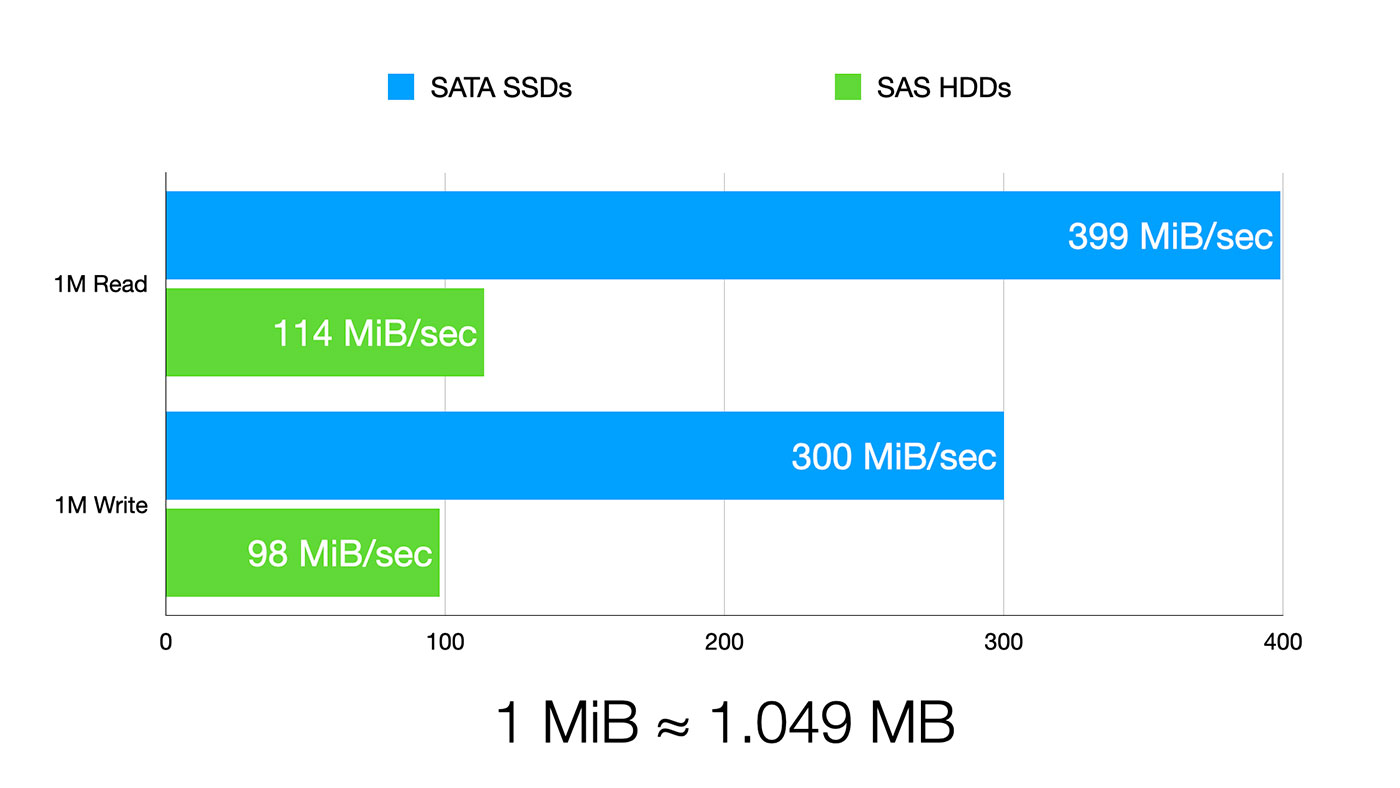

For 1 MB random reads, I got:

- 399 MiB/sec on the SSD array

- 114 MiB/sec on the SAS array

For 1 MB random writes, I got:

- 300 MiB/sec on the SSD array

- 98 MiB/sec on the SAS drives

Note: 1 MiB = 1.048576 MB (roughly 1.05 MB)

These results show two things:

First, even cheap SSDs are still faster than spinning SAS drives. No real surprise there.

Second, the limit to the SSD speed is the Pi's PCIe bus. I'm able to get 3.35 Gbps of bandwidth, and that's actually better than the bandwidth I could pump through an ASUS 10G network adapter, which could only get up to 3.27 gigabits.
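The 3.35 Gbps figure follows directly from the 399 MiB/sec SSD read result. Here is the conversion as a short sketch (the function names are just for illustration):

```python
BYTES_PER_MIB = 1024 ** 2


def mib_s_to_mb_s(mib_per_s: float) -> float:
    """Convert MiB/sec (2^20 bytes) to MB/sec (10^6 bytes)."""
    return mib_per_s * BYTES_PER_MIB / 1e6


def mib_s_to_gbit_s(mib_per_s: float) -> float:
    """Convert MiB/sec to gigabits per second (10^9 bits)."""
    return mib_per_s * BYTES_PER_MIB * 8 / 1e9


ssd_read_mib_s = 399.0  # 1 MB random read result on the SSD array
print(f"{ssd_read_mib_s:.0f} MiB/s = {mib_s_to_mb_s(ssd_read_mib_s):.1f} MB/s")
print(f"{ssd_read_mib_s:.0f} MiB/s = {mib_s_to_gbit_s(ssd_read_mib_s):.2f} Gbps")
```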

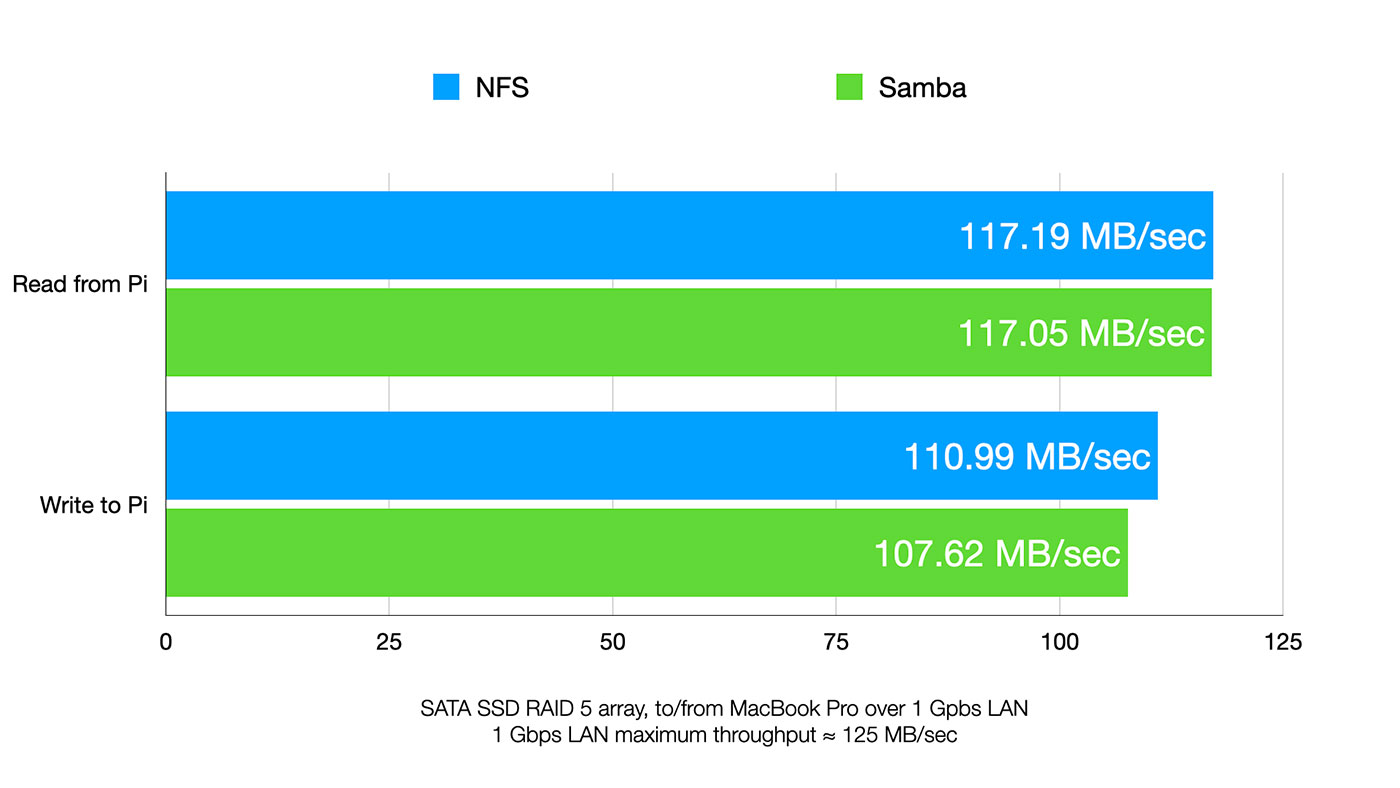

There are tons of other tests I could do, but I wanted to see how the drives performed for network storage, so I installed Samba and NFS and ran more benchmarks.

I was amazed both Samba and NFS got almost wire speed for reads, meaning the Pi was able to feed data to my Mac as fast as the gigabit interface could pump it through.

If you remember from my SATA RAID video, the fastest I could get with NFS was 106 MB/sec, and that speed fluctuated when packets were queued up while the Pi was busy sorting out software RAID.

With the storage controller handling the RAID, the Pi stayed at a solid 117 MB/sec continuously, during 10+ GB file copies.
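For context on how close that is to the limit, here is a rough sketch of what gigabit Ethernet can carry. It assumes a standard 1500-byte MTU and TCP over IPv4 without options (1460 payload bytes per 1538 bytes on the wire, counting preamble, headers, checksum, and inter-frame gap), and it treats the MB/sec figures above as decimal megabytes.

```python
LINE_RATE_MB_S = 1_000_000_000 / 8 / 1e6  # 1 Gbps expressed in MB/s = 125.0

# Per-frame accounting for a full-size frame (assumes MTU 1500, IPv4, TCP, no options).
TCP_PAYLOAD_BYTES = 1460   # 1500 MTU - 20 IP header - 20 TCP header
WIRE_BYTES = 1538          # 1500 + 14 Ethernet header + 4 FCS + 8 preamble + 12 gap

max_tcp_goodput = LINE_RATE_MB_S * TCP_PAYLOAD_BYTES / WIRE_BYTES


def share_of(measured_mb_s: float, limit_mb_s: float) -> float:
    """Measured throughput as a percentage of a limit."""
    return measured_mb_s / limit_mb_s * 100.0


print(f"Raw line rate:       {LINE_RATE_MB_S:.1f} MB/s")
print(f"Max TCP payload:     {max_tcp_goodput:.1f} MB/s")
for label, speed in (("Software RAID NFS", 106.0), ("Hardware RAID NFS", 117.0)):
    print(f"{label}: {speed:.0f} MB/s = {share_of(speed, max_tcp_goodput):.1f}% of max payload")
```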

PCIe Switches and 2.5 Gbps Networking

What about 2.5 GbE networking? I actually have two PCIe switches, and a few different 2.5 Gbps NICs I've already successfully used with the Pi. So I tried using both switches and a few NICs, and unfortunately I couldn't get enough power through the switch for both the NIC and the power-hungry storage controller.

I even pulled out my 600W PC PSU, but accidentally fried it when I tried shorting the PS_ON pin to power it up. Oops.

Watch me zap the PSU (and luckily, not myself) here.

So 2.5 Gbps performance will, unfortunately, have to wait until I get a new power supply.

Conclusion

Finally, I have true hardware SAS RAID running on the Raspberry Pi. Josh was actually first to do it, though, on 32-bit Pi OS.

The driver we were testing is still pre-release, though. If you run out and buy a MegaRAID controller today, you'll have some trouble getting it working on the Pi until the changes make it into the public driver.

Am I going to recommend you buy this $1000 HBA for a homemade Pi-based NAS? Maybe not. But there is a lower-end version you can get, the 9440-8i. Still has all the high-end features, and it's less than $200 used on eBay (Dell server pulls, mostly).

Even that might be overkill, though, if you just want to build a cheap NAS and only use SATA drives. I'll be covering more inexpensive NAS options soon, so subscribe to this blog's RSS feed, or subscribe to my YouTube channel to follow along!

Comments

Just a quick question, is the card setup in IT or IR mode?

The card is set up as it came from the factory, in normal Hardware RAID mode (not IT mode). The RAID volumes were being managed entirely on the chip, and individual drives were not being passed through to the Pi at all (just the created volumes).

Hi,

would you mind sharing your fio command line so we can compare our results with the same settings? Thanks a lot!

Cheers,

Uli

Sure thing! I've added the tests to this GitHub issue for the card: https://github.com/geerlingguy/raspberry-pi-pcie-devices/issues/72#issuecomment-786789848.

Thanks a lot - but... knowing many copy-and-paste or git clone people are out there... I think you should change the target from /dev/sda to /dev/sdX....:-) just for precaution:-)

[Target0]

filename=/dev/sda

numjobs=1

flow_id=0

Cheers

Uli

Can you give us more info about the disk enclosure that you used in this video?

Looks really nice

This is a modified backplane (built specifically to demonstrate the U.2/U.3 SATA/SAS/NVMe storage options for this card) following the SFF-TA-1005 standard. But the chassis itself seems to be based on a SerialCables.com 8 port SAS Direct Attached JBOD device, which is now discontinued.

Thanks!

This was a great video Jeff, and answers my previous questions about multiple drives being connected to the pi. Thanks to the person who sent you the card also!

Thank you for sharing .

You've answered my year-long question about whether a Pi with PCIe can do this or not. Last December I gave up on a Pi 4B with a 5-bay SATA USB 3.1 enclosure, 6 TB rotational SATA disks, and ZFS, because the disk performance was unacceptable. At first I thought that was because of USB. Now I have the answer.

Wait to see 2.5GbE and what about 10GbE?

> My Intel i3 desktop running by itself with no RAID card or storage attached idles at 25W but usually hovers around 40W—twice the power consumption, without a storage card installed.

25W seems a bit high for an i3 with nothing attached... I just took a look at one of my more minimal systems that happens to have a Kill-a-watt on it (GIGABYTE GA-Z270N-WIFI - i7-7700 - Samsung SSD 960 EVO 1TB - Corsair SF600 PSU) and found it idles at around 12 watts with normal loads around 17 watts.

Could be HP's poor engineering then :P — the computer isn't the best in the world, but it's been reliable at least. Fast enough to play Halo MCC on Steam!

Huh, interesting! Wish there were more comprehensive real-world PC power consumption benchmarks available. Seems like we usually just get these one-off data-points...

Anyway, thanks for indulging my curiosity and - in general - writing this blog!

(Please delete this comment if it's not helpful)

Hi there Jeff! If you're using fio to generate stats any chance you can include the fio version and the job command line you used alongside future stat reports? It makes it useful for other people reproducing your results (and most overlook https://github.com/axboe/fio/blob/master/MORAL-LICENSE )...

fio commands used here: https://github.com/geerlingguy/raspberry-pi-pcie-devices/issues/72#issuecomment-786789848

The version was... whatever was installed on Raspberry Pi OS from the default repo; don't have it for reference right now though.

I'm actually starting to use a new benchmark script that should give me more reproducible/accurate results, too: https://github.com/geerlingguy/raspberry-pi-dramble/blob/master/setup/benchmarks/disk-benchmark.sh.

This is an awesome post and video.

Do you think it is possible to use a hardware raid configuration like this to act as a central storage unit for a Pi cluster (and maybe utilizing more of the PCIe lanes with the cluster)? It would be really intriguing to watch you configuring it.

Have you heard about the Helios64? It's an ARM powered OSS NAS: kobol.io

I have the predecessor Helios4. It runs at about 30 W idle with 4 10TB SATA HDDs. Performance of the RAID10 array formatted with ext4 is about 300 MB/s random write and 450 MB/s random read (measured with iozone3).

How much RAM do you recommend for the Pi for this setup?

Would older MegaRAID boards (such as the 9440-8i) need the same driver compilation, or is it just the newer controller that you used which needed that?

At least 4 GB (that's what I had on the Pis I was testing with). 8 GB of RAM would be ideal though.

Any MegaRAID board with tri-mode support should work, but would still need the custom driver for now.

Hi Jeff,

Great tutorial, very useful.

Do you think this driver you compiled works with non RAID IT firm HBA like LSI 9400-8i as well?

Thank you in advance for your comment.

It seems like it has to support tri-mode

So I don’t think so…

I was interested in using a higher end board that has SFP+ to run an external SAS HBA with my EMC enclosures. Each EMC enclosure idles at around 30w empty because of the fans, and 50w filled when the drives are spun down (15 drives).

Was hoping I could get some sort of ARM setup to replace my x86 server with the hopes of cutting about 50-60w of idle usage.

But I just don’t think ARM is going to be the way to do this on a budget in the next 5 years.

I really want to see this done from a budget perspective with 2000mbit or better of transfer speed (at least 200MB/s or better). I get 600-800MByte transfer speeds on my x86 setup to my main PC with SFP+ but would be happy with the equivalent of a 2.5g connection.

Just doesn’t seem like I’m gonna be able to do it on a budget. No point in spending thousands to save $50/yr in electricity.