The Raspberry Pi Compute Module 4 eschews the Pi 4's built-in USB 3.0 controller and instead exposes a single (x1) PCI Express lane.

The slightly older Raspberry Pi 4 model B could be hacked to get access to the PCIe lane (sacrificing the VL805 USB 3.0 controller chip in the process), but it was a bit of a delicate operation and only a few daring souls tried it.

Watch this video for more detail about my experience using these GPUs on the CM4:

GPUs on a Raspberry Pi Compute Module 4!

Now that the CM4's IO Board has an x1 slot, it's trivial to plug in any PCI Express card and test its functionality with the Raspberry Pi. And test I have! I'm detailing all the cards I've tested, and the results, on my Raspberry Pi PCIe device compatibility database, and you can look at the linked issues to learn more about each card.

GPUs and the Raspberry Pi

The Pi's BCM2711 SoC includes a VideoCore 6 GPU capable of features like H.265 4Kp60 decode, H.264 1080p60 decode, and 1080p30 encode. It also supports OpenGL ES 3.0, and it's not a terrible little GPU for what it costs.

But many people have wanted to know whether you could use an Nvidia or AMD GPU with a Pi, and I have tried to answer that question.

And so far, the answer seems to be 'no.'

Partly it's due to the BCM2711 ARM SoC's lack of support for I/O BAR space, and partly it may be due to driver bugs or features that are only supported on x86 or certain ARM architectures.

I have tried the following drivers, and all of them failed to initialize the card fully in one way or another:

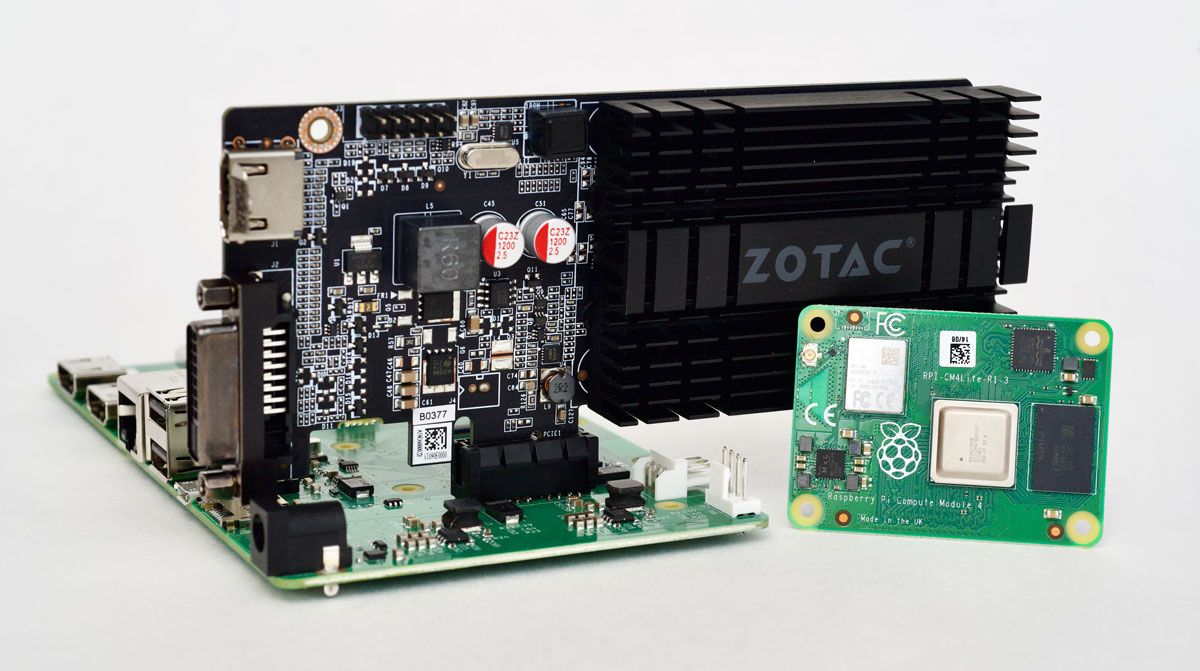

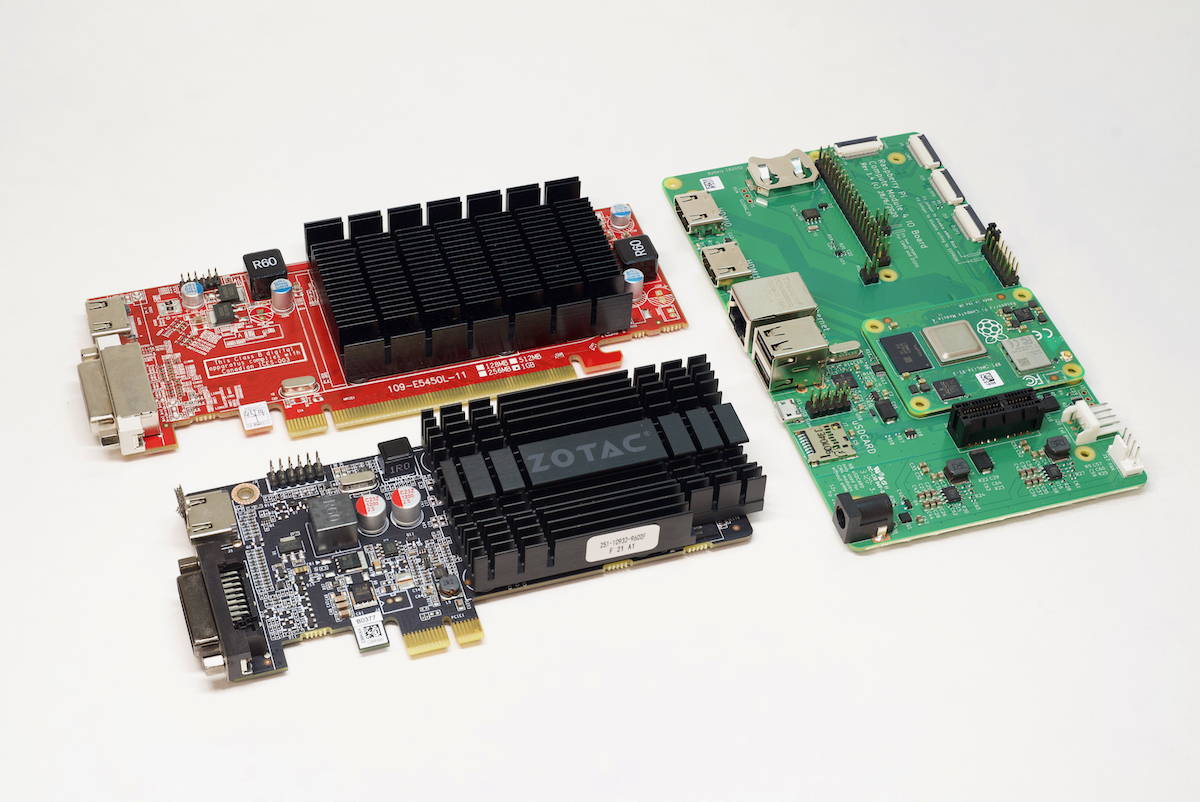

For the Zotac Nvidia GeForce GT 710:

- Nouveau open source driver (you have to recompile the Raspberry Pi OS kernel to enable it)

- Nvidia 32-bit ARM driver

- Nvidia 64-bit ARM driver

For the VisionTek AMD Radeon 5450:

- Radeon open source driver (you have to recompile the Raspberry Pi OS kernel to enable it)

- AMDGPU open source driver, which (as I found out after trying it) doesn't support a card as old as this one!

Just getting the hardware plugged in might be your first challenge, as many (in fact, almost all) GPUs have edge connectors that won't fit in the IO Board's x1 PCIe slot. You could hack off the bit in the middle to make the card physically fit, and it would work, but luckily there are x1 to x16 adapters that allow larger cards to plug into the x1 slot (though without the added lanes of bandwidth).

Most cards work fine with fewer lanes available; they just don't get as much bandwidth between the GPU and the CPU.
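For a rough sense of what "not as much bandwidth" means: the CM4's PCIe link runs at Gen 2 speed, which is 5 GT/s per lane with 8b/10b encoding. The Python sketch below multiplies the per-lane transfer rate by the lane count and the encoding efficiency. It ignores packet and protocol overhead, so treat the numbers as upper bounds rather than what a card will actually see.

```python
# Approximate one-direction PCIe bandwidth from generation and lane count.
# Ignores TLP/DLLP packet overhead, so real-world throughput is lower.

# Per-lane raw rate in megatransfers per second (one bit per transfer per lane)
RATE_MT_S = {1: 2500, 2: 5000, 3: 8000}

# Line encoding as (payload bits, total bits): 8b/10b for Gen 1-2, 128b/130b for Gen 3
ENCODING = {1: (8, 10), 2: (8, 10), 3: (128, 130)}


def link_bandwidth_mb_s(gen: int, lanes: int) -> float:
    """Return approximate usable bandwidth in MB/s (10^6 bytes/s), one direction."""
    payload, total = ENCODING[gen]
    megabits = RATE_MT_S[gen] * lanes * payload / total
    return megabits / 8  # bits to bytes


if __name__ == "__main__":
    for gen, lanes in [(2, 1), (2, 16), (3, 16)]:
        print(f"Gen {gen} x{lanes}: ~{link_bandwidth_mb_s(gen, lanes):.0f} MB/s")
```

Gen 2 x1 works out to about 500 MB/s each way. That is a sixteenth of what the same card would get in a Gen 2 x16 slot.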

Both cards were recognized appropriately when I ran lspci, so at least there was a glimmer of hope:

```
# Nvidia GeForce GT 710
01:00.0 VGA compatible controller: NVIDIA Corporation GK208 [GeForce GT 710B] (rev a1) (prog-if 00 [VGA controller])
    Subsystem: ZOTAC International (MCO) Ltd. GK208B [GeForce GT 710]
    Flags: fast devsel
    Memory at 600000000 (32-bit, non-prefetchable) [disabled] [size=16M]
    Memory at <unassigned> (64-bit, prefetchable) [disabled]
    Memory at <unassigned> (64-bit, prefetchable) [disabled]
    I/O ports at <unassigned> [disabled]
    [virtual] Expansion ROM at 601000000 [disabled] [size=512K]
    Capabilities: [60] Power Management version 3
    Capabilities: [68] MSI: Enable- Count=1/1 Maskable- 64bit+
    Capabilities: [78] Express Legacy Endpoint, MSI 00
    Capabilities: [100] Virtual Channel
    Capabilities: [128] Power Budgeting <?>
    Capabilities: [600] Vendor Specific Information: ID=0001 Rev=1 Len=024 <?>

# AMD Radeon 5450
01:00.0 VGA compatible controller: Advanced Micro Devices, Inc. [AMD/ATI] Cedar [Radeon HD 5000/6000/7350/8350 Series] (prog-if 00 [VGA controller])
    Subsystem: VISIONTEK Cedar [Radeon HD 5000/6000/7350/8350 Series]
    Flags: fast devsel, IRQ 255
    Memory at <unassigned> (64-bit, prefetchable) [disabled]
    Memory at 600000000 (64-bit, non-prefetchable) [disabled] [size=128K]
    I/O ports at <unassigned> [disabled]
    [virtual] Expansion ROM at 600020000 [disabled] [size=128K]
    Capabilities: [50] Power Management version 3
    Capabilities: [58] Express Legacy Endpoint, MSI 00
    Capabilities: [a0] MSI: Enable- Count=1/1 Maskable- 64bit+
    Capabilities: [100] Vendor Specific Information: ID=0001 Rev=1 Len=010 <?>
    Capabilities: [150] Advanced Error Reporting
```
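If you're comparing a lot of cards, scanning these listings by eye for unassigned or disabled regions gets old fast. Here's a small, hypothetical Python helper (not something I used for this post) that pulls the "Memory at" and "I/O ports at" lines out of `lspci -v` style output. It only understands the line shapes shown above and skips expansion ROM lines.

```python
from __future__ import annotations

import re

# Matches BAR lines such as:
#   Memory at 600000000 (32-bit, non-prefetchable) [disabled] [size=16M]
#   I/O ports at <unassigned> [disabled]
BAR_RE = re.compile(
    r"^\s*(?P<kind>Memory|I/O ports) at (?P<addr><unassigned>|[0-9a-f]+)"
    r"(?: \((?P<attrs>[^)]*)\))?"
    r"(?P<disabled> \[disabled\])?"
    r"(?: \[size=(?P<size>\d+)(?P<unit>[KMG]?)\])?"
)
UNITS = {"": 1, "K": 1 << 10, "M": 1 << 20, "G": 1 << 30}


def parse_bars(lspci_text: str) -> list[dict]:
    """Return one dict per BAR line found in lspci -v style text."""
    bars = []
    for line in lspci_text.splitlines():
        m = BAR_RE.match(line)
        if not m:
            continue  # header, capability, or expansion ROM line
        size = m["size"]
        bars.append({
            "kind": m["kind"],
            "assigned": m["addr"] != "<unassigned>",
            "disabled": m["disabled"] is not None,
            "attrs": m["attrs"] or "",
            "size": int(size) * UNITS[m["unit"]] if size else None,
        })
    return bars
```

For example, save the output of `lspci -v` to a file and pass its contents to `parse_bars()`. Every entry with `assigned` set to `False` is a region that hasn't been given an address.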

BAR space woes

PCI Express devices require BAR ('Base Address Register') space to initialize and map their memory into the computer's own address space, and the Pi currently reserves 64 MB for this purpose.

That's fine for a simple device like the VL805 USB 3.0 controller used in the regular Pi 4, and for things like NVMe drives and SATA adapters. But GPUs require a lot more BAR space, so after a bit of research, and with some help from a couple of engineers on the Pi Forums, I documented the process for expanding the BAR space on the Pi to 1 GB.

The Nvidia GPU was happy with just 256 MB of RAM available, but the AMD Radeon wanted 1 GB. This also means that the lowest-end 1 GB Compute Module 4 might not be adequate for certain applications when you pair it with PCIe devices.
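BARs can't be packed arbitrarily. Each one is a power-of-two size and must be naturally aligned (a 128 MB BAR has to start on a 128 MB boundary), so a card's largest BAR alone sets a floor on how big the window must be. Here's a simplified Python sketch of that placement, assuming a single flat window and hypothetical BAR sizes. A real allocator, like the kernel's, also keeps prefetchable and non-prefetchable space and bridge windows separate, so treat this as an illustration only.

```python
from __future__ import annotations

# Simplified model of assigning PCIe memory BARs inside one address window.
# Assumption: a single flat window; the real kernel allocator also separates
# prefetchable/non-prefetchable space and accounts for bridge windows.


def is_power_of_two(n: int) -> bool:
    return n > 0 and n & (n - 1) == 0


def pack_bars(sizes: list[int], window: int) -> dict[int, int] | None:
    """Place BARs largest-first at naturally aligned offsets.

    Returns {bar_index: offset_in_window}, or None if they don't all fit.
    """
    placement: dict[int, int] = {}
    offset = 0
    for index, size in sorted(enumerate(sizes), key=lambda item: -item[1]):
        if not is_power_of_two(size):
            raise ValueError(f"BAR {index} size {size:#x} is not a power of two")
        offset = (offset + size - 1) & ~(size - 1)  # round up to the BAR's alignment
        if offset + size > window:
            return None
        placement[index] = offset
        offset += size
    return placement


MiB = 1 << 20
# Hypothetical BAR sizes for illustration only (not measured from either card)
example = [16 * MiB, 128 * MiB, 32 * MiB]
print(pack_bars(example, 64 * MiB))   # None: the 128 MiB BAR alone exceeds the window
print(pack_bars(example, 256 * MiB))  # fits: 128 MiB at 0, then 32 MiB, then 16 MiB
```

Placing the largest BAR first means every smaller one lands on a boundary that already satisfies its alignment, so this toy model never wastes space between regions.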

Driver woes

Once I got past the BAR space issue, and dmesg showed that all the MEM space was allocated correctly (though IO space was not, since the BCM2711 doesn't provide any I/O space for PCIe devices), I started trying out all the drivers I could find:

- The Nouveau open source driver for Nvidia cards had to be compiled into a custom Pi kernel; neither Pi OS nor Ubuntu for Pi includes it by default (to save space in their images). Once I got it compiled, the Pi completely locked up during boot if I had the card inserted. No serial output, nothing.

- The Nvidia ARM32 driver's kernel module failed to compile with a ton of errors; I'm not sure this driver would install on any ARM device currently.

- The Nvidia ARM64 driver compiled and loaded, but during card initialization it output "RmInitAdapter failed!" and I couldn't get past that.

- The Radeon open source driver also needs to be compiled, and it almost initialized the card, but complained that it was "Unable to find PCI I/O BAR". Oh well.
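Besides reading dmesg, you can check from userspace which regions got memory space and which got I/O space. Linux exposes each device's regions in `/sys/bus/pci/devices/<address>/resource`, with one "start end flags" line (in hex) per region. This Python sketch classifies those lines using the `IORESOURCE_IO` (0x100), `IORESOURCE_MEM` (0x200), and `IORESOURCE_PREFETCH` (0x2000) flag bits from the kernel's `include/linux/ioport.h`. The device address in the comment is just an example.

```python
from __future__ import annotations

# Classify PCI regions from a sysfs "resource" file, e.g.
# /sys/bus/pci/devices/0000:01:00.0/resource (example address for a card at 01:00.0)
IORESOURCE_IO = 0x00000100
IORESOURCE_MEM = 0x00000200
IORESOURCE_PREFETCH = 0x00002000


def classify_resources(text: str) -> list[tuple[int, str, int]]:
    """Return (region_index, kind, size_in_bytes) for each I/O or memory region."""
    regions = []
    for index, line in enumerate(text.splitlines()):
        if not line.strip():
            continue
        start, end, flags = (int(field, 16) for field in line.split())
        if flags & IORESOURCE_IO:
            kind = "io"
        elif flags & IORESOURCE_MEM:
            kind = "mem-prefetch" if flags & IORESOURCE_PREFETCH else "mem"
        else:
            continue  # unused slot (all zeros) or another resource type
        regions.append((index, kind, end - start + 1))
    return regions
```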

You can follow my entire journey with these cards in these two issues:

What about CUDA?

I thought that maybe I could still use CUDA on the Nvidia card even though the GPU wouldn't initialize, output a signal over HDMI, or work with Xorg... but alas, it seems the CUDA tools require the card to initialize the same way as the graphics bits, so I got the same RmInitAdapter failed! message in dmesg.
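When you're rebuilding kernels and rebooting over and over, it helps to check quickly whether either of the failures above showed up again. Here's a minimal Python sketch that searches saved dmesg output for the two messages quoted in this post. It's not an exhaustive list of driver errors, and the driver labels are my own.

```python
from __future__ import annotations

# Failure strings quoted in this post; not an exhaustive list of driver errors.
KNOWN_FAILURES = {
    "nvidia": "RmInitAdapter failed!",
    "radeon": "Unable to find PCI I/O BAR",
}


def find_gpu_failures(dmesg_text: str) -> dict[str, list[str]]:
    """Map each driver label to the dmesg lines containing its known failure string."""
    hits: dict[str, list[str]] = {}
    for line in dmesg_text.splitlines():
        for label, needle in KNOWN_FAILURES.items():
            if needle in line:
                hits.setdefault(label, []).append(line.strip())
    return hits
```

Feed it the text from `dmesg`. Reading the log may require root, depending on your system's `kernel.dmesg_restrict` setting.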

It also turns out the 710 might not be compatible with CUDA 11: it wasn't listed on the GPU compatibility page (even though the 705, 720, and 730 were), despite being advertised as having 192 CUDA cores. Ah well.

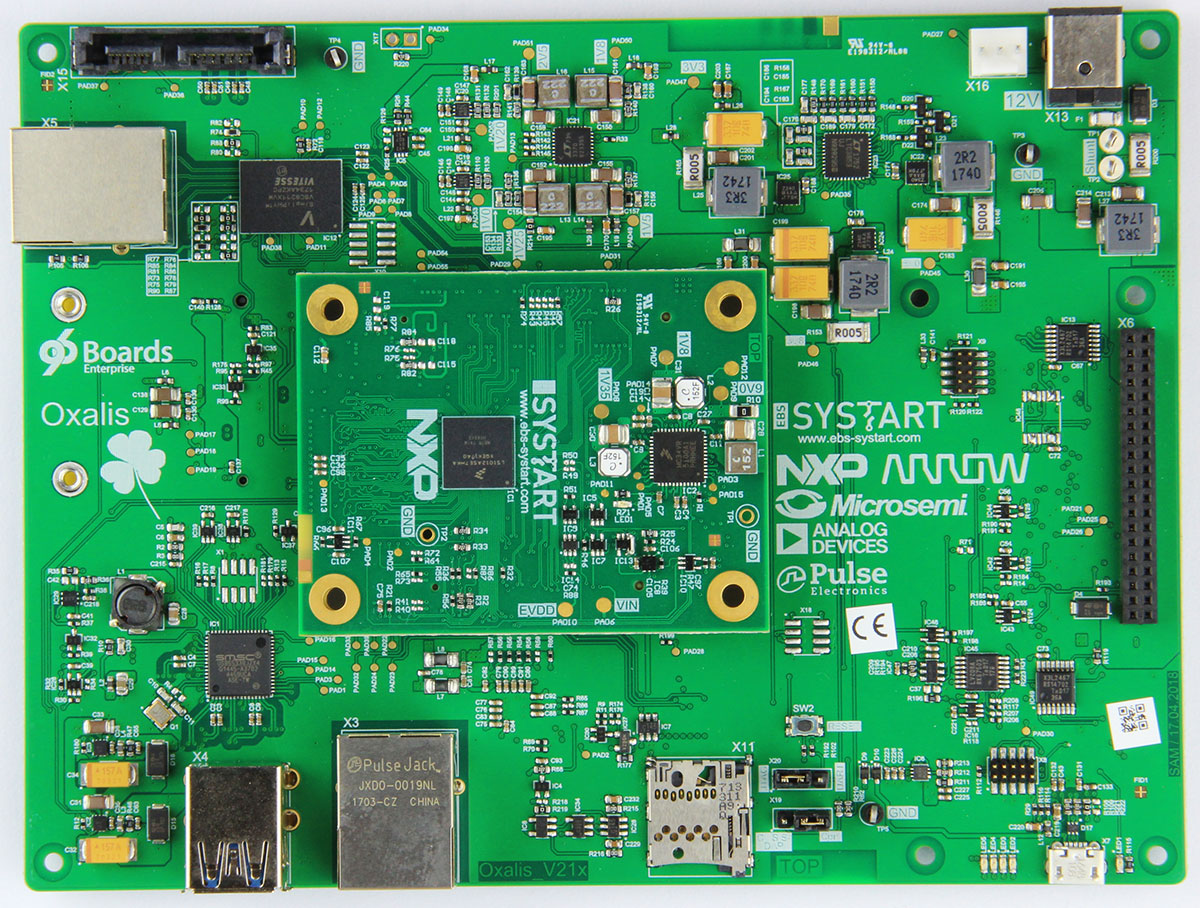

Other ARM attempts

It looks like the closest anyone's gotten to using a GPU with a readily-available ARM board is @sahajsarup, and in this livestream with 96Boards, he demoed getting console output over HDMI through the 96Boards Oxalis hardware. That board costs over $400, though, so it's hardly in the same price range as a Raspberry Pi!

And before the Compute Module 4 was released, a couple of people hacked out the VL805 chip and routed the PCIe bus through an external riser, most notably @domipheus, who got about as far along as I did and seemed to also hit a wall.

Besides that, it looks like there were similar troubles in the land of the ROCKPro64, as tllim mentions:

I don't think NVidia card can work on ROCKPro64, this due to graphic card needs large IO Memory range.

As TL Lim is the founder of Pine64, I'd say his opinion is pretty solid.

Next Steps

Every time I post a story of failure or conjecture, there are immediately a number of suggestions for follow-up, and if I tried them all... well, I'd never be able to get anything else done.

But a few things did stand out, and when I get time I may try them:

- Using an externally-powered riser to see whether supplying the GPU with power that doesn't come through the Pi's own PCIe circuit helps at all. (I have a riser, but don't have the power adapter to get it going yet.)

- Trying out Windows on Raspberry. I don't have high hopes for it to work, but this would be a good excuse to at least take it for a spin.

- Trying to see if there's another way to work around the IO BAR space limitations. According to some people I've spoken with, they have gotten these cards working on other ARM devices, but they're always mum about which devices specifically (I'm guessing maybe things like Graviton at AWS, which they probably can't discuss in detail?).

- Testing a slightly newer card, like the AMD Radeon RX 550, which doesn't have a BIOS (it uses UEFI) and has an overall newer architecture supported by the amdgpu driver.

Anyways, this was quite an adventure, and in the end, even though I didn't get a GPU to output a video signal from the Pi, I did learn a lot about PCIe, the Linux kernel, and the Raspberry Pi Compute Module 4.

Be sure to check out my video GPUs on a Raspberry Pi Compute Module 4!, and my website where I'm documenting all the PCIe cards I'm testing with the CM4: Raspberry Pi PCIe device compatibility database.

Comments

What about a 1x amd card:

HIS 6450 Silence 2GB DDR3 PCIe x1 DP/DVI/VGA

Right now I'm going to try out an RX 550, which seems like it may be able to work... fingers crossed!

I've seen your video. I know that the GT 1030 exists with DVI-D and HDMI, or DP and HDMI; maybe it could be without an I/O BAR? 🤔

I just ordered a Radeon RX 550, which may work better (according to some sources around AMD...). We shall see!

Any updates on the rx550 attempt?

Still working on it. Currently working on setting up an external GDB debugger to step through the driver code since something locks up the Pi completely during the doorbell init.

Hey Jeff, first off, great work man, keep it up. On the RX 550, any updates on this? And are you testing with the 2GB or the 4GB version?

Still working on it ;)

2GB version, and I am currently debugging the amdgpu driver in the kernel, but had to pause to catch up in a few other areas.

Thank you for the quick answer man. Please do post it here first once you accomplish it. I have faith in you :D.

Hi Jeff,

Great work getting things this far. Is this something you are still working on? Would love to see where you get to with this.

Yes; I have another update video recorded, and will hopefully be releasing it in the next week or two. Still no joy, but there are signs of hope!

Waiting waiting 🤩🤩

Been a year; have you got anywhere yet? I think you said in your latest video that you had been busy with that DDoS attack (good video, that), and hadn't had time to try to get a GPU working yet.

I will have an update by the end of April! Been working on it this month...

1) https://envytools.readthedocs.io/en/latest/hw/bus/bars.html maybe could be helpful?

2) Having seen your video, I don't know if I/O BARs are only on VGA. Anyway, the Nvidia GT 1030 has two configs, DVI-D + HDMI or DP + HDMI; maybe that could be useful?

Can you try something like an H200E? If it works, you could connect the Pi to a disk shelf like an MD1200 or any iscs shelf.

What about the Rock Pi x?

It uses x86 architecture.

It's interesting how you test the limits, while others concerned about the limitations 💪🔥🌱

I am going to try https://www.phoronix.com/scan.php?page=article&item=asus-50-gpu&num=1

on https://www.khadas.com/vim3

I have M.2 to PCIE with power connector so I hope that I will have enough power :)

Good luck with raspberry pi

And feel free to make bug report on raspberry pi https://github.com/raspberrypi/firmware/issues

I think some people in RPI foundation will push it

Very interesting that the BAR space is configurable on the Broadcrap SoC.

You can get around the I/O BAR issue on radeon: in your kernel source, go to drivers/gpu/drm/radeon/radeon_device.c, remove these lines:

https://github.com/torvalds/linux/blob/598a597636f8618a0520fd3ccefedaed…

(in radeon_device_init() the /* io port mapping */ section)

This is a silly check left over there; you can see another place in that file says DRM_ERROR("Unable to find PCI I/O BAR; using MMIO for ATOM IIO\n"); so the cards are perfectly fine without the I/O BAR. In the FreeBSD port of the driver, these lines are just ifdef'd out, sooooo yeah :) And of course there is no such mistake in the amdgpu kernel driver, so indeed modern Radeons likely would just work.
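The commenter's argument boils down to a fallback. If there's no I/O BAR, the ATOM BIOS interpreter's indirect I/O can go through MMIO instead, so a missing I/O BAR shouldn't be fatal. Here's a toy Python model of that decision, with hypothetical names. It is not the radeon driver's actual code, just the shape of the logic as described above.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class Bar:
    """Hypothetical stand-in for one PCI BAR: its index, type, and size."""
    index: int
    is_io: bool
    size: int


def choose_atom_iio_path(bars: list[Bar]) -> tuple[str, int | None]:
    """Prefer the first usable I/O BAR; otherwise fall back to MMIO instead of failing."""
    for bar in bars:
        if bar.is_io and bar.size > 0:
            return ("io", bar.index)
    return ("mmio", None)  # warn and continue, rather than treating this as fatal
```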

BTW the hardware I have is the SolidRun MACCHIATObin, well under $400, PCIe gen2 x4, comes with an already open-ended slot.

And you really should use EDK2 TianoCore UEFI firmware with it, not the stock U-Boot it comes with. It does even emulate x86 for the GOP driver which allows for early graphics output in the firmware screen, before you load the driver. My firmware build is at https://unrelentingtech.s3.dualstack.eu-west-1.amazonaws.com/flash-imag… in case anyone needs it. The controller on here, despite some quirks, does support ECAM and is exposed in ACPI, which means generic ACPI operating systems run, without any device tree crap. And PCIe I/O port access is converted to MMIO internally as expected, I/O BARs just work, as they do on all server grade ARM hardware.

Thanks, I'll give it a try!

really stupid idea, what about the FreeBSD image? Just a thought.

Did you try changing the driver code to issue a warning / info only instead of error?

DRM_INFO

Wondering if you got this working, so cool.

Hi, great work with the Pi! I am considering moving to the Raspberry Pi Compute Module 4 (on a Compute Module 4 IO Board) for my kit product for my church.

I have only one concern: will it work with my current capture board, the B102 HDMI to CSI-2 Bridge (22 pin FPC) from Auvidea? The data sheet does not explicitly say that the CSI/DSI is backward compatible with the RPi 4.

How does the Nvidia Jetson handle the IO BAR space?

https://developer.nvidia.com/buy-jetson?product=jetson_nano&location=US

The Jetson doesn't support external GPUs on its PCIe slot, so I'd guess however it's hooked up is specified manually in a DTB or something similar.

There's a video showing Fedora on Armada hardware with a FireStream 9250 video card.

Have you had a look at https://www.youtube.com/watch?v=i7WJsZYWtPw

This other video also claims ARM + NVidia GPU

I'm running an AMD Radeon Pro WX 2100 with a SolidRun HoneyComb LX2K. There are a few GPU quirks we use to get AMD cards working, such as limiting the bus bandwidth (a parameter on the GRUB linux line) and patching "linux-firmware" drivers.

I have a RockPro64 as well I am playing with but the general consensus is AMD cards have the best chance of working, and are the only ones with 3D acceleration working, not just display out. Also, nothing newer than GCN 5th Gen, and preferably not so old that you'd hit legacy ATI drivers.

Solidrun discord may help out

Hi Jeff.

Jetson Nano uses an ARM64 processor and a Maxwell architecture GPU. Do you think it would be possible to run a 900 series NVIDIA GPU with Pi4? Maybe with NVIDIA's embedded drivers for jetson

Hi Jeff,

I came across the IBM GXT145 PCI-e 1x graphics card jobbie on fleabay, and since you are trying hard, maybe it's worth a shot. They sell for as low as $50 USD. It's for IBM Power ISA servers. It looks like it's built with a Matrox (G550?) GPU, which sits behind a TI PCI-e to PCI bridge on-board. These old Matrox GPUs are not a powerhouse, but they have mainline Linux kernel support. Maybe it's enough to put a smile on Eben Upton's face. If it works, we have a good starting point and at least some feeling of accomplishment. Though I'm a bit concerned that its video BIOS would only run on Power or maybe x86. The other one I came across is Vortex86's VortexVGA, but it looks to be unobtainium.

For Nouveau, you might want to give void-linux a shot. It has it compiled for ARM, although I believe it's not tested. You'll have to use void-mklive to make the ISO, since there is no preconfigured one yet.

Use a GeForce graphics card and install Windows on your Pi, then you can download the Nvidia driver.

Ah right, so let's just download one of the many ARM drivers that Nvidia provides for Windows....