tl;dr: This website is currently being hosted off-grid, on a cluster of Raspberry Pis, via 4G LTE—or at some points through the same tunnel via WiFi if signal strength gets too low. Here's the GitHub repo for the project.

Note: The website was down for a few hours this morning, as shortly after this post I started getting a 40-50 Mbps flood of POST requests (over 6 million in a 30 minute time frame)... and yeah, no way the little Pi cluster could handle that. Thanks, Internet. It's back up through Cloudflare now, and I'll post more on this 'fun' experience later.

A couple weeks ago, after months of preparation, I took my 4-node Turing Pi 2 cluster (see my earlier review) to my cousin's farm, and ran this website (JeffGeerling.com) on it, live on the Internet—completely disconnected from grid power or hard-wired Internet.

Right now this website is running on the same cluster, using the same configuration, but at my house (I couldn't leave the cluster at my cousin's farm indefinitely—the cows would get it!)

But what's special about my cluster? Well, my goal at the outset was to build an energy efficient Pi cluster that I could take literally anywhere (within reason), and continue hosting this website.

I automated the entire cluster setup in my Turing Pi 2 Cluster repository on GitHub.

I can't relate the whole story in this post—for that, watch the video:

Full Disclosure: The YouTube video I made for this project was sponsored by EcoFlow.

In this post, I'll give a quick rundown of the major problems I encountered, and my solutions.

Networking

4G Connectivity: After finding a hardware bug with the prototype board (caused by the resistor pictured above), I used a Quectel EC25-A 4G modem and a SixFab SIM card to get connected to the Internet.

I wrote up an entire post about the process: Using 4G LTE wireless modems on a Raspberry Pi.

I could get connected to the Internet, but in doing so, I encountered another new problem:

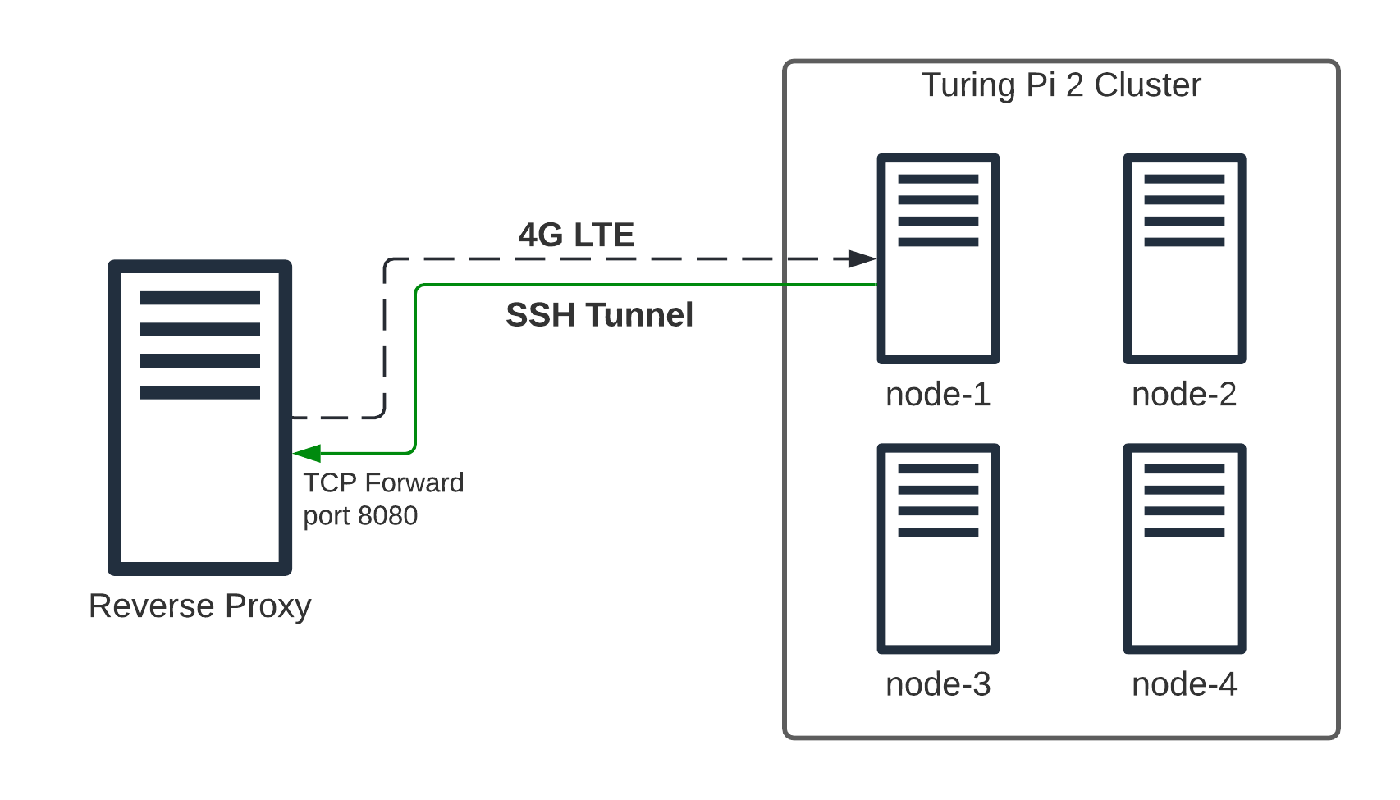

CG-NAT: Because I couldn't get a publicly-addressable IPv4 address (and IPv6 support across different carriers isn't reliable), I had to set up an SSH tunnel between the cluster and a VPS under my control, which I configured as a reverse proxy (using Nginx).

I wrote up a post on that, too: SSH and HTTP to a Raspberry Pi behind CG-NAT.
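As a rough illustration of the tunnel idea, here is a minimal Python sketch that only assembles an OpenSSH reverse-forward command. It is not the configuration from the repository: the host `vps.example.com`, the user `tunnel`, and both port numbers are placeholders I made up for the example.

```python
import shlex


def reverse_tunnel_command(vps_host, vps_user, remote_port, local_port=80):
    """Build an OpenSSH command that exposes a local port on a remote VPS.

    Every argument value used below is an illustrative placeholder.
    """
    return [
        "ssh",
        "-N",                                # forward ports only, run no remote command
        "-o", "ServerAliveInterval=30",      # send a keepalive every 30 seconds
        "-o", "ServerAliveCountMax=3",       # drop the link after 3 missed keepalives
        "-o", "ExitOnForwardFailure=yes",    # exit if the forward cannot be established
        # Listen on remote_port on the VPS and forward to local_port on the Pi.
        "-R", f"{remote_port}:localhost:{local_port}",
        f"{vps_user}@{vps_host}",
    ]


cmd = reverse_tunnel_command("vps.example.com", "tunnel", 8080)
print(shlex.join(cmd))
```

With a forward like this, a reverse proxy on the VPS would pass requests to `127.0.0.1:8080`, since OpenSSH binds remote forwards to the loopback address by default.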

All these things are great for a single Raspberry Pi, but my Turing Pi 2 cluster is made up of four Pis, all together in a Kubernetes cluster.

Not wanting to compromise on the flexibility a 4-node cluster gives me, I decided to set up the first node (the one with the 4G modem) as a lightweight router.

This was doubly necessary as Kubernetes was very unhappy when the control plane and all the nodes would change IP addresses as I swapped the cluster between various networks (LAN at my house, LAN at a friend's house, a separate WiFi network, a 4G hotspot, etc.).

Because getting Kubernetes running on top of OpenWRT is a pain, I instead set up dnsmasq and iptables to bridge the first node's connection to the rest of the cluster through the Turing Pi 2's built-in network, on a subnet separate from my LAN.

Finally, I needed a way to switch between Ethernet, WiFi, and USB (4G) interfaces, so I learned how to do that using network route priorities (metrics) in dhcpcd in Debian... and of course I wrote up that process: Network interface routing priority on a Raspberry Pi.
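The rule behind route metrics is simple: when several default routes exist, the one with the lowest metric wins. The sketch below models just that selection logic. The interface names and metric values are hypothetical and do not reflect the priorities actually configured on the cluster.

```python
from dataclasses import dataclass


@dataclass
class Interface:
    name: str
    metric: int     # lower metric means higher priority
    has_link: bool  # True if the interface currently offers a default route


def pick_default_route(interfaces):
    """Return the usable interface with the lowest metric, or None."""
    usable = [iface for iface in interfaces if iface.has_link]
    return min(usable, key=lambda iface: iface.metric, default=None)


# Hypothetical setup: Ethernet is unplugged, so WiFi beats the 4G modem.
interfaces = [
    Interface("eth0", metric=100, has_link=False),
    Interface("wlan0", metric=200, has_link=True),
    Interface("wwan0", metric=300, has_link=True),
]
chosen = pick_default_route(interfaces)
print(chosen.name if chosen else "no route")
```

Swapping the metrics of `wlan0` and `wwan0` in this toy model would make the 4G modem the preferred path whenever it is up.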

There are a few other tidbits I did in the Turing Pi 2 Cluster Ansible playbook, but those were the major challenges.

Storage

Everyone knows how unreliable microSD cards are for longevity, especially under random-write-heavy workloads (even the best ones).

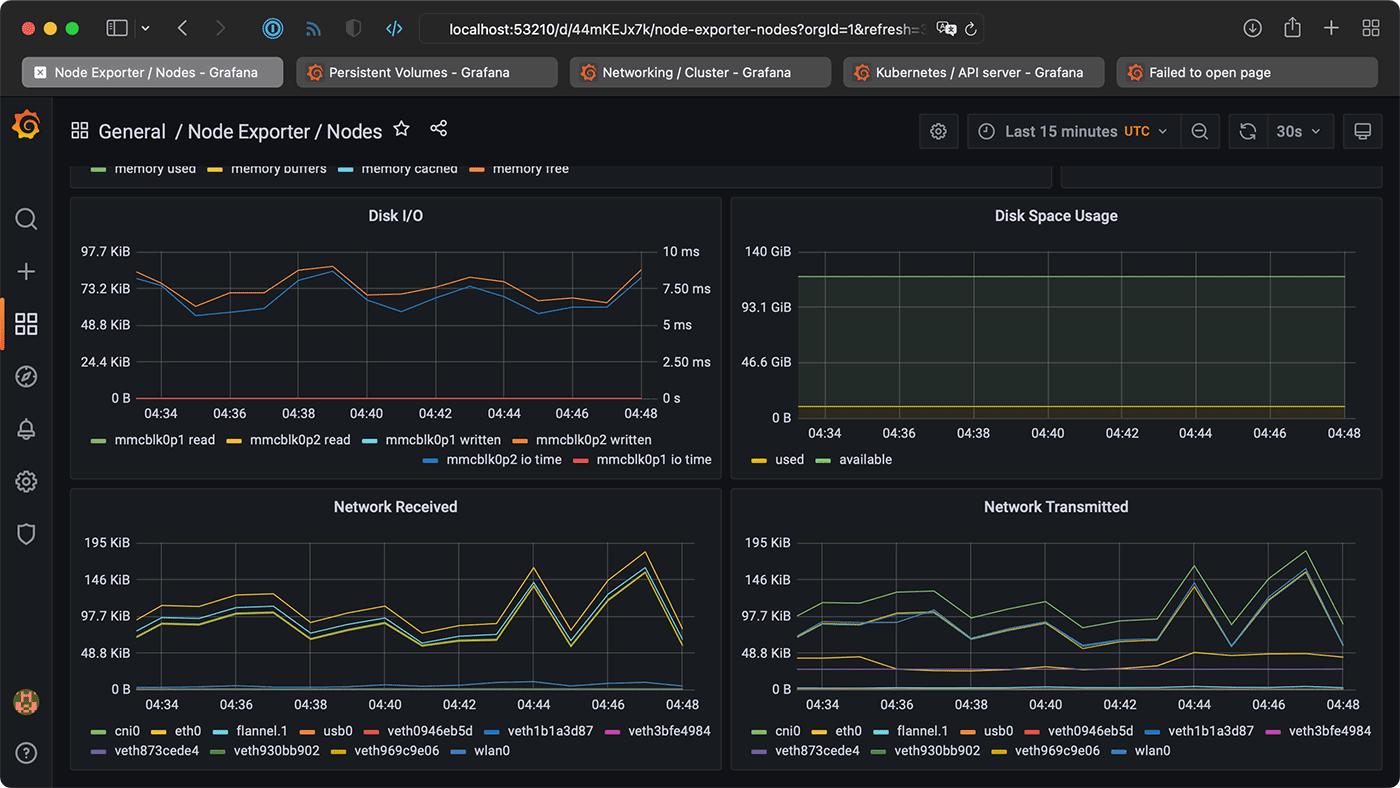

And seeing as I'd be monitoring the cluster with Prometheus and Grafana, plus running my site's MariaDB database on the Pis, storing everything on microSD (or even eMMC) storage was a non-starter.

Luckily, the Turing Pi 2 includes two SATA III connections directly attached to node 3. So I set up a mirrored ZFS zpool on two Crucial MX500 SSDs and configured an NFS share, and I wrote this up in my ZFS for Raspberry Pi post.

In Kubernetes, I set up the nfs-client provisioner so I could have Drupal and MariaDB store their persistent data on NFS volumes.

The storage situation isn't perfect: now nodes 1 (for network routing) and 3 (for storage) are both critical to the functioning of the cluster, and are both Single Points of Failure (SPOF).

Ideally I'd have fast storage on each node, and I'd create a distributed filesystem with Rook/Ceph or GlusterFS... but I can't have everything on a $75 Raspberry Pi (I'm running the 8GB Lite versions with WiFi + Bluetooth).

Power

As I mentioned earlier, one of my main goals was to create a completely portable system that could be run anywhere—grid connection or not. And for power, I'm plugging the cluster into an EcoFlow Delta Max portable power station, with 1,612 Watt-hours of battery capacity.

I contacted EcoFlow on a whim after seeing Matthias Wandel try overloading one of their 'Pro' units last year, and they were happy to assist on this project—even throwing in sponsorship so I could devote more time and budget to making the video at the top of the post!

They sent the Delta Max—which I've tested as being able to run the cluster (at about 17W average consumption) for up to 42 hours—along with two 400W solar panels.

These panels are pretty massive (each one weighs 12.5 kg or about 30 lbs), but they live up to the marketing—I was able to pull down 460W using just one in full sunlight, and was hitting 700-800W power input on the Delta Max when I had them connected in series. That's plenty of juice to keep the battery topped off so I can power the cluster indefinitely.
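To put "plenty of juice" in numbers, here is a back-of-the-envelope energy budget using figures from this post: about 17 W average draw and roughly 700 W of solar input. It is a simplified sketch that ignores inverter, charging, and conversion losses, so real-world figures would be less favorable.

```python
def daily_energy_wh(avg_watts, hours=24.0):
    """Energy used over a period, in watt-hours."""
    return avg_watts * hours


def sun_hours_needed(energy_wh, solar_input_watts):
    """Hours of solar input needed to replace energy_wh (losses ignored)."""
    return energy_wh / solar_input_watts


cluster_watts = 17   # average cluster consumption quoted in this post
solar_watts = 700    # low end of the two-panel input quoted in this post

used = daily_energy_wh(cluster_watts)
hours = sun_hours_needed(used, solar_watts)
print(f"{used:.0f} Wh per day, about {hours * 60:.0f} minutes of full sun to replace it")
```

Under those idealized assumptions, a day of cluster runtime costs about 408 Wh, which the panels could replace in well under an hour of full sunlight.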

It was a little awkward to carry everything while plugged in, but I was able to transfer the battery and cluster from my car to the solar panels (to top off their charge), then inside, and back to my car—and the cluster remained online the entire time.

Physical Protection

Finally, to make sure I could transport the Turing Pi 2 cluster safely, I racked it up in a 2U Mini ITX server enclosure made by MyElectronics.nl, then mounted that inside this SKB 2U soft rack case.

And here it sits—this picture was taken before I turned in for the night on February 8, a few hours before this blog post is set to go live on the 9th:

Conclusion

There are some things I still want to improve. As noted in this issue, I'd love to build a more automated 'switch between VPS and remote cluster' setup—though the hardest part of that is pulling down or pushing up the database, and rsync'ing the 1.2 GB or so of files I have on this website.

Even with a stable 4G connection, I can sometimes only get a few megabits of upload bandwidth, and that means to do the 'failover' correctly, I could either miss some comments or other site data during the long process, or I'd have to have my site offline for a long (to me) period of time, in the range of minutes. (Not fun for someone who prides himself on having 99.998% uptime since 2009!)
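A quick estimate shows why the file sync is the slow part. This sketch assumes a full copy of the 1.2 GB of files, decimal gigabytes, and a steady upload rate; the 3 and 5 Mbps values are example rates I picked, not measurements. An incremental rsync run would normally move far less data than this worst case.

```python
def transfer_seconds(size_gb, upload_mbps):
    """Seconds to send size_gb (decimal GB) at upload_mbps (megabits/s)."""
    bits = size_gb * 8 * 1000**3
    return bits / (upload_mbps * 1000**2)


# Example upload rates only; real 4G throughput varies a lot.
for mbps in (3, 5):
    minutes = transfer_seconds(1.2, mbps) / 60
    print(f"{mbps} Mbps: about {minutes:.0f} minutes")
```

At these rates a full copy lands somewhere around half an hour to an hour, before counting the database dump and restore.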

As it is, I'm happy with how this all turned out. Things have been running quite stably:

I have, multiple times, hard-power-cycled the cluster, booted it with either 4G or WiFi routing, and it always connects back through its tunnel to my VPS, and always resumes serving web traffic.

There was one time CoreDNS stopped working—of course it's DNS—but a quick reboot fixed that, and it's been surprisingly reliable since.

Of course, if this post gets any traction on HN, Reddit, or elsewhere... that could change 😅.

Comments

Just adding a comment to refresh your nginx cache

Ha! It's still up! :)

Test comment.

Commenting just to keep the testing going. Cool setup!

Lets refresh this!!!

This all seems familiar. I've a Turing Pi (version1), on a farm with 4G signal (8 times faster than DSL here) and a DO VPS too. Not quite as crazy setup as yours, but does run off solar for some of the day.

Keep up the good work, and hope you don't get charged too much data for me making this comment.

Heh, no problem. Since it's just the comment form and a POST request, it's only a few KB. So far for the entire morning, it's still less than 1MB of data!

Disappointed it's not using Starlink for connectivity :( Maybe one day it will be cheap enough and reliable enough to use Starlink in remote locations.

That's my hope—especially if they can get together a more mobile solution geared towards RVs/boats/mobile use! I'm not holding my breath though... it will take time, and there are many hurdles to solve too.

Another comment to get through to the Pis

Well done!

Hey, what are you doing here Red Shirt Jeff!?

Adding a comment to test cache.

Cool Beans!! (just trying to eat your LTE data :P)

We all are here just because we can be

Refresh!

Still works? Lol

Very nice

Cool stuff! Very well done.

Off to the farm this message goes :)

Boink - this is a test comment ;).

Sad reality - but I have to do this in my own home..

Great job Jeff!

I like the idea of my comment being stored on such unique web server =)

Instead of using autossh for the tunnel to your VPS, WireGuard could be used.

Awesome!

Nicely done! I don't think I've seen a video from you covering Nebula (from Slack), could be a nice alternative to the SSH tunnel, though it does require some additional infrastructure.

Pretty quick (accessed from London)

Isn't all this a bit wasteful? It's not doing something 'good'

Very impressive. One of those "just because you can, doesn't mean you should" - but an impressive demo of what can be done. As you say, a Pi cluster isn't the best performance wise, but you can't argue too much at being able to run off batteries for 2 days (and solar rechargeable ones at that).

Love your content, first time visiting your website, couldn't resist at this point! I've done some similar toying around, but never over LTE! Always have a soft spot for a project where the answer to the "Why" is "Why not?".

In the Netherlands some carriers have the option to change some APN settings, after which they will hand out a static (public) IP

Huh, how would you even access this? Through your login? Here in the US all you can access online is payment info!

I'm not sure on a USB modem... but a Hotspot (preferably with ethernet) you can login to the device and change some settings like APN...

Amazing job! Keep up the great work!

Hi Jeff, thanks for your inspiration and all the cool projects you show. Since I followed your PI Firewall project with the DF Robot Dual NIC, my firewall setup works so much better! At this point many thanks from Germany.

you should try to get an updating meter on the webpage stating the battery percentage and wattage in and out. would be cool stats

Testing latency to db

Is it still running Jeff? I just watched the video! Very impressive sir!!

Hey. I just want this packet to hit the server on the farm because it's kinda cool to think about how this request is making its journey all the way to your farm server.

This is cool!

Keep up the good work Jeff!

Bravo! I hope one day to do something similar to this AND buy a small farm! Thank you for all the education and inspiration. Writing this from Fort Worth, Texas

Try the same set up but work with a ham radio operator to send and receive data through amateur radio. In a true disaster, you won’t have 4G LTE.

This is awesome!

What happens if the ssh connection crashes? Do you have that accounted for? If the connection crashes you have no way to control the remote system and no way to even reboot it.

Hi Jeff! Awesome video!

It's absolutely great to watch what you're doing. Keep going! :)

So this comment and website is stored on a off the grid raspberry pi that's rlly cool

I'm just commenting so my traffic goes through the farm. :) Very nice project, enjoyed the YouTube video.

ping

pong

pong

Another comment just so I can say I definitely hit the actual Pi cluster. All the cool kids are doing it.

Really enjoyed watching this. I know the reason why is why not.

Fun project though. How long are you going to host it this way?

Is the digital ocean server not just a fail over if the pi cluster is not found?

i need to refresh your nginx cache.

this is epic

test comment. (testing after that 40-50 mbps flood)

This is cool.

Tho I am not sure how you got it running on 4G LTE. Don't those things run under CG-NAT with a shared IP address on each tower?

impressive

🇧🇳

where's the blog post of racking up the pi cluster?

It's a video, and it's here: https://www.youtube.com/watch?v=RijuRF0ITdE :)

so you don't have a blog post for it yet? (usually you have a blog post and a video for everything)

amazing

you are now "green shirt jeff"...

so if i comment i make the site 0.00000000001 slower?

Can we game through this setup?

Can you create an article about how you set up NGinx?

I have built my own apache server, struggling with ssl at the moment, surely it'll work soon. I hope to get a pi soon

tesst

Hello!

still works?