I've been working a bit with Red Hat lately, and one of the products that has intrigued me is their OpenShift Kubernetes platform; it's kind of like Kubernetes, but made to be more palatable and UI-driven... at least that's my initial take after taking it for a spin both using Minishift (which works with OpenShift 3.x), and CRC (which works with OpenShift 4.x).

Because it took me a bit of time to figure out a few details in testing things with OpenShift 4.1 and CRC, I thought I'd write up a blog post detailing my learning process. It might help someone else who wants to get things going locally!

CRC System Requirements

First things first, you need a decent workstation to run OpenShift 4. The minimum requirements are 4 vCPUs, 8 GB RAM, and 35 GB disk space. And even with that, I constantly saw HyperKit (the VM backend CRC uses) consuming 100-200% CPU and 12+ GB of RAM (sheesh!).
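As a quick sanity check before installing, the minimums above can be expressed as a small shell test. This is only a sketch: the helper name meets_crc_minimums is hypothetical (it is not part of crc), and the commented commands for gathering the numbers assume macOS.

```shell
# Sketch: compare a machine's resources against the minimums quoted above
# (4 vCPUs, 8 GB RAM, 35 GB disk). meets_crc_minimums is a hypothetical
# helper name, not something crc provides.
meets_crc_minimums() {
  cpus="$1"     # number of CPU cores
  mem_gb="$2"   # RAM in whole GB
  disk_gb="$3"  # free disk space in whole GB
  [ "$cpus" -ge 4 ] && [ "$mem_gb" -ge 8 ] && [ "$disk_gb" -ge 35 ]
}

# Example on macOS (assumed host; adjust the commands for other systems):
#   cpus=$(sysctl -n hw.ncpu)
#   mem_gb=$(( $(sysctl -n hw.memsize) / 1073741824 ))
#   disk_gb=$(df -g "$HOME" | awk 'NR==2 {print $4}')
#   meets_crc_minimums "$cpus" "$mem_gb" "$disk_gb" && echo "OK" || echo "Too small"
```

Passing the bare minimums only means crc should start; as noted above, real usage can climb well past them.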

Installing CRC

Before you can use CRC, you must have a Red Hat Developer account. Sign up on the Red Hat Developers Registration site. You can then visit the CRC install page on cloud.redhat.com to view the CRC Getting Started Guide, download the platform-specific crc binary, and copy your 'pull secret' (which is required during setup).

After you download the crc binary and place it somewhere in your $PATH, you have to run the following commands:

$ crc setup

$ crc start

crc setup creates a ~/.crc directory, and crc start will prompt you for your pull secret (which you must get from your account on cloud.redhat.com, at the bottom of the CRC Installer page).
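If the interactive prompt gets in the way, a commenter below reports that crc start also accepts the pull secret as a file via -p. The following is a minimal sketch of that flow; the helper name start_crc and the example file location are hypothetical placeholders, not anything CRC defines.

```shell
# Sketch: run crc setup, then start the cluster with a pull secret file.
# start_crc is a hypothetical helper name; 'crc start -p <file>' is the
# form a commenter reports using.
start_crc() {
  secret_file="$1"
  # Refuse to continue if the pull secret file is missing or unreadable.
  if [ ! -r "$secret_file" ]; then
    echo "pull secret file not readable: $secret_file" >&2
    return 1
  fi
  crc setup && crc start -p "$secret_file"
}

# Example (the path is an assumption; use wherever you saved the secret):
#   start_crc "$HOME/pull-secret.txt"
```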

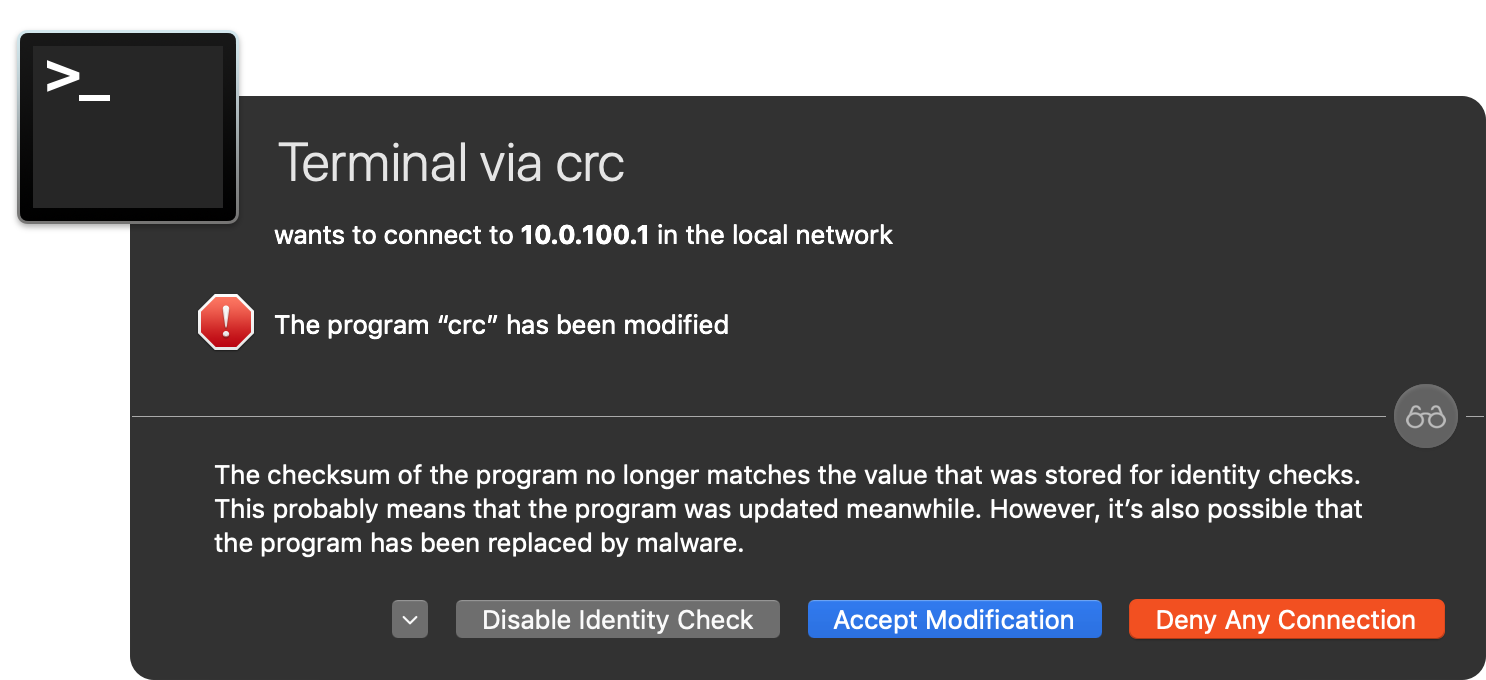

Note that during crc setup, I had a big scary warning pop up on my Mac about the binary code signature being changed (this is from a malware detection system built into Little Snitch):

I clicked 'Accept Modification', as the modification is part of CRC's install process... but hopefully that's something that could be avoided in future versions of CRC!

After a few minutes, crc start prints out some cluster information, like:

INFO Starting OpenShift cluster ... [waiting 3m]

INFO To access the cluster using 'oc', run 'eval $(crc oc-env) && oc login -u kubeadmin -p [redacted] https://api.crc.testing:6443'

INFO Access the OpenShift web-console here: https://console-openshift-console.apps-crc.testing

INFO Login to the console with user: kubeadmin, password: [redacted]

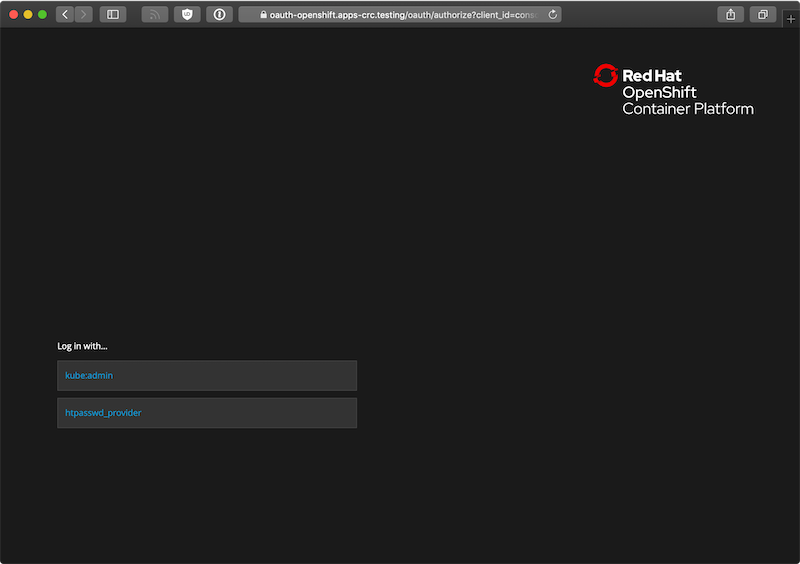

CodeReady Containers instance is running

If you open the web console (https://console-openshift-console.apps-crc.testing), you'll need to accept the self-signed certificate... then you'll be redirected to another local URL, and you'll need to accept another self-signed certificate. But once that's done you should arrive at a login screen:

Click on the kube:admin option, then log in using the credentials output by crc start. Note that if you get an error the first time you click on the kube:admin option, try again in a minute or two; the cluster still might be initializing.

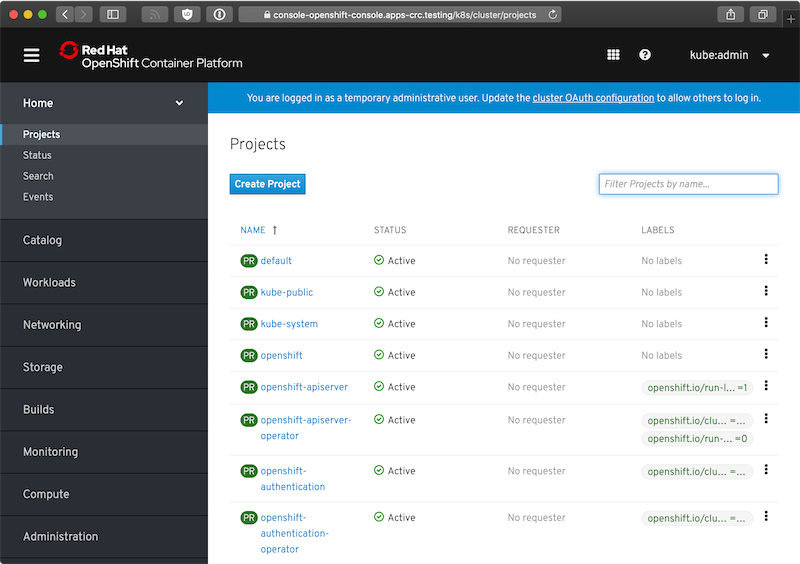

Now you should arrive at the OpenShift dashboard:

Running an application on OpenShift locally

One nice thing about OpenShift is that you can manage most everything via the UI, if you desire (assuming the user you are logged in as has the RBAC rights to do so); you can also inspect all the raw resources (either in OpenShift's layout or as YAML in an in-browser editor) pretty easily.

Since I fancy myself a PHP developer, I decided to deploy an example CakePHP application (never used CakePHP before, though):

- I created a project with name php-test and display name 'PHP Test'.

- On the Project Status page, I chose to browse the application catalog to find something to deploy.

- I scrolled down to PHP, clicked it, and chose 'Create Application'.

- I named it php-test, left all the defaults (except for checking the 'create route' checkbox to create a public URL), and clicked the 'Try Sample' button to test out the example repo (which happens to be a demo CakePHP app).

- I waited.

This application takes some time to come up: OpenShift has to pull the base image, run a container to pull the source, run another container to build the project (using PHP's package manager, Composer), and finally run the final container so the PHP test Pod is ready to start responding to HTTP requests.

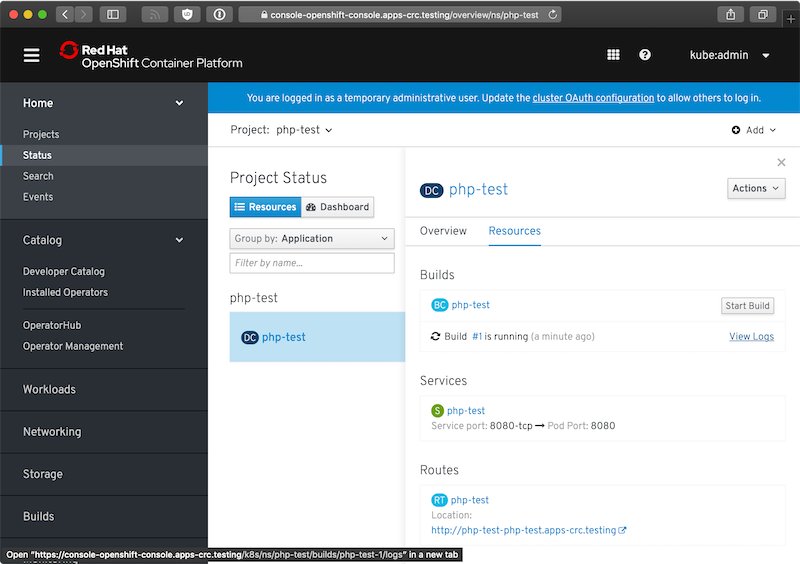

You can monitor the progress of the build by clicking on the DeploymentConfig (DC) 'php-test', and then inspecting the 'Resources':

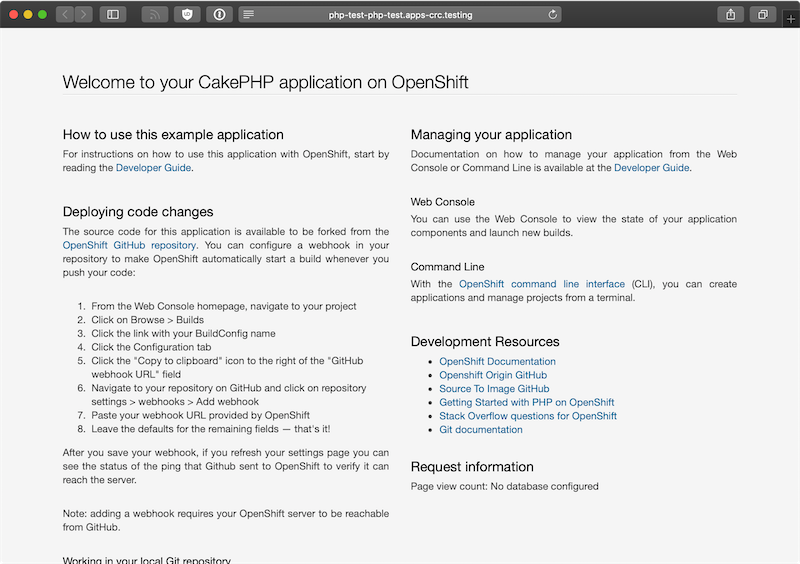

It took a few minutes for the build process to complete (there's a lot to download, apparently!), but once that was done, I could visit http://php-test-php-test.apps-crc.testing/ and see the running application:

It's interesting, I've never seen 'Routes' in K8s nomenclature (I'm used to 'Ingress'), but it seems to be about the same, just with some extra decoration for some OpenShift-specific features. I could also manually add a Kubernetes Ingress resource that allowed me to direct a domain (e.g. http://php.test/) to the php-test service running in the php-test namespace.
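For illustration, an Ingress along those lines might look like the manifest printed by the sketch below. Treat all of it as assumptions rather than something verified on CRC in this post: the helper name print_ingress is hypothetical, port 8080 is a guess at the sample service's port, and networking.k8s.io/v1 is only served by newer clusters than the 4.1-era one used here (which would need an older beta apiVersion).

```shell
# Sketch: print an Ingress manifest that routes a hostname to a Service.
# print_ingress is a hypothetical helper; the apiVersion assumes a cluster
# new enough to serve networking.k8s.io/v1.
print_ingress() {
  host="$1"; service="$2"; namespace="$3"; port="$4"
  cat <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ${service}
  namespace: ${namespace}
spec:
  rules:
    - host: ${host}
      http:
        paths:
          - path: /
            pathType: Prefix
            backend:
              service:
                name: ${service}
                port:
                  number: ${port}
EOF
}

# Example (8080 is an assumed service port; check it with 'oc get svc'):
#   print_ingress php.test php-test php-test 8080 | oc apply -f -
```

The hostname (php.test here) would still need to resolve to the cluster, for example through an /etc/hosts entry pointing at the CRC VM's IP.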

Managing the cluster

After you're finished, you can run crc stop to stop the cluster, and crc delete to completely delete it. crc start then generates a new cluster (or restarts a stopped cluster).

Getting the CRC VM IP address

I wanted to get the IP address of the HyperKit VM, too, so I could access exposed services (either by NodePort or Ingress via DNS), and there are three quick ways to do that:

- In the OpenShift UI, go to Compute > Nodes, and look at the 'Internal IP' under 'Node Addresses'.

- Run crc ip.

- Ping the console URL (e.g. ping console-openshift-console.apps-crc.testing).
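The second and third options are easy to script. As a rough sketch, the helper below (first_ipv4 is a hypothetical name, not part of crc) pulls the first IPv4 address out of whatever text it is given, such as ping output:

```shell
# Sketch: print the first IPv4-looking address found on standard input.
# first_ipv4 is a hypothetical helper; it does not validate octet ranges.
first_ipv4() {
  grep -Eo '([0-9]{1,3}\.){3}[0-9]{1,3}' | head -n 1
}

# Examples:
#   crc ip        # prints the VM IP directly, no parsing needed
#   ping -c 1 console-openshift-console.apps-crc.testing | first_ipv4
```

With the IP in a variable, it can be reused for NodePort URLs or for a hosts-file entry.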

Comments

If you want to explore the RHCOS Linux system that CRC's OpenShift 4.1.11 is running on:

ssh -i ~/.crc/cache/crc_libvirt_4.1.11/id_rsa_crc [email protected]

sudo -i

Thanks. That failed in my case, but then I tried the following and it worked:

```
ssh -i ~/.crc/machines/crc/id_rsa core@$(./crc ip)
```

Thanks, this worked.

Thanks for this writeup.

I installed CRC in a CentOS VM on ESXi. Do you know how I can make a project accessible from outside of the VM?

I have seen these IPs:

192.168.0.30 IP CentOS VM

192.168.130.11 IP CodeReady Containers VM

172.30.161.x Cluster IP

10.128.1.x POD IPs

Thanks!

If you add a NodePort you should be able to access the service behind it at 192.168.130.11:[NodePort-here]

How did you run crc setup on a Linux VM? I keep getting an error that the virtualization flag is not set in the BIOS.

You need to enable VT-x (virtualization) in the BIOS. If you are using a VM, then you need to enable nested virtualization. You can check how to enable it in the documentation of the virtualization software you are using. I have run into multiple errors when using nested virtualization, so I would suggest installing directly on a laptop.

Set up HAProxy and add a second init to your dnsmasq.d that points to your host for the wildcard domains. That's what I'm doing.

Could you elaborate on how you configured HAProxy? I am also trying to set up CRC on a CentOS server and access it remotely using HAProxy. Unfortunately, I always get "Connection closed by foreign host" when I telnet from the command line, or a "503 Service Unavailable" when I try to access it through the browser.

Do you know whether it is possible to log in to the CRC VM? If it is possible, which credentials should be used for the login?

Today I tried to install CRC on my upgraded Mac running Catalina (10.15.1), and I'm running into a dialog that blocks me from running crc setup.

I opened up the following issue in the CRC issue queue: Can't run crc setup on macOS Catalina - "the developer cannot be verified".

In the Finder, you must right-click each binary that does this (I also saw this with Kubernetes) and choose Open, so that it opens in Terminal... you then wait for the first run of the binary to complete, and then you will have authorized it to run. Then you can do crc setup.

Do you know how to allow the developer user to log in to the CRC console? After I installed it, everything seemed OK. The kubeadmin user can log in to the console, but the user developer with its default password "developer" cannot. In OCP 3.x, I don't remember my user being unable to log in to the console; there were just some restrictions shown if the user was not an admin user in a project.

I have installed and setup CRC OpenShift 4 cluster on RHEL.

I can see 51 projects from the "oc projects" command.

I am unable to open the console "https://console-openshift-console.apps-crc.testing/".

I get the error "This site can’t be reached".

Please help.

TQ

After setting up the CRC OCP 4 cluster on RHEL, I am unable to open the console. I can run oc commands successfully but can't seem to open the console.

I get the error "This site can’t be reached".

Windows 10 + Windows Subsystem for Linux + Ubuntu distro. I installed CRC, and when I run "crc setup" it fails, thinking virtualization is not available. I've verified that BIOS virtualization and Hyper-V are enabled. When I run "lscpu", I see my virtualization is set to "container". What is "crc setup" looking at to determine whether virtualization is available? I'm out of ideas, and would appreciate any suggestions.

I would open Hyper-V Manager and make sure the crc VM is not there. Then delete your .crc directory completely. Next, run crc setup; once that is done, run crc start -p C:\Users\jim\crc-windows-1.4.0-amd64\pull-secret.txt -m 9192 -n 8.8.8.8 (you will need to change the path from C:\Users\jim\crc-windows-1.4.0-amd64\pull-secret.txt to wherever you stored your pull-secret file). I hope that helps.

Jim, I just wanted to post a big thank you for listing this entire start command for crc because it really solved a problem for me. I was getting the following error after running "crc start":

****start output*****

$ crc start

level=info msg="Checking if oc binary is cached"

level=info msg="Checking if podman remote binary is cached"

level=info msg="Checking if running as normal user"

level=info msg="Checking Windows 10 release"

level=info msg="Checking if Hyper-V is installed and operational"

level=info msg="Checking if user is a member of the Hyper-V Administrators group"

level=info msg="Checking if Hyper-V service is enabled"

level=info msg="Checking if the Hyper-V virtual switch exist"

level=info msg="Found Virtual Switch to use: crc"

? Image pull secret [? for help]

level=error msg="Incorrect function."

level=error msg="Failed to get pull secret: Incorrect function."

***end output****

I ran your command on Windows 10, specifying the pull-secret file on the filesystem, and it ran great. In hindsight, the instructions kept telling me it would prompt for the secret, but it seems like on my system it did not. Specified and ready to go. Now I am on to the next error...

level=info msg="Checking if oc binary is cached"

level=info msg="Checking if podman remote binary is cached"

level=info msg="Checking if running as normal user"

level=info msg="Checking Windows 10 release"

level=info msg="Checking if Hyper-V is installed and operational"

level=info msg="Checking if user is a member of the Hyper-V Administrators group"

level=info msg="Checking if Hyper-V service is enabled"

level=info msg="Checking if the Hyper-V virtual switch exist"

level=info msg="Found Virtual Switch to use: crc"

level=info msg="Extracting bundle: crc_hyperv_4.3.8.crcbundle ..."

level=info msg="Checking size of the disk image C:\\Users\\spartansage\\.crc\\cache\\crc_hyperv_4.3.8\\crc.vhdx ..."

level=info msg="Creating CodeReady Containers VM for OpenShift 4.3.8..."

level=error msg="Error creating host: Error creating the VM: Error creating machine: Error in driver during machine creation: open /Users/spartansage/.crc/cache/crc_hyperv_4.3.8/crc.vhdx: The system cannot find the path specified."

Not sure why it is using that path, since it should be /c/Users/.... but moving on.

Thank you again.

OpenShift CRC is garbage. The console is very slow, almost useless: most of the pages show nothing unless you refresh them a few times. Even if you use oc to deploy something, the console cannot even display logs: after 10 minutes it says 404 Not Found. Complete garbage, and you are wasting your time trying it.

What OS are you using, how much memory do you have, and what CPU are you using?

It sounds like you were trying to use it on a slow computer without enough memory.

I don't know what computer the other guy is using, but I'm using Fedora 38 with 32 GB of RAM and an i7-1270P, and every now and then crc stops with CPU usage at 300%; crc stop does not work, even crc status is stuck, and the fastest way out of it is rebooting the PC.

I want to learn OpenShift, but if this is the way, then I may shift to another platform for my work.

So are you saying that CRC cannot be run in a CentOS 7 VM under VMware Fusion on a MacBook Pro?

That would most likely not work; you'd probably need to run it directly on the MacBook Pro (though I haven't tried inside a VMware VM).

How can I add a new user to CRC (other than kube:admin and developer)? It uses htpasswd as the identity provider. To add a user and set a password, I must have local access to the VM, but I can't SSH to the VM. No access details are available anywhere in the documentation. Any help is much appreciated.

Hi Jeff, thanks for all the information. I hope you can help me solve this: I don't have a laptop or any other machine that meets the hardware requirements to build a CRC cluster. So I got a GCP virtual instance (I'm using a custom image, with nested virtualization), and there I can set up crc and create and start my cluster. I can also use oc commands to log in and perform several actions inside the cluster. However, I'm not able to access the GUI console. The GCP firewall allows access on port 6443. I also added port 6443 to the SELinux HTTP port list with semanage. I have a feeling there is some virsh configuration I need to do, but I don't have a clue what it is. I could blame DNS, but using my VM's external IP:6443 should work in that case, right? I don't know if you've faced something like this before, but maybe you can give some hints to get it working.

Thanks in advance!!