How to Export a 2D Illustration of a 3D Model in OpenSCAD

I've been getting into OpenSCAD lately. For models where precise dimensions matter, I'd rather wrestle with a text-based 3D modeling application than fight with Fusion 360 lockups!

One thing I wanted to do recently was model a sheet-metal part that would be cut from a flat sheet, then folded into its final shape on a brake. Before 3D printing the final design or cutting any metal, I wanted to 'dry fit' the design to make sure my measurements were correct.

The idea was to print a to-scale line drawing of the part on my laser printer, cut it out, fold it, and check that everything lined up.

Some online utilities could take an STL file and turn it into a PNG, but they weren't great, and most wouldn't output a PNG with the same dimensions as the model (the printouts came out too big or too small).

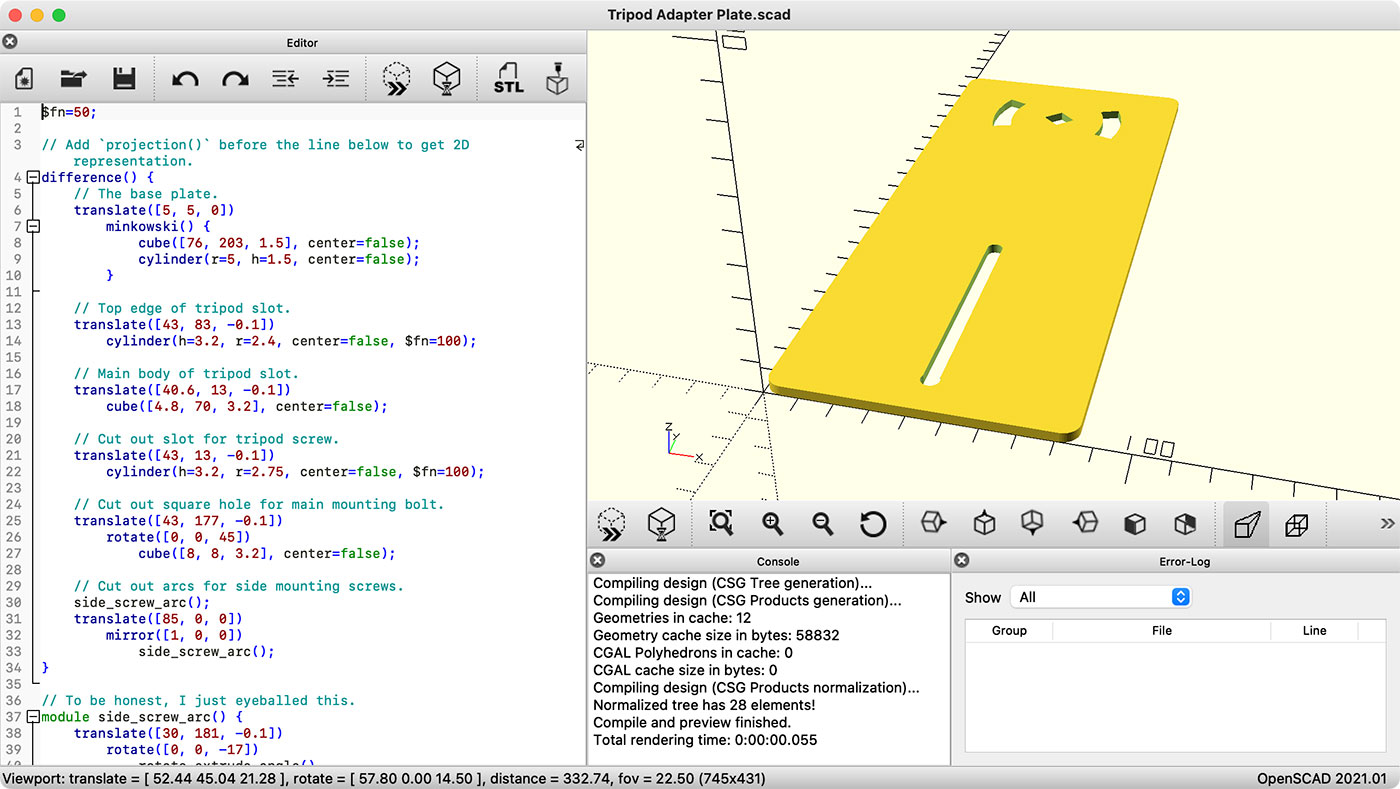
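Part of the problem is that a raster PNG has no inherent physical size. It only prints at true scale if its pixel dimensions match the resolution it's printed at. As a rough sketch of that arithmetic (the 600 DPI value and the 120 mm × 80 mm part size are hypothetical examples, not measurements of my part), here's how many pixels a 1:1 image would need:

```python
MM_PER_INCH = 25.4


def pixels_for_true_scale(length_mm: float, dpi: int) -> int:
    """Return the pixel count needed for length_mm to print at 1:1 at the given DPI."""
    return round(length_mm / MM_PER_INCH * dpi)


# Hypothetical example: a 120 mm x 80 mm flat pattern printed at 600 DPI.
width_px = pixels_for_true_scale(120, 600)
height_px = pixels_for_true_scale(80, 600)
print(width_px, height_px)  # prints "2835 1890"
```

If any tool in the chain resamples the image, or the print dialog scales it to fit the page, the printout drifts away from true size.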

Here was my model: