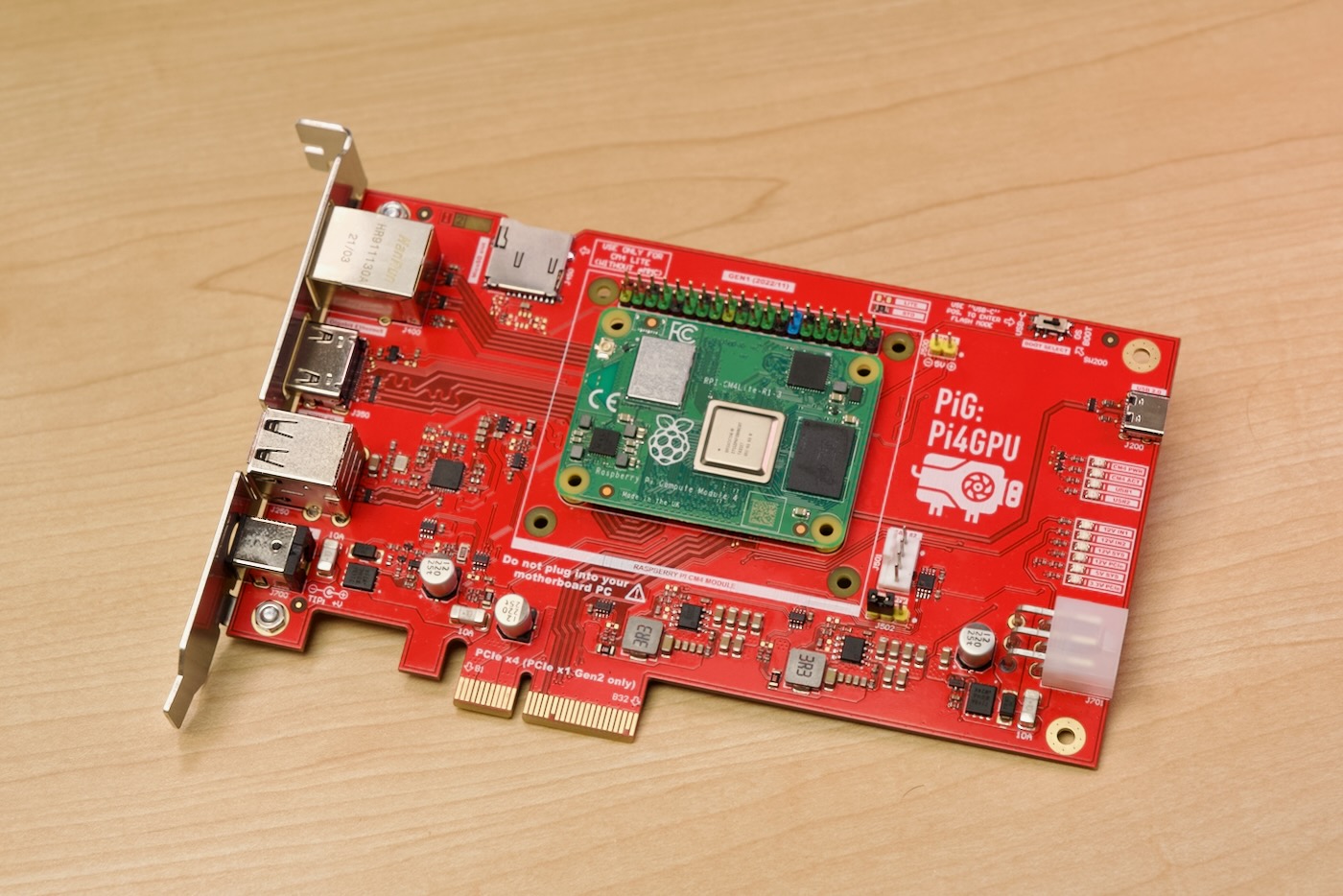

I partnered up with Mirek (of Mirkotronics / @Mirko_DIY on Twitter) to build the Pi4GPU (or 'PiG' for short).

This journey started almost three years ago: right after the Raspberry Pi Compute Module 4 launched, I started testing graphics cards on it.

First, I tried some low-spec cards like the Zotac Nvidia GT 710 and the VisionTek AMD Radeon 5450. They kept locking up regardless of which driver or Linux version I used.

Over the next couple of years, I kept testing more and more cards: over 14 at the time of this writing. The reason? Each card (really, each generation of each vendor's cards) had quirks that made it more or less likely to run on the Pi.

Why's that? Well, the Pi has problems with cache coherency on the PCI Express bus beyond 32 bits, and many (well, nowadays, all) of the drivers expect it to work. That wasn't the first problem, though. Early on, there were issues with the BAR (Base Address Register) space the Pi's OS allocated. Luckily, that could be worked around by recompiling a DTB (Device Tree Blob) file.
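
To make the BAR issue a little more concrete: during enumeration, the OS sizes each memory BAR by writing all 1s to it and reading back which address bits the device lets it set, and every BAR then has to fit, naturally aligned, inside the address window the platform reserves for PCIe. Below is a minimal Python sketch of that arithmetic. The function names, the readback values, and both window sizes are hypothetical, chosen only for illustration; they are not the Pi's real configuration, and the sketch assumes the DTB workaround boils down to enlarging that window.

```python
# Minimal sketch of PCI memory-BAR sizing plus a window fit check.
# All names and numbers here are hypothetical, for illustration only.

FLAG_BITS = 0xF  # low 4 bits of a memory BAR hold type/prefetchable flags


def bar_size(readback_low: int, readback_high: int = 0, is_64bit: bool = False) -> int:
    """Size in bytes requested by a memory BAR.

    readback_low / readback_high are what the device returns after all 1s
    are written to the BAR register(s); a 64-bit BAR spans two registers.
    """
    value = readback_low & ~FLAG_BITS  # drop the flag bits
    width = 32
    if is_64bit:
        value |= readback_high << 32
        width = 64
    mask = (1 << width) - 1
    # Address bits the device kept at 0 encode the size: invert, then add 1.
    return (~value & mask) + 1


def fits_in_window(sizes: list[int], window_bytes: int) -> bool:
    """True if every BAR fits in the window, each aligned to its own size."""
    offset = 0
    for size in sorted(sizes, reverse=True):  # largest first avoids padding
        offset = -(-offset // size) * size  # round up to a multiple of size
        offset += size
    return offset <= window_bytes


# Hypothetical GPU: a 64-bit prefetchable 256 MiB BAR plus a 32-bit 16 MiB BAR.
gpu_bars = [
    bar_size(0xF000000C, 0xFFFFFFFF, is_64bit=True),  # 256 MiB
    bar_size(0xFF000000),                              # 16 MiB
]

# Both window sizes are made-up examples, not the Pi's actual values.
for window in (64 << 20, 1 << 30):
    print(f"{window >> 20} MiB window: fits={fits_in_window(gpu_bars, window)}")
```

With these made-up numbers, the GPU's BARs don't fit in the 64 MiB window but do fit in the 1 GiB one, which is the general shape of the problem a bigger window solves.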

This whole endeavor is what inspired me to create the Raspberry Pi PCIe Device Database, which documents (often in excruciating detail) the travails of bringing up various PCI Express devices on a Raspberry Pi, and now on other ARM SBCs like the Radxa Rock 5 and the Pine64 SOQuartz.

Making a Custom PCB

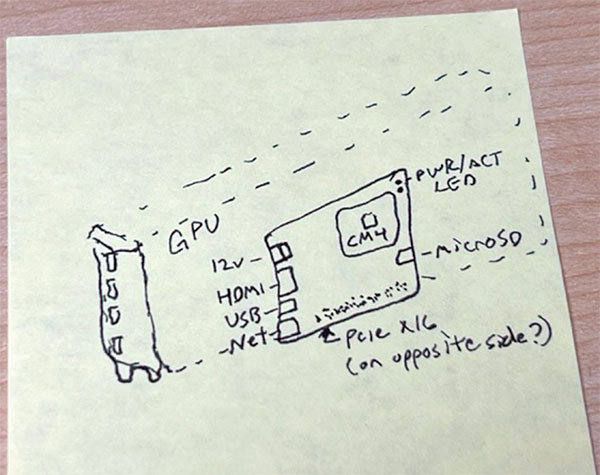

Last year I floated to Mirek the idea of a custom PCB that would 'plug a computer into a graphics card'. He didn't immediately say 'no', so I started pursuing the idea, coming up with the very basic Post-it note sketch pictured above.

Miraculously, through a series of emails, we refined that concept into a working PCB design. Mirek had it printed by JLCPCB, soldered on a bunch of SMD components, and shipped the PCBs (along with some metal brackets he had his friend Adam fabricate) by February.

In the midst of all that, I had another major surgery and wound up working on a so-far-still-secret project that soaked up the entire month of March 2023.

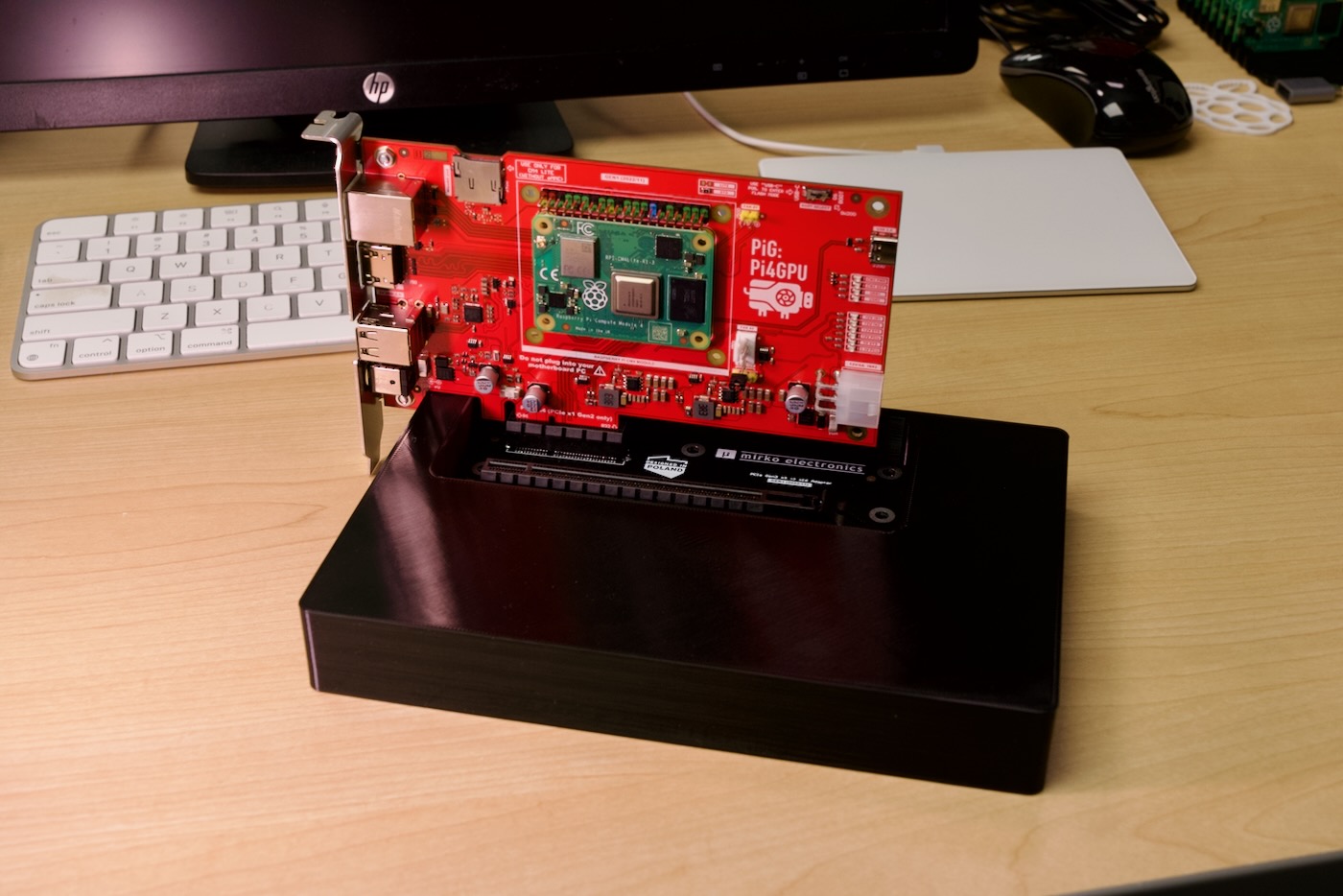

So here we are today: between spare hours in March and a few weeks this month finally testing this thing with all the cards, I've uncovered a minor quirk or two in the build, designed a 3D-printed base (pictured above) that supports PCIe cards up to the size of a behemoth RTX 4090 (pictured below), and documented everything in our open source Pi4GPU repository.

The card has a standard PCIe x4 (physical) edge connector, and it plugs into a special PCIe-to-PCIe adapter board, which fits neatly into the recessed part of a 3D-printed base.

The graphics card goes into the adapter board's x16 slot (x16 physically, but only the x1 pins are connected), and beefier GPUs can be powered by an external power supply if needed.

The Pi4GPU board itself can be powered via USB-C or 6-pin PCIe (internal edge), or via a 12V barrel plug (external edge). It also has two USB 2.0 ports, a full-size HDMI port, and a 1 Gbps LAN port, all exposed through the rear PCIe bracket.

It can physically slot into a motherboard inside a computer, but I don't recommend that, as I haven't tested that configuration.

External GPUs on a Raspberry Pi (or other Arm SBCs)

So we have this card, and I can plug it into a variety of graphics cards... do any of them work? Or, for avid readers of this blog: has anything changed since last year's update?

Well... it's still a bit grim. So far, the only things working are some older AMD cards, using a kernel patch for the radeon graphics driver, and the SM750 GPU used on ASRock Rack's M2_VGA, using this patch to the sm750 driver.

In some positive news, though, Nvidia's proprietary driver no longer hard locks up when it hits the memory errors on the Pi. Now it will attempt to load, but then error out. That would make debugging easier... if the driver source were fully open and available.

Unfortunately, it's not.

And on AMD's side (as well as for other vendors' PCI Express cards, like Google's Coral TPU), there's no desire to either maintain a fork of their drivers or spend time hacking them to work on the Pi's buggy PCIe bus.

And the Pi isn't alone: other SBC SoCs like the Rockchip RK3566 and RK3588 seem to have slightly broken PCIe implementations as well. Cache coherency is the problem. These ARM SoCs, with their heritage in TV boxes and embedded devices, don't have fully working PCI Express implementations.

Other ARM chips do, however, like the Ampere Altra Developer Platform that just arrived courtesy of Ampere.

It has a fully functional PCI Express implementation, and also has 128 lanes of PCI Express Gen 4... which is about a zillion times more bandwidth than the single PCIe Gen 2 lane on the Pi and similar-era SoCs.
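
For a rough sense of the scale behind that 'zillion', here's a back-of-the-envelope Python calculation of raw per-direction link bandwidth, using the standard PCIe signaling rates (5 GT/s per lane with 8b/10b encoding for Gen 2, 16 GT/s per lane with 128b/130b encoding for Gen 4). It ignores packet and protocol overhead, so real-world throughput will be lower; the table and function names are just for illustration.

```python
# Per-lane signaling rate (GT/s) and line-encoding efficiency by PCIe generation.
PCIE_GENS = {
    2: (5.0, 8 / 10),      # 8b/10b encoding
    4: (16.0, 128 / 130),  # 128b/130b encoding
}


def link_gbps(gen: int, lanes: int) -> float:
    """Raw bandwidth in Gbit/s per direction, ignoring protocol overhead."""
    rate_gts, efficiency = PCIE_GENS[gen]
    return rate_gts * efficiency * lanes


pi = link_gbps(2, 1)       # single Gen 2 lane (Pi / CM4)
altra = link_gbps(4, 128)  # 128 Gen 4 lanes (Ampere Altra)

print(f"Pi, Gen 2 x1:       {pi:7.1f} Gbit/s ({pi / 8:6.1f} GB/s)")
print(f"Altra, Gen 4 x128: {altra:7.1f} Gbit/s ({altra / 8:6.1f} GB/s)")
print(f"Ratio: about {altra / pi:.0f}x")
```

By this estimate, the 'zillion' works out to roughly 500x more raw link bandwidth (about 0.5 GB/s versus about 252 GB/s per direction).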

Check out my initial review of the Ampere system: Testing a 96-core Ampere Altra Developer Platform.

Apple's M-series chips might have even more bandwidth (per lane), but there's no easy way to get at the PCI Express expansion on them. Maybe the upcoming Mac Pro will make it happen, but I'm not holding my breath.

Video

Check out my video with even more detail about the project:

Comments

Jeff, off topic: you’d posted a video a while back (seems like within the last year) about setting up a clustered or distributed file system and NAS server using Pis. Despite a bunch of searching and watching videos, I’m not finding the specific one at the moment. Can you remind me what you used? OCFS2? Gluster? Something else entirely?

I used to use Gluster (and I think there's an old video floating around about it from way back when), but now I use Ceph, as Gluster is being deprecated and its development has stopped.

The Ceph video is here: 6-in-1: Build a 6-node Ceph cluster on this Mini ITX Motherboard.

Great post Jeff.

Hi Jeff, I am making a Compute Module 4 carrier board geared towards being a server.

I was wondering if you had any tips, or features I should add to it.

Are you going to be selling your carrier board?

I'm not sure whether we'll end up selling a version of this. I have discussed it a little with Mirek, and it's a possibility; it really depends on how much uptake there would be for a board like it (and whether the CM4 ever gets back in stock, or RK3588S-based alternatives come to market!).

I would love to be able to host CM4s inside PCs on cards similar to these. I would be able to run multiple Pis inside an easy-to-power, easy-to-cool PC form factor instead of in a rat's nest of cables and boxes.

It would be dope if there were a way for the Pis to be accessible from the PC, even if only through a secondary virtual 'network' interface on the Pi with no real PHY.

This is a wonderfully insane thing to do. I love it!

Jeff, in case it's of interest, I tried getting a Radeon R7 250 working on the Rock 5B. As you mentioned, the PCIe interconnect is partially crippled, but something works (http://jas-hacks.blogspot.com/2023/04/rk3588-adventures-with-external-g…). Anyway, let's see what else we can use the PCIe slot for.

I had an AMD Radeon Pro WX 5100 GPU running on the Marvell-based Macchiatobin board in 2019. It worked pretty flawlessly after adapting U-Boot. I also had two Xilinx FPGA boards streaming directly to the video card in this system. Contact me via zeromips.org if you'd like to share experiences.

Are you going to try wiring one up to the Raspberry Pi 5? It has a PCIe Gen 2 x1 lane available that can be bumped up to Gen 3. Seems like it could work!

Yes, I've been testing! This card won't work directly with Pi 5 model B, but hopefully there will be some other options!

Hi Jeff,

Have you by any chance tried this with the Rock 5 compute module?

https://wiki.radxa.com/Rock5/CM5

It’s pin-compatible with the CM4, so it should be a drop-in replacement?

MJ

I still haven't found one available for sale yet :(

Would love to test it out though.

Hi,

I need a graphics card with DisplayPort and USB to connect to a server that doesn't have any onboard graphics. I need access via a physical KVM switch, and the server only has an iLO port and an iLO service port :(

Any suggestions?

I only have one PCIe 4.0 x16 slot (full height, half length).

Thanks, Tommy