I will be posting a few videos discussing cluster computing with the Raspberry Pi in the next few weeks, and I'm going to post the video + transcript to my blog so you can follow along even if you don't enjoy sitting through a video :)

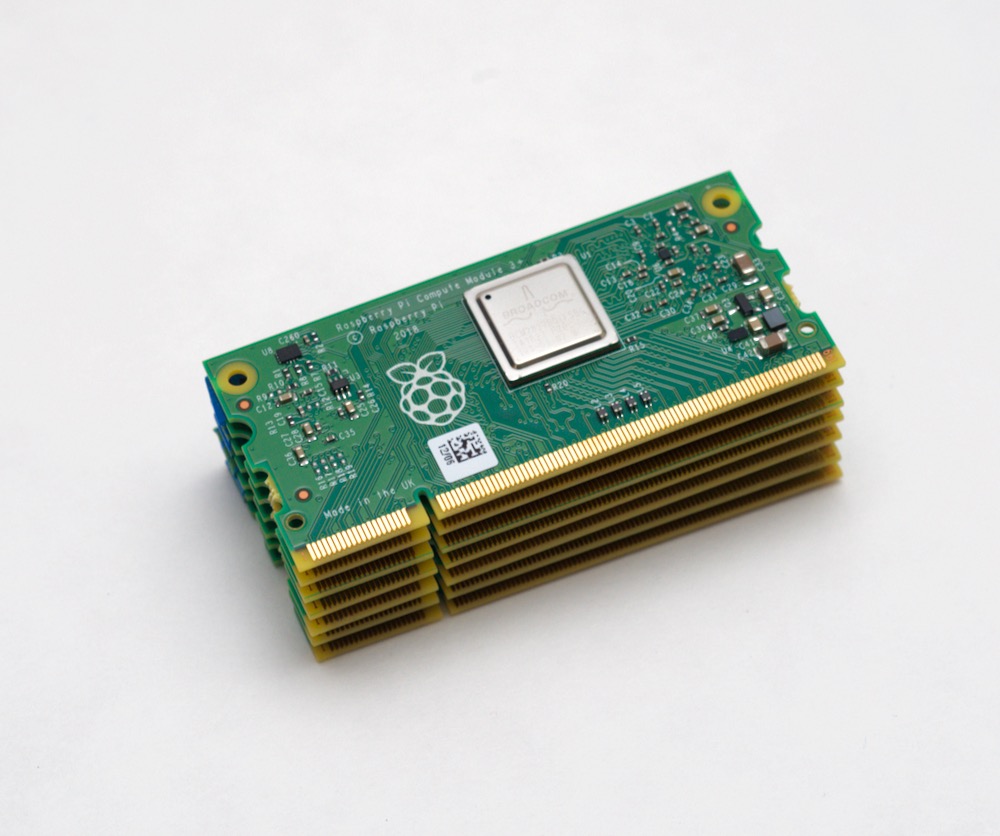

This is a Raspberry Pi Compute Module.

And this is a stack of 7 Raspberry Pi Compute Modules.

It's the same thing as a Raspberry Pi model B, but it drops all the IO ports to make for a more flexible form factor, which the Raspberry Pi Foundation says is "suitable for industrial applications". But in my case, I'm interested in using it to build a cluster of Raspberry Pis.

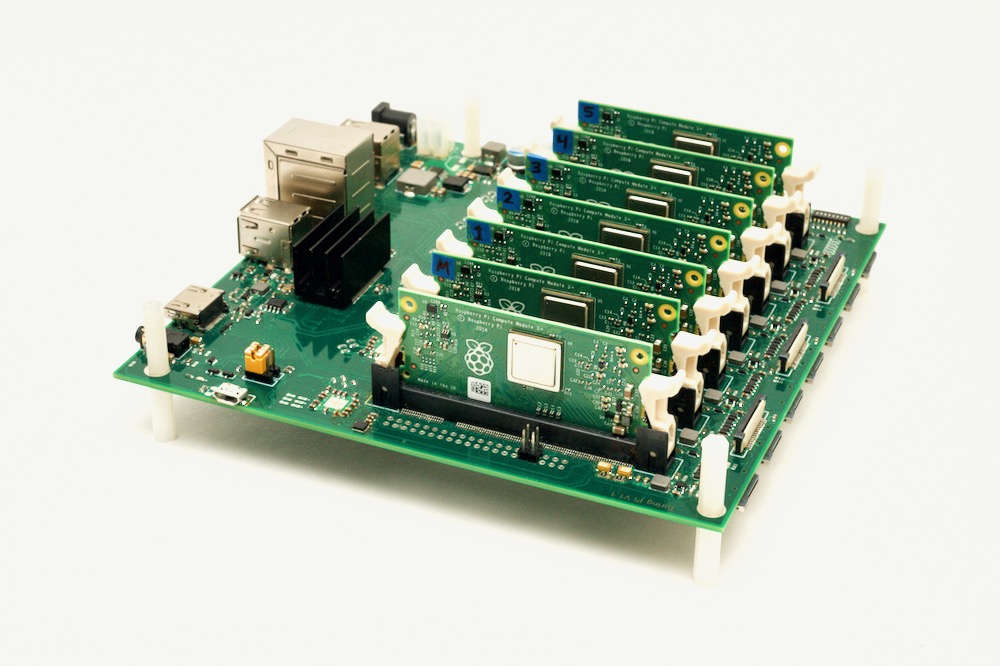

I'm going to explore the concept of cluster computing using Raspberry Pis, and I'm excited to be in possession of a game-changing Raspberry Pi-based cluster board, the Turing Pi.

I'll get to the Turing Pi soon, but in this first episode, I wanted to cover why I love using Raspberry Pis for clusters.

But first, what is a cluster?

Cluster Computing

A lot of people try to solve complex problems with computers. Sometimes they do things like serve a web page to a huge number of visitors. This requires lots of bandwidth, and lots of 'backend' computers to handle each of the millions of requests. Some people, like weather forecasters, need to find the results of billions of small calculations.

There are two ways to speed up these kinds of operations:

- By scaling vertically, where you have a single server, and you put in a faster CPU, more RAM, and faster connections.

- By scaling horizontally, where you split up the tasks and use multiple computers.

Early on, systems like Cray supercomputers took vertical scaling to the extreme. One massive computer that cost millions of dollars was the fastest computer for a time. But this approach has some limits, and having everything invested in one machine means downtime when it needs maintenance, and limited (often painfully expensive) upgrades.

In most cases, it's more affordable—and sometimes, the only way possible—to scale horizontally, using many computers to handle large calculations or lots of web traffic.
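To make "split up the tasks and use multiple computers" a little more concrete, here is a minimal sketch in Python. It is only an illustration: worker threads on one machine stand in for separate computers, and the function names (`split_into_chunks`, `node_work`, `run_on_cluster`) and the squaring "calculation" are made up for this example. A real cluster would send each chunk over the network to a different node.

```python
from concurrent.futures import ThreadPoolExecutor


def split_into_chunks(items, num_nodes):
    """Divide items into num_nodes roughly equal, contiguous chunks."""
    chunk_size, remainder = divmod(len(items), num_nodes)
    chunks = []
    start = 0
    for node in range(num_nodes):
        # The first `remainder` chunks each take one extra item.
        end = start + chunk_size + (1 if node < remainder else 0)
        chunks.append(items[start:end])
        start = end
    return chunks


def small_calculation(value):
    """Placeholder for one of the 'billions of small calculations'."""
    return value * value


def node_work(chunk):
    """What a single node would do: process only its share of the work."""
    return sum(small_calculation(v) for v in chunk)


def run_on_cluster(items, num_nodes):
    """Fan the chunks out to workers, then combine the partial results."""
    chunks = split_into_chunks(items, num_nodes)
    # Threads are a stand-in for nodes here (an assumption of this sketch).
    with ThreadPoolExecutor(max_workers=num_nodes) as pool:
        partial_results = list(pool.map(node_work, chunks))
    return sum(partial_results)


if __name__ == "__main__":
    data = list(range(1000))
    print(run_on_cluster(data, num_nodes=4))
```

The part that carries over to real clusters is the shape of the work: divide, process each piece independently, then combine. Adding a node just means cutting the input into one more chunk.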

Clustering has become easier with the advent of Beowulf-style clusters in the 90s and newer software like Kubernetes, which seamlessly distributes applications across many computers, instead of manually placing applications on different computers.

Even with this software, there are two big problems with scaling horizontally—especially if you're on a budget:

- Each computer costs a bit of money, and requires individual power and networking. For a few computers this isn't a big problem, but when you start having five or more computers, it's hard to keep things tidy and cool.

- Managing the computers, or 'nodes' in cluster parlance, is easy when there are one or two. But scaled up to five or ten, even doing simple things like rebooting servers or kicking off a new job requires software to coordinate everything.
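As a rough picture of why coordination software becomes necessary, the sketch below builds (but does not run) the SSH command you would have to issue on every node just to reboot a five-node cluster. The hostnames and the `pi` user are hypothetical placeholders, and this is not a substitute for a real tool like Ansible. It only shows how the manual work grows with the node count.

```python
import shlex

# Hypothetical hostnames; substitute whatever your nodes are actually called.
NODES = [f"pi-node-{n}.local" for n in range(1, 6)]


def build_ssh_commands(nodes, remote_command, user="pi"):
    """Return one ssh argument list per node, without executing anything."""
    return [["ssh", f"{user}@{host}", remote_command] for host in nodes]


if __name__ == "__main__":
    # Dry run: print the commands a coordinator would have to issue.
    for argv in build_ssh_commands(NODES, "sudo reboot"):
        print(shlex.join(argv))
```

Every extra node adds another command to run, another result to check, and another place for things to fail halfway, which is the gap that configuration management and orchestration tools fill.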

The Raspberry Pi Dramble

In 2015, I decided to set up my first 'bramble'. A bramble is a cluster of Raspberry Pi servers. "Why is it called a bramble?" you may ask. Well, because a natural cluster of raspberries that you could eat is called a 'bramble'.

So I started building a bramble using software called Ansible to install a common software stack across the servers to run Drupal. I set up Linux, Apache, MySQL, and PHP, which is commonly known as the 'LAMP' stack.

Since my bramble ran Drupal, I made up the portmanteau 'dramble', and thus the Raspberry Pi Dramble was born.

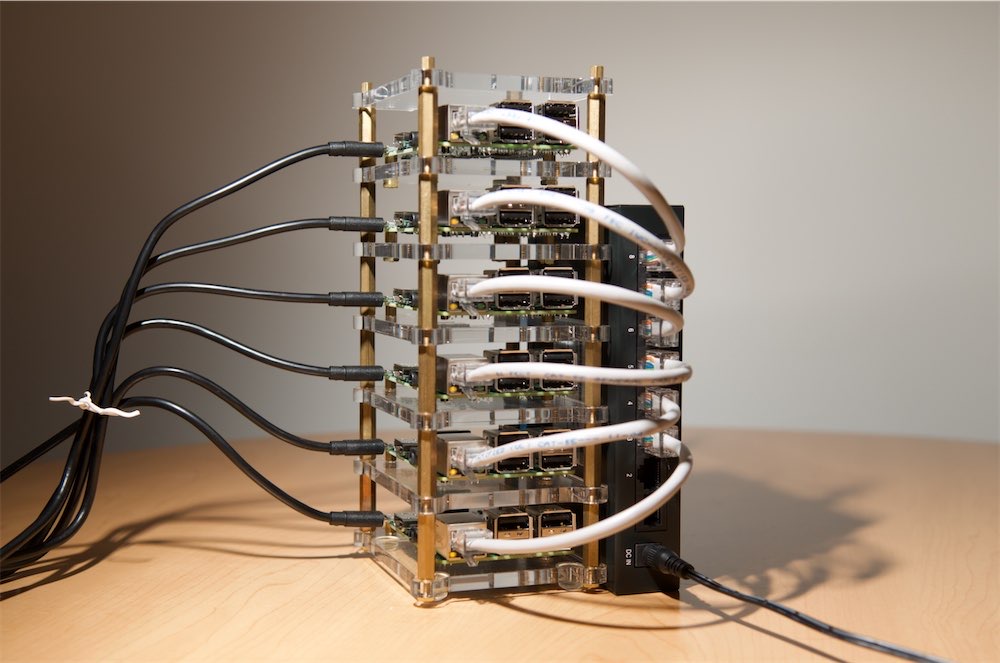

The first version of the cluster had six nodes, and I had a bunch of micro USB cables plugged into a USB power adapter, plus a bunch of Ethernet cables plugged into an 8-port Ethernet switch.

When the Raspberry Pi model 3 came out, I trimmed it down to 5 nodes.

Then, when the Raspberry Pi Zero was introduced, which is the size of a stick of bubble gum, I bought five of them and made a tiny cluster using USB WiFi adapters, but realized quickly the old processor on the Zero was extremely underpowered, and I gave up on that cluster.

In 2017, I decided to start using Kubernetes to manage Drupal and the LAMP stack, though I still used Ansible to configure the individual Pis and get Kubernetes installed on them.

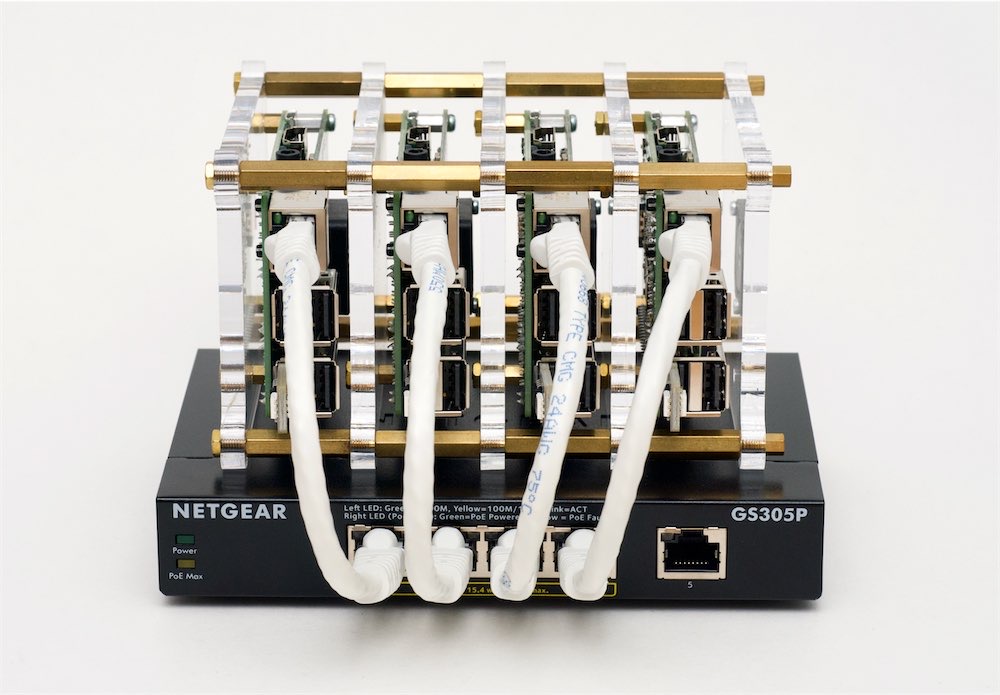

And today's Dramble, which is made up of four Raspberry Pi model 4 boards, uses the official Power-over-Ethernet (PoE) board, which allowed me to drop the tangle of USB power cables, but requires a more expensive PoE switch.

The Pi Dramble isn't all serious, though. I've had some fun with it, including making a homage to an old movie I enjoyed:

Through this whole experience, I've documented every aspect of the build, including all the Ansible playbooks, as an open source project that's linked from pidramble.com, and many others have followed these plans and built the Pi Dramble on their own!

Why Raspberry Pi?

This is all to say, I've spent a lot of time managing a cluster of Raspberry Pis, and the first question I'm usually asked is "why did you choose Raspberry Pis for your cluster?"

That's a very good question.

An individual Raspberry Pi is not as fast as most modern computers. And it has limited RAM (even the latest Pi model 4 has, at most, 4 GB of RAM). And it's not great for speedy or reliable disk access, either.

It's slightly more cost-effective and usually more power-efficient to build or buy a small NUC ("Next Unit of Computing") machine that has more raw CPU performance, more RAM, a fast SSD, and more expansion capabilities.

But is building some VMs to simulate a cluster on an NUC fun?

I would say, "No." Well, not as much as building a cluster of Raspberry Pis!

And managing 'bare metal' servers instead of virtual machines also requires more discipline for provisioning, networking, and orchestration. Those three skills are very helpful in many modern IT jobs!

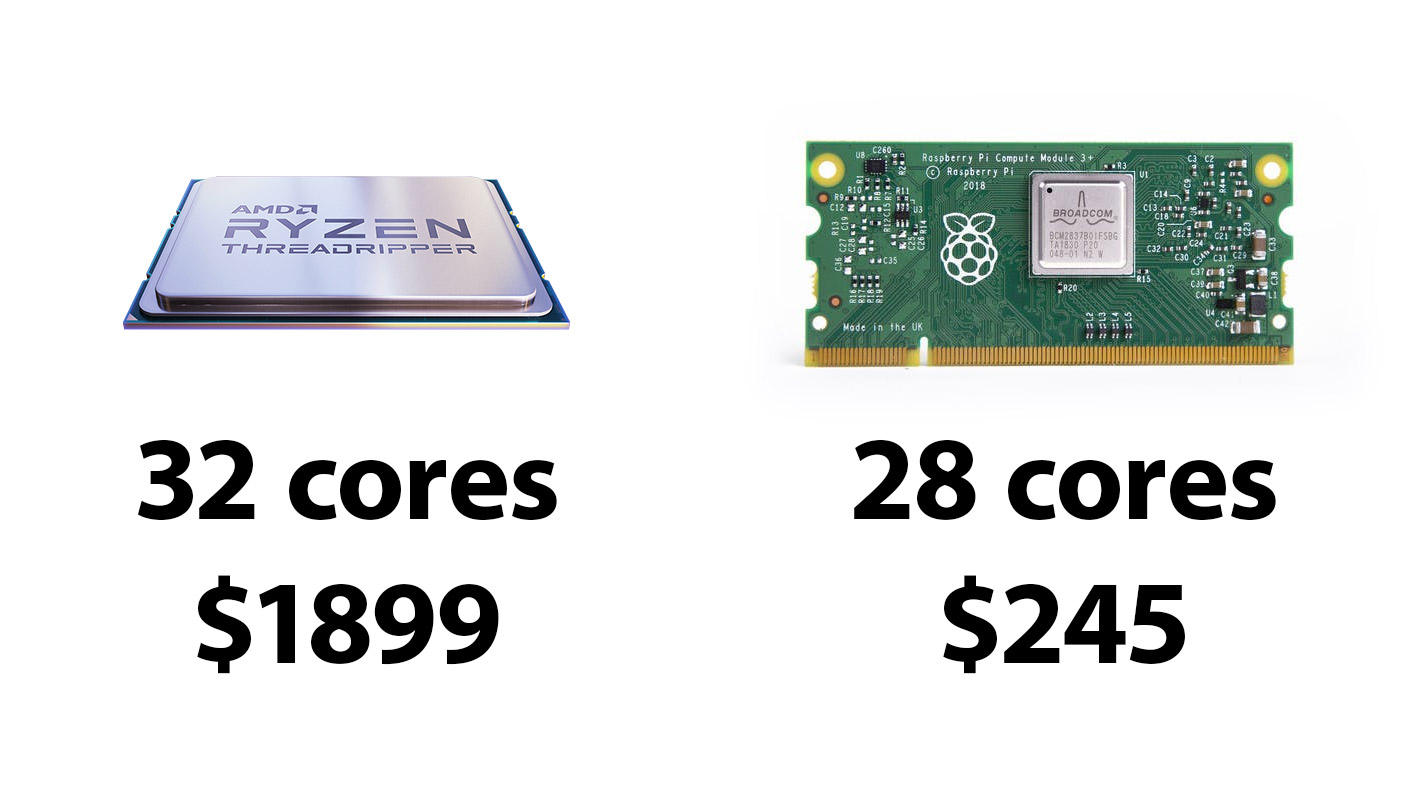

Also, while you may want to optimize for pure performance, there are times, like when building Kubernetes clusters, where you want to optimize for CPU core count. If you wanted to build a single computer with 32 CPU cores, you might consider the AMD ThreadRipper 3970x, which has 32 cores. Problem is, the processor costs nearly $2,000, and that's just the processor—the rest of the computer's components would put you past $3,000 total for this rig.

For the Raspberry Pi, each compute board has a 4-core CPU, and putting together 7 Pis nets you 28 cores. That's almost the same number of cores, but it costs less than $300 total. Even with the added cost of a Turing Pi and a power supply, that's nearly the same number of CPU cores for 1/4 the cost!

Yes, I know 1 ThreadRipper core ≠ 1 Pi CM core; but in many K8s applications, a core is a core, and the more the merrier. Not all operations are CPU-bound, so not all of them would benefit from 4-8x faster raw throughput or the many other little niceties the ThreadRipper offers (like more cache).
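Taking the rough figures quoted above at face value, the cost-per-core comparison can be worked out in a few lines of Python. The dollar amounts are the approximate ones from the text ("past $3,000" is a lower bound and "less than $300" an upper bound), and the "implied cluster budget" is simply a quarter of the ThreadRipper build cost, not a price taken from any parts list.

```python
# Figures quoted in the text above (approximate, as stated there).
THREADRIPPER_CORES = 32
THREADRIPPER_BUILD_COST = 3000  # "past $3,000 total", so a lower bound

PI_MODULES = 7
CORES_PER_PI = 4
PI_MODULES_COST = 300  # "less than $300 total" for the 7 modules alone


def cost_per_core(total_cost, cores):
    """Dollars spent per CPU core."""
    return total_cost / cores


pi_cores = PI_MODULES * CORES_PER_PI

if __name__ == "__main__":
    tr = cost_per_core(THREADRIPPER_BUILD_COST, THREADRIPPER_CORES)
    pi = cost_per_core(PI_MODULES_COST, pi_cores)
    print(f"Pi cluster cores: {pi_cores}")
    print(f"ThreadRipper build: ${tr:.2f} per core")
    print(f"Pi modules only: ${pi:.2f} per core")
    # "1/4 the cost" implies a complete cluster budget of about this much.
    print(f"Implied cluster budget: ${THREADRIPPER_BUILD_COST / 4:.0f}")
```

This counts cores only. It says nothing about how much work each core gets done, which is exactly the caveat above.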

Finally, one of the most important lessons I've learned working with Arduinos and Raspberry Pis is this: working with resource constraints like limited RAM or slow IO highlights inefficiencies in your code, in a way you'd never notice if you always build and work on the latest Core i9 or ThreadRipper CPU with gobs of RAM and hundreds of thousands of IOPS!

If you can't get something to run well on a Raspberry Pi, consider this: many smartphones and low-end computers have similar constraints, and people using these devices would have the same kind of experience.

Additionally, even on fast cloud computing instances, you'll run into network and IO bottlenecks from time to time, and if your application fails in those situations, you could be in for a world of pain. Getting your application to run on a Raspberry Pi can help you identify these problems quickly.

Why Turing Pi?

So, getting back to the Turing Pi: what makes this better than a cluster of standard Raspberry Pis, like the Pi Dramble?

Well, subscribe to my YouTube channel—I'll explore what makes the Turing Pi tick and how to set up a new cluster in my next video! If you liked this content and want to see more, consider supporting me on GitHub or Patreon.

Comments

I'm totally in. Pre-ordered my Turing Pi and 7 3+ compute modules. WHY? I'm an AWS Architect building with Terraform and Ansible. I've been following your work for a while, and I want to build my own home lab and dig into your code. THANKS.

I'm building an 11+ Raspberry Pi Zero bramble, despite the underpowered processor. Needed cheap, light, fast, and plug and play. Would appreciate pointers on which OS to use and Ethernet interface tips. Would you set up one Pi as a server and one as a brain, and the rest as drone compute modules, with static IP addresses? Or would you enable the USB gadget feature and plug them all into a USB strip whose master was the "One Pi to Rule Them All"?

I'm building a cluster with 5 Raspberry Pi 4B (4 GB RAM) and 2 Raspberry Pi Zero boards for 3D rendering and Docker. That's 22 cores total, and when rendering I will overclock the 5 Raspberry Pi 4Bs to 2.0 GHz.

I've been seriously considering a Pi 4 cluster for Blender rendering, and since I'll have a cluster, I want to explore its other capabilities too. And of course, compare a Pi cluster's performance with other more typical compute platforms. I was poking around the benchmark article on your pidramble cluster. Do I understand this, "There are numerous difficulties in comparing a 4-node physical cluster with the equivalent of 16 CPU cores to a single node running a virtualized cluster of 8 vCPU cores on a 2-core i7 processor" correctly? I read that statement alongside the benchmark results this way: a 4-node Raspberry Pi 3 B+ cluster can beat a 6th-gen i7 dual-core MacBook Pro in Drupal tasks. So, based on the performance of the Pi 4, a 4-node cluster would be able to basically double the performance of the same MacBook Pro. Is that what I'm seeing? Thanks for your hard work and great documentation on all of this!

I noticed you didn't have any form of cooling for all the clusters pictured here (and all the individual ones). I know the raspberry pi 3s don't need cooling, but if you were to get some pi 4s would you consider cooling them down? It might add some extra performance, especially if you overclock them.

For the CM4, if it's in open air, it can run at the default clock without extra cooling as long as ambient temps are reasonable (< 80°F). But I would recommend at least a fan over the boards, if not a heat sink as well.

The Compute Module 4 definitely needs both if you want to run it with an overclock in any kind of enclosed space. I'm working on a new Turing Pi 2 build right now :)