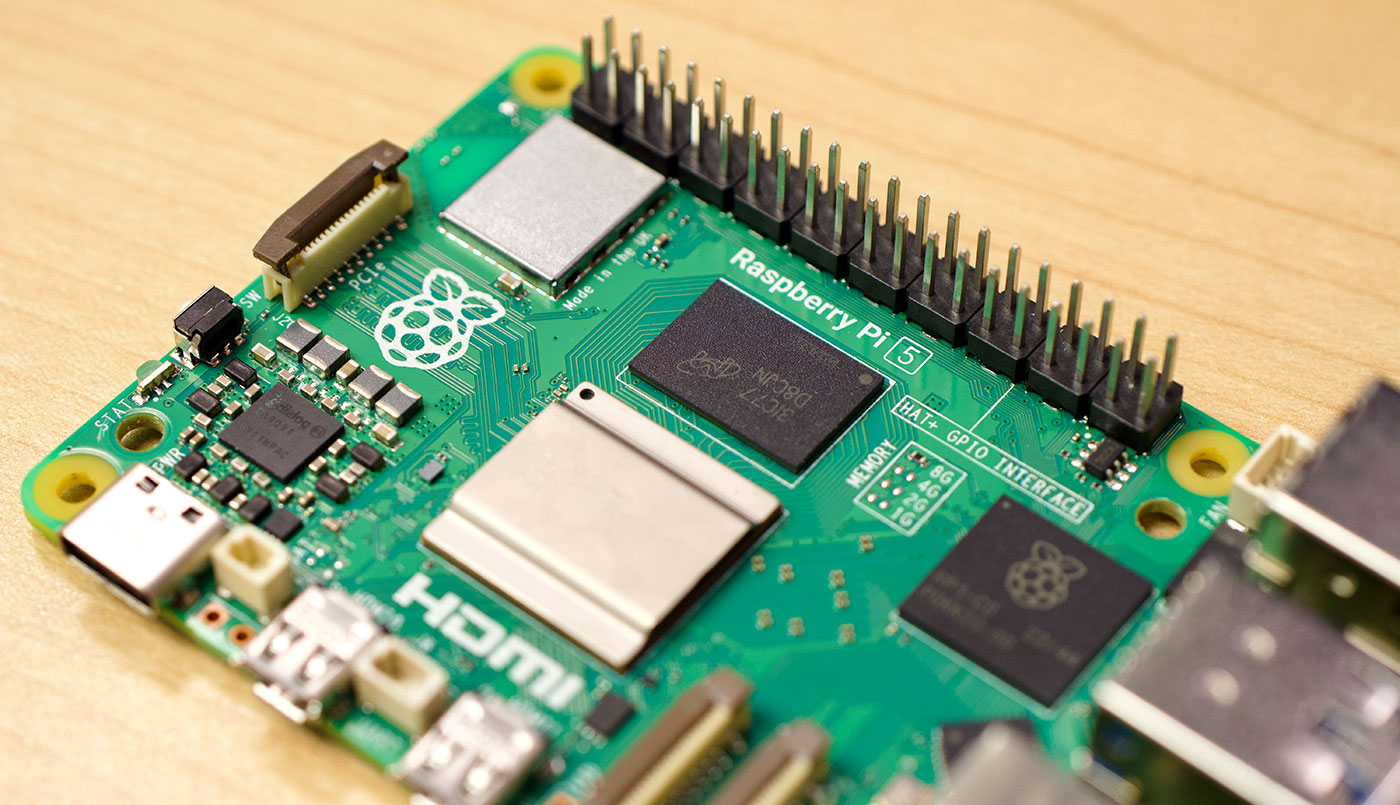

nmcli for WiFi on Raspberry Pi OS 12 'Bookworm'

If you haven't already, check out my full video on the Raspberry Pi 5, which inspired this post.

Raspberry Pi OS 12 'Bookworm' is coming alongside the release of the Raspberry Pi 5, and with it comes a fairly drastic change: instead of using wpa_supplicant for WiFi interface management, everything network-related now runs through NetworkManager and its command-line tool, nmcli.

NetworkManager is widely adopted across Linux distributions these days, and nmcli makes managing WiFi, LAN, and other network connections much simpler.
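To give a feel for what that looks like in practice, here's a rough sketch of a few common WiFi tasks with nmcli. The SSID and passphrase are placeholders, and the `run` helper and `DRY_RUN` variable are my own additions for illustration (not part of nmcli): by default the script only prints each command, so you can read it over before running anything for real.

```shell
#!/usr/bin/env bash
# Sketch of common nmcli WiFi tasks.
# SSID and PASSWORD are hypothetical placeholders; substitute your own.
SSID="MyNetwork"
PASSWORD="MyPassphrase"

# Hypothetical helper: print each command unless DRY_RUN=0 is set,
# in which case the command is actually executed.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Make sure the WiFi radio is enabled
run nmcli radio wifi on

# Scan for and list nearby access points
run nmcli device wifi list

# Connect to a network (changing connections may require root)
run sudo nmcli device wifi connect "$SSID" password "$PASSWORD"

# Show the saved connection profiles
run nmcli connection show
```

Saved as a script, this prints the four commands as-is; setting `DRY_RUN=0` in the environment runs them instead. On a real Pi you would more likely just type the `nmcli` lines directly.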